Part 0: Prologue, what we're building and how to think about it

What you build

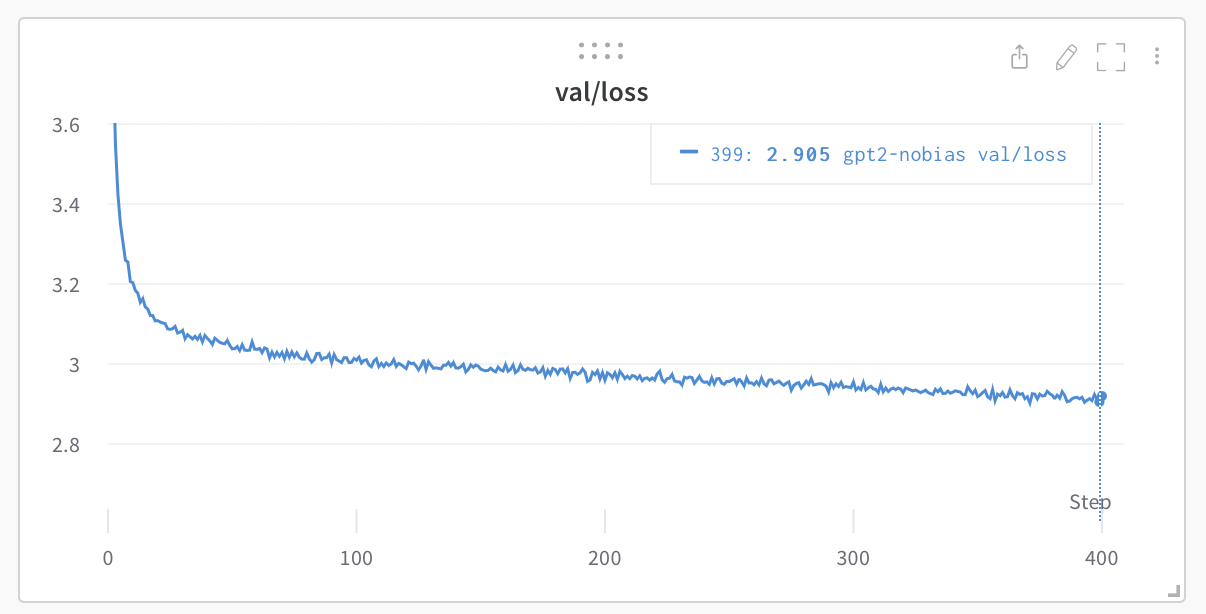

By the end you have two things. The first is code: a working autograd engine (a Tensor class that records the operations done to it and replays them in reverse to compute gradients) and a GPT-2 built on top of it, with an AdamW optimizer and a training loop. It runs on a CPU, in NumPy, with no deep learning framework underneath. It trains. Loaded with the real GPT-2 124M weights, it reproduces the reference perplexity numbers exactly: WikiText-103 perplexity 26.57, LAMBADA accuracy 38.00% (tests/benchmark.md).

The second thing is the more valuable one: the mental model to build such systems yourself. Code you can copy. The reasoning that produces correct code is what transfers. A deep network looks like one enormous function, but it is a few dozen small functions stacked up, and you only ever work on one at a time. This course is built so that when you finish, you could derive the backward pass of an operation you have never seen, implement it, and know whether you got it right.

Who this is for

Someone with little or no calculus background. If you have never taken a derivative, that is the expected starting point, not a gap to apologize for. Part 1 begins with limits: what it means for a quantity to approach a value, why the slope of a curve is a limit, and how that single idea extends to functions of many variables. Every piece of mathematics the model needs is built from there. We do not assume; we derive.

What you do need is comfort with arrays (indexing, shapes, basic NumPy) and willingness to work through algebra with a pen. The derivations are not hard to follow, but they are not spectator material. Watching a derivation is not the same as doing one.

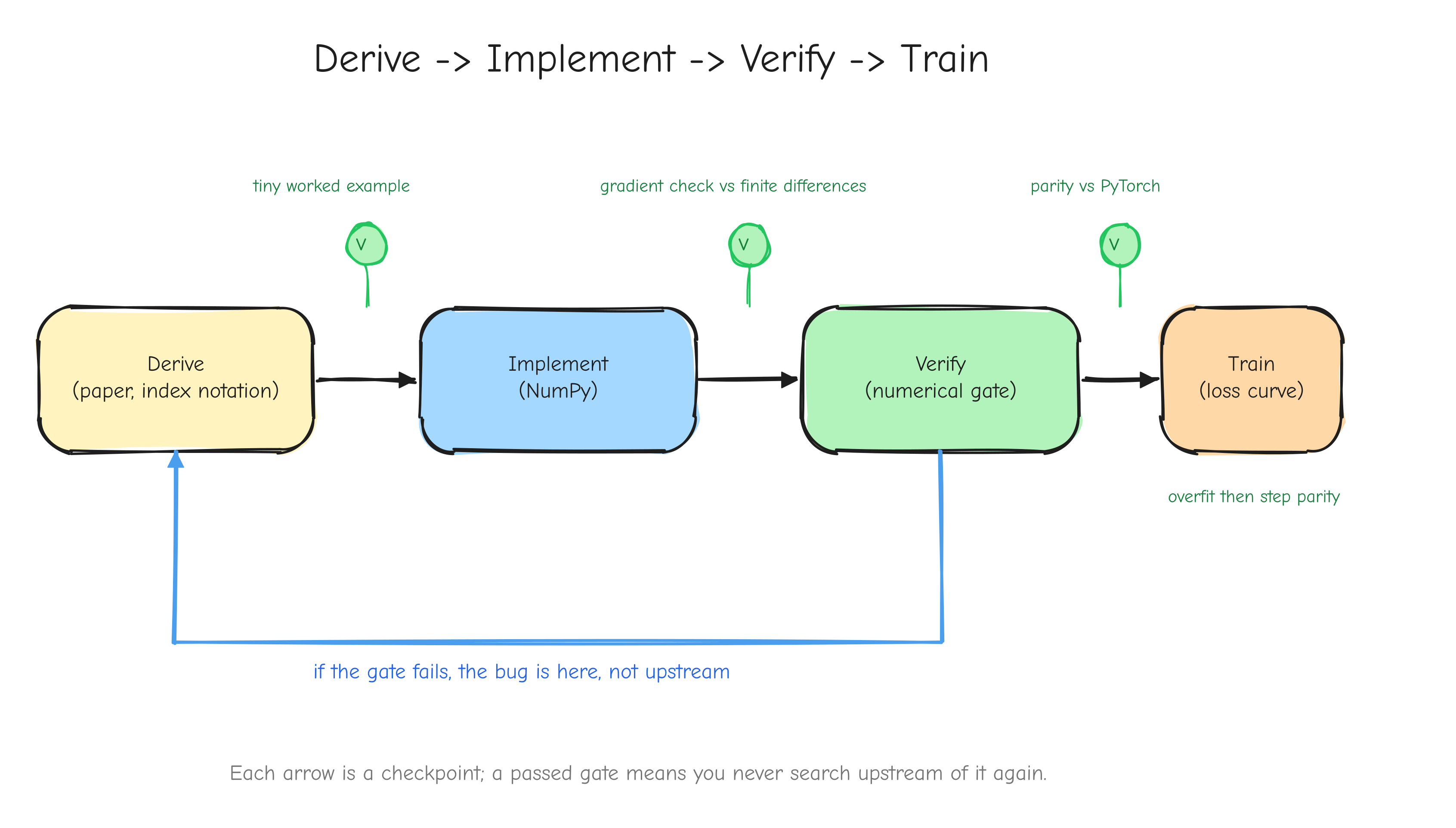

The central thesis: derive, then implement, then verify, then train

Four verbs, in that order. The order is the method.

Derive. Before writing code for an operation, work out its gradient by hand, on paper, in index notation. You produce an equation, not a guess. This is slow at first. It is also the only step that gives you certainty about what the code is supposed to compute.

Implement. Translate the derived equation into NumPy. Because you derived it, implementation is close to transcription. You know the shape of the answer before you write the line.

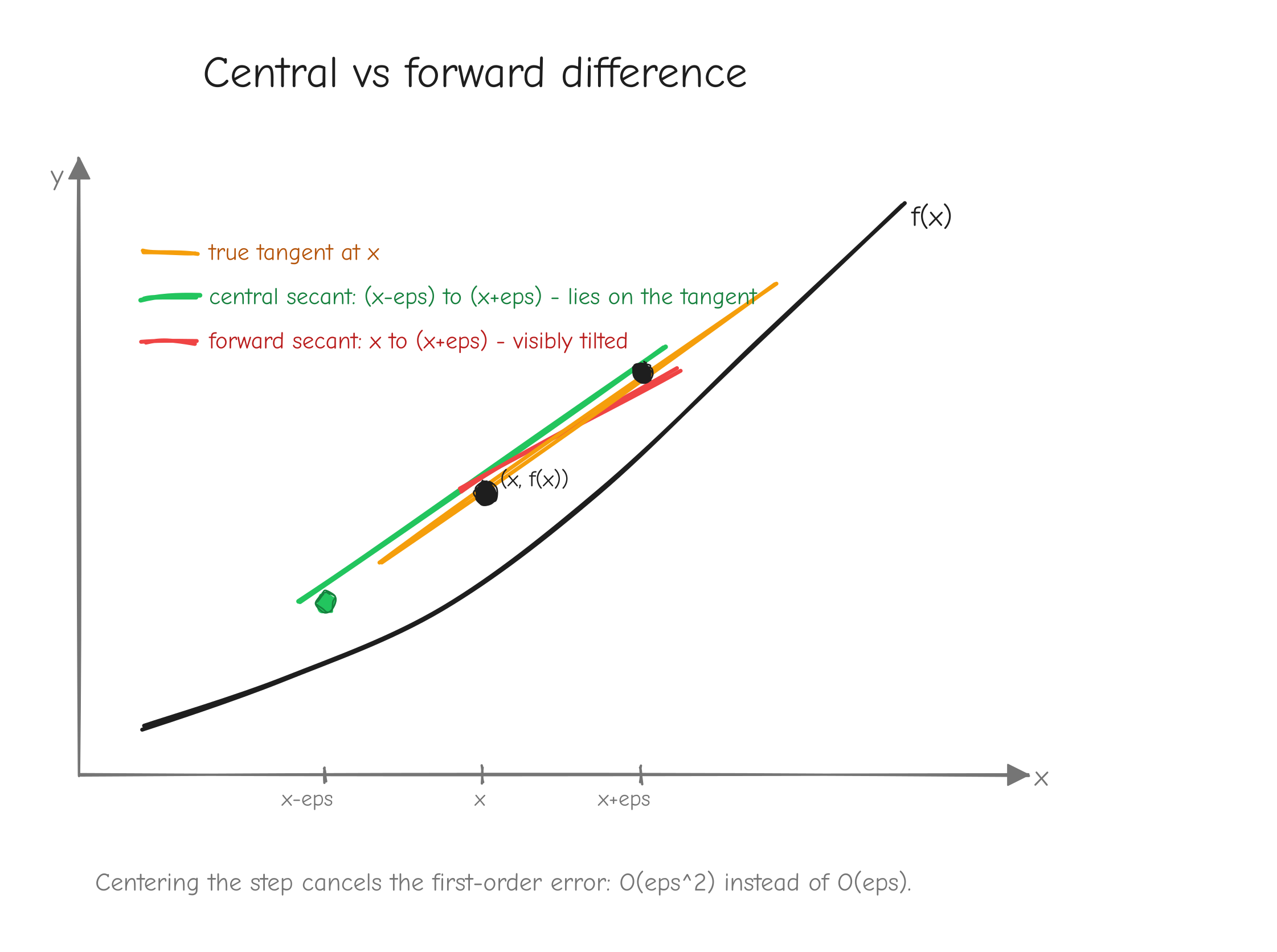

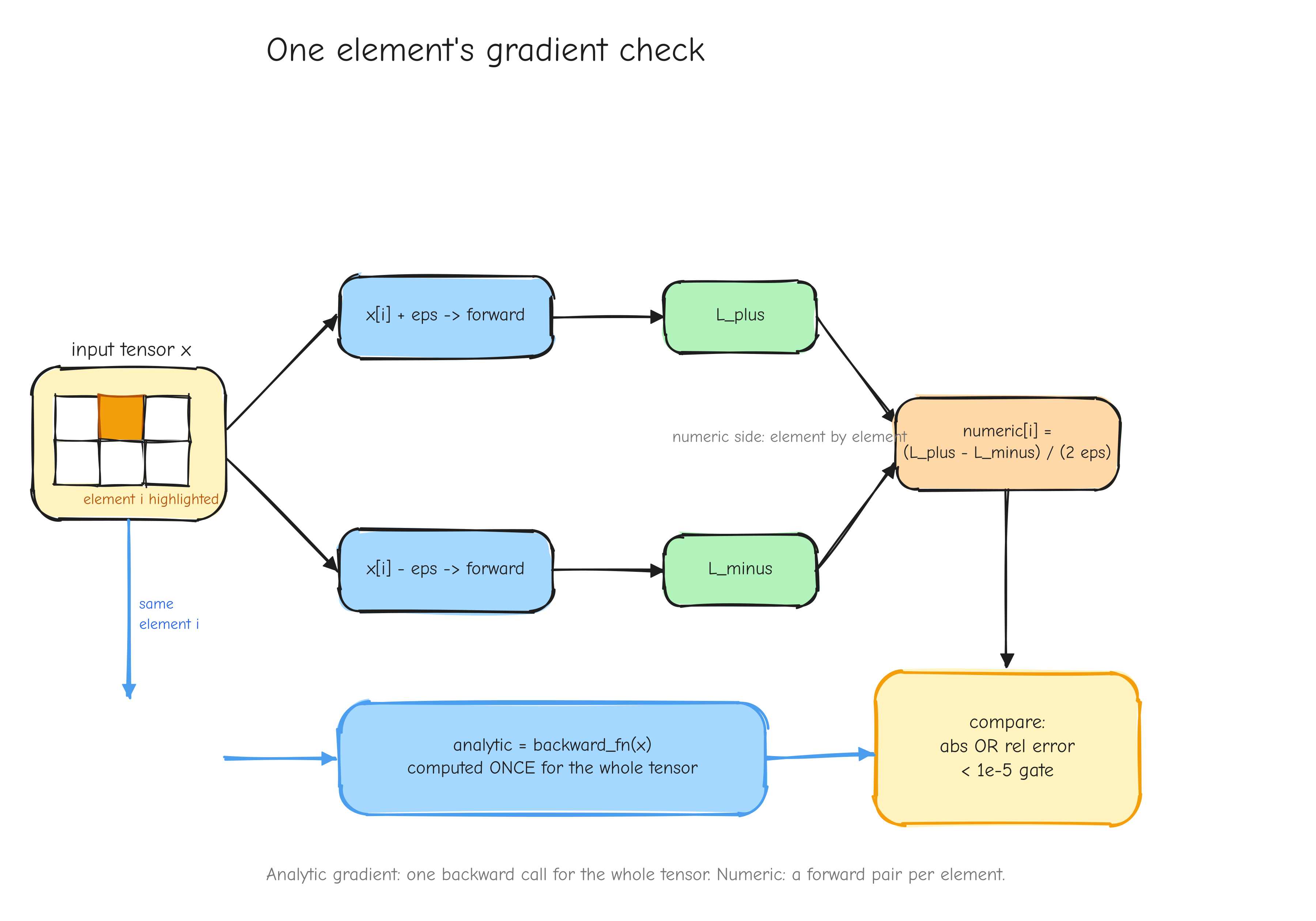

Verify. Check the implementation numerically before trusting it. A derived gradient can still be transcribed wrong. The numerical gradient check (Part 7, Verifying the math) perturbs each input slightly, measures how the loss changes, and compares that to the gradient the code returned. If they disagree, something is wrong, and you find out now.

Train. Only after the components are verified do you assemble them and run the optimizer. Training is where small errors that survived the earlier steps would otherwise hide for hours before surfacing as a loss curve that drifts.

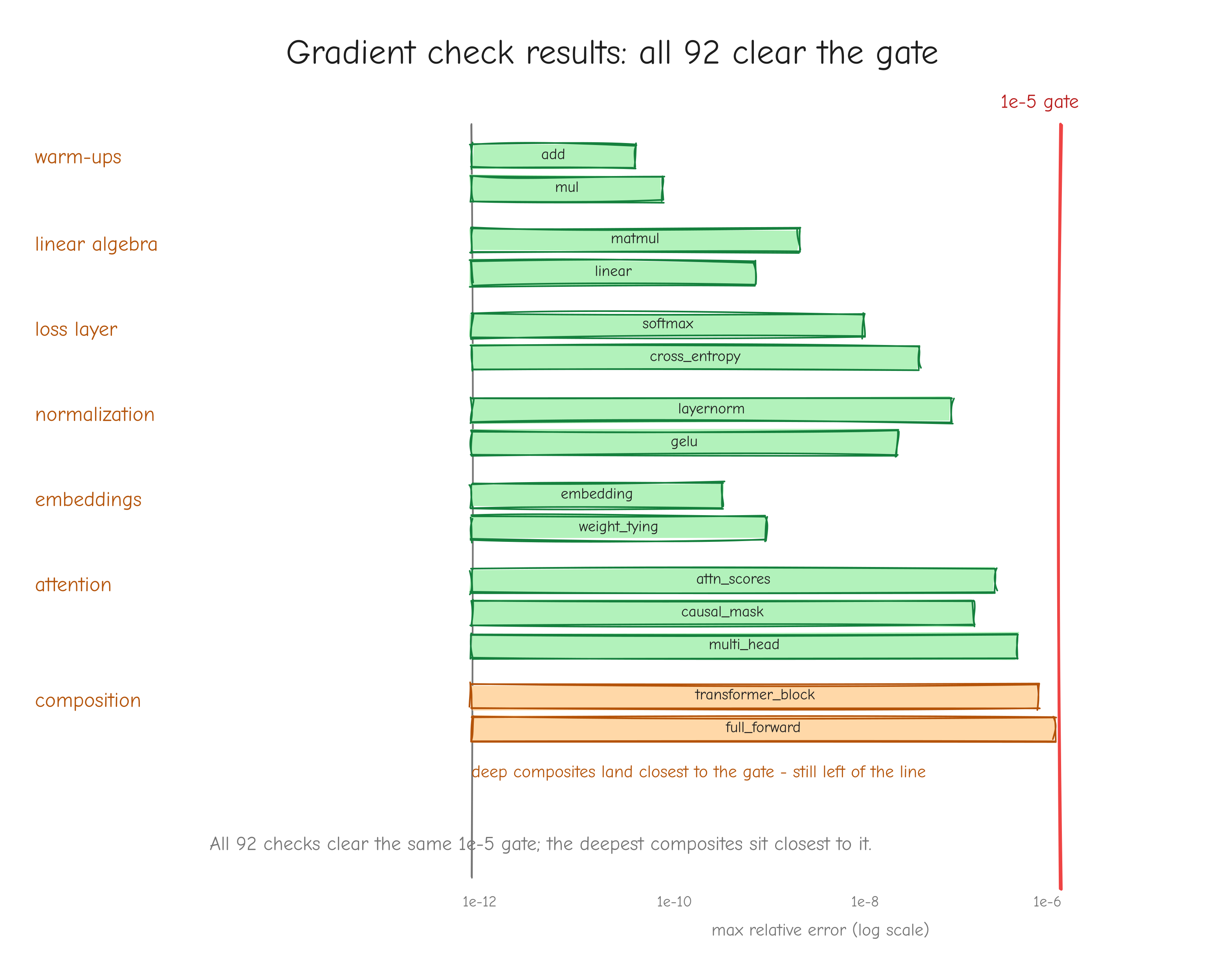

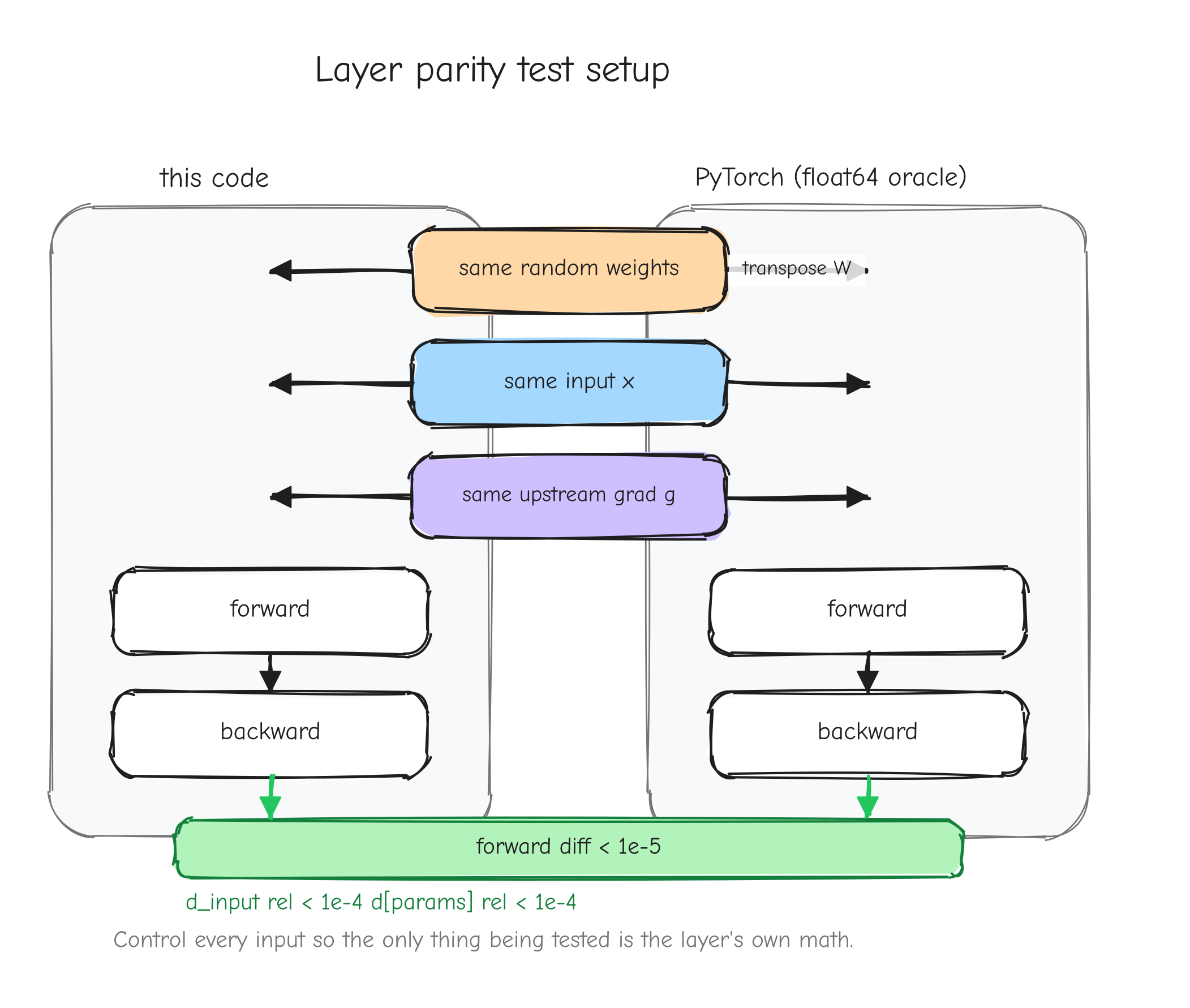

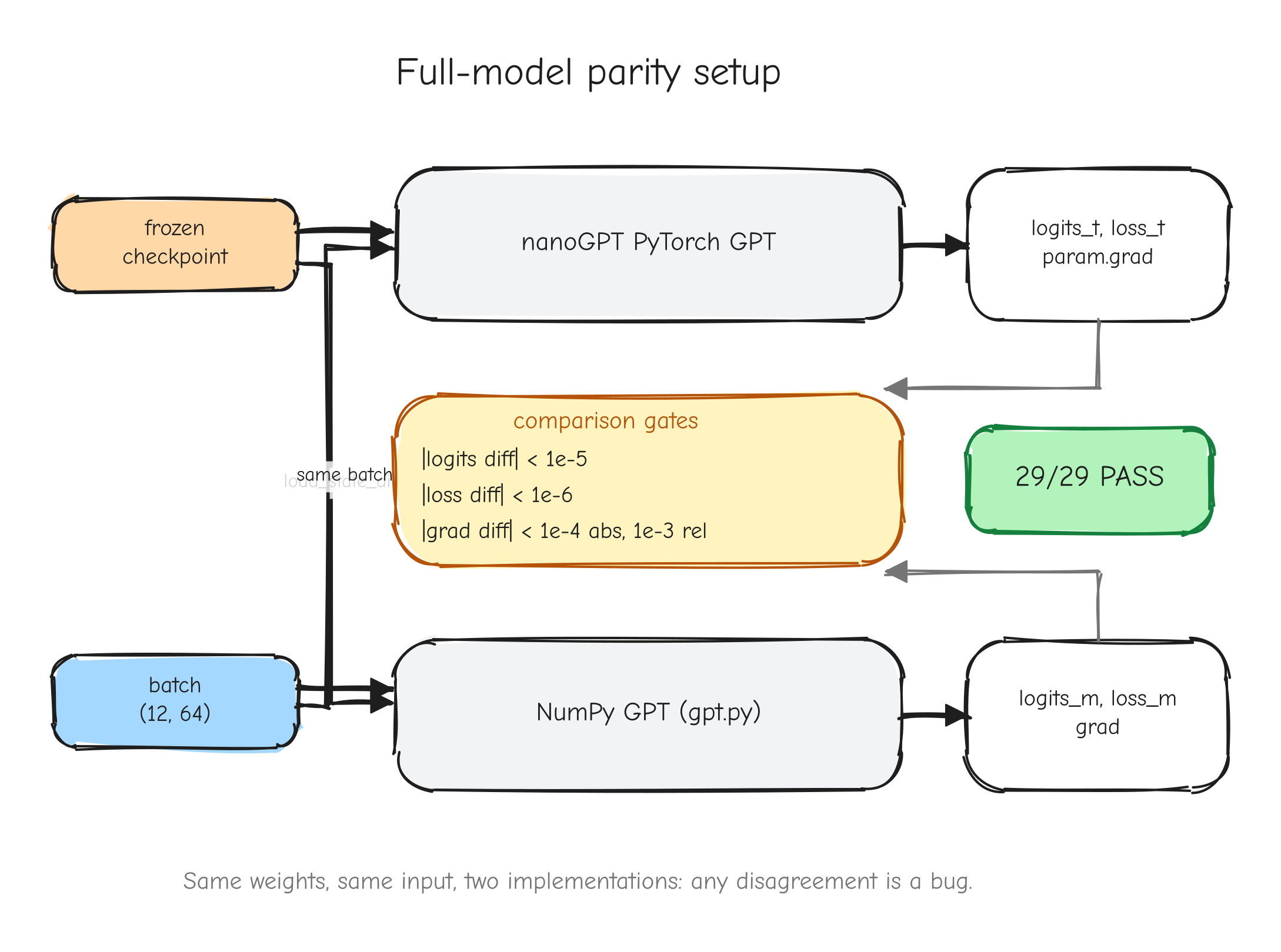

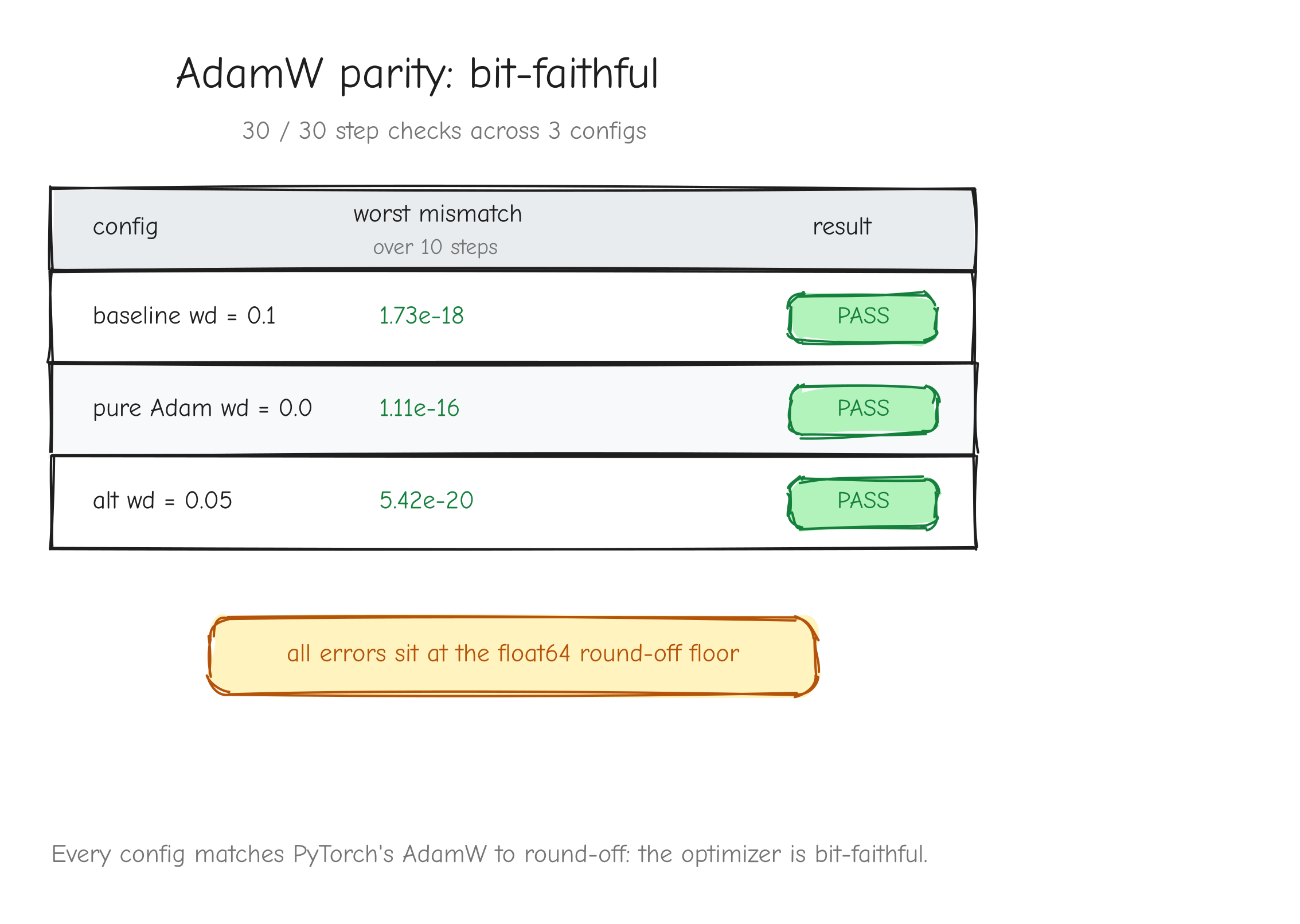

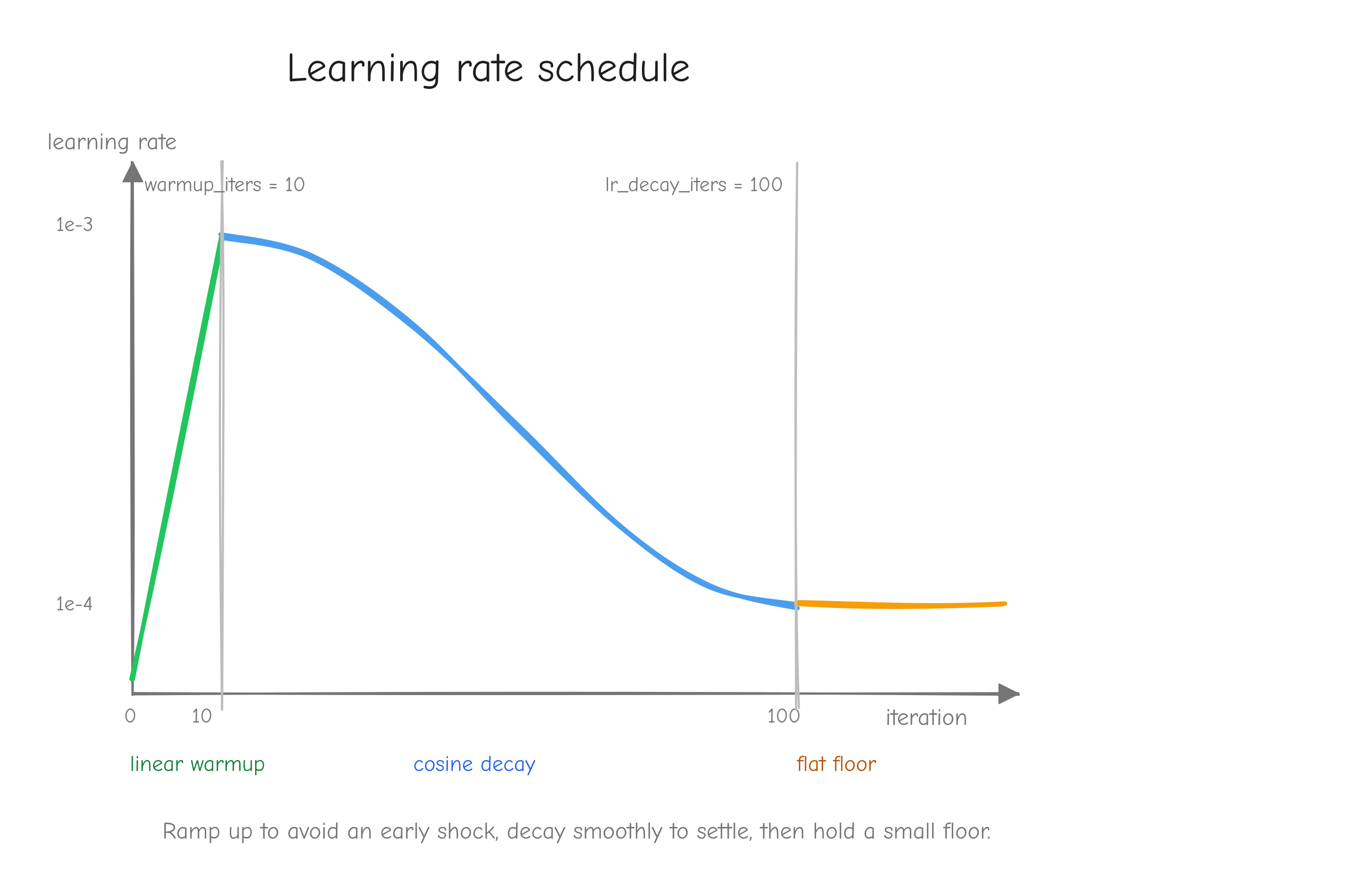

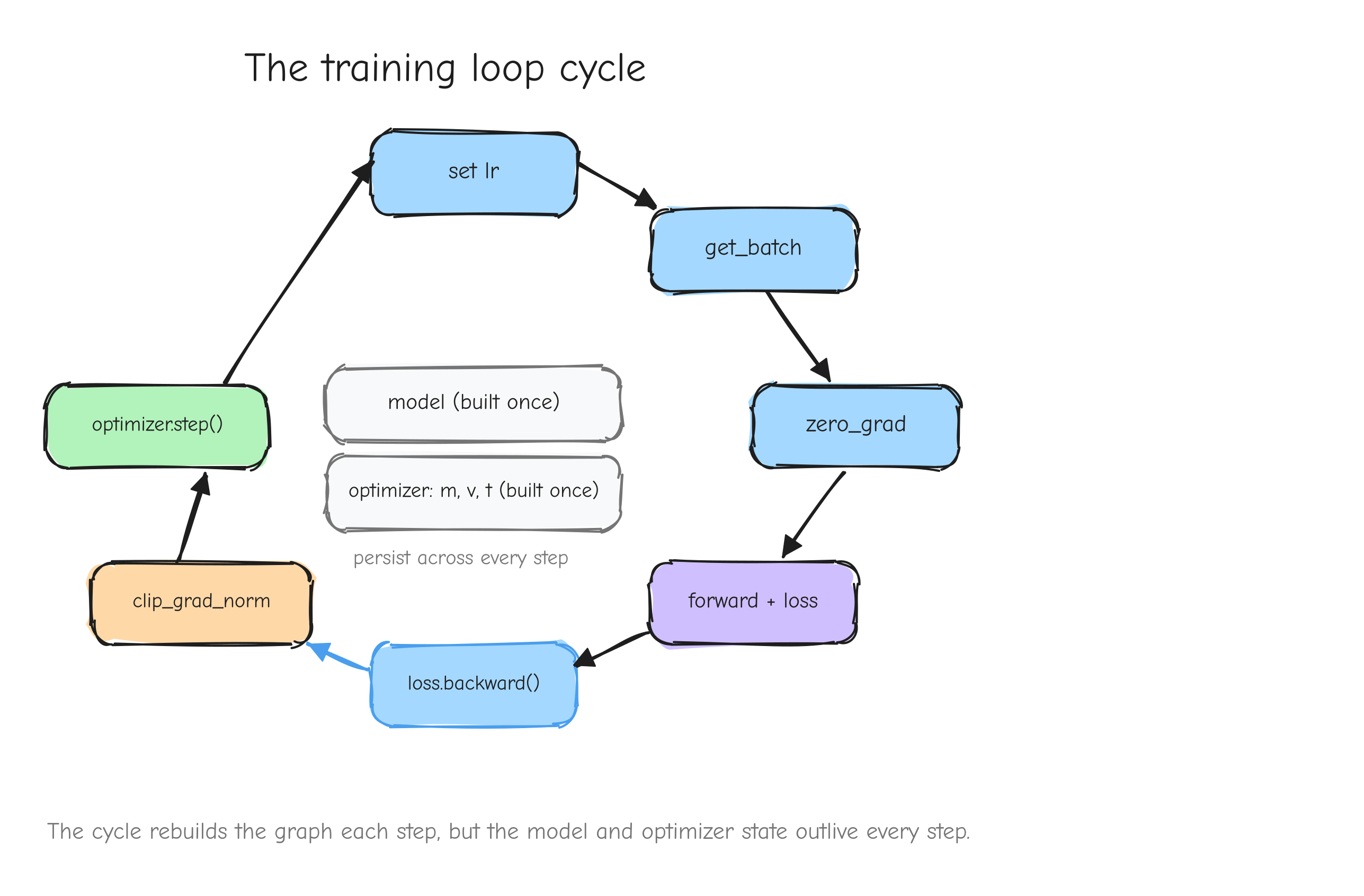

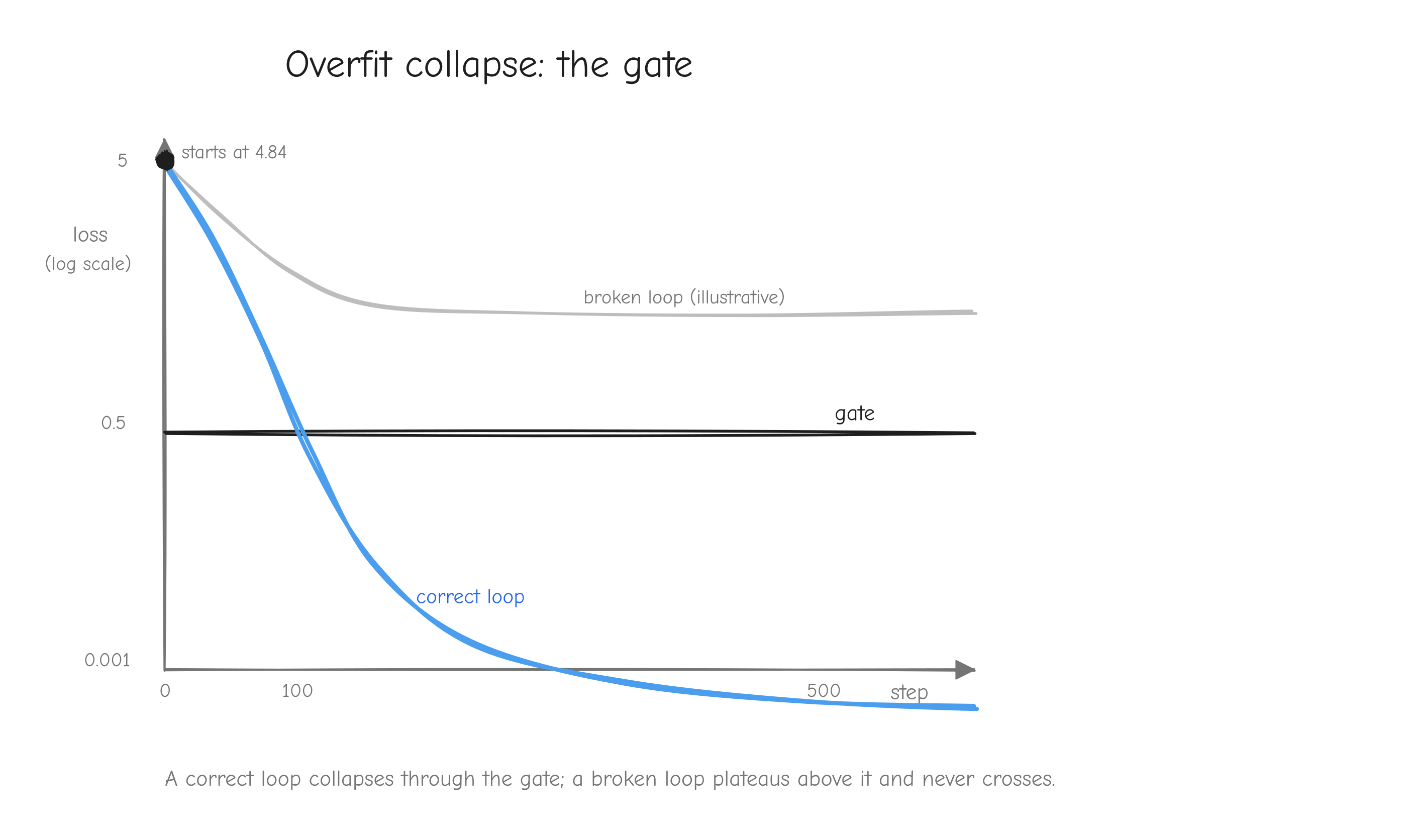

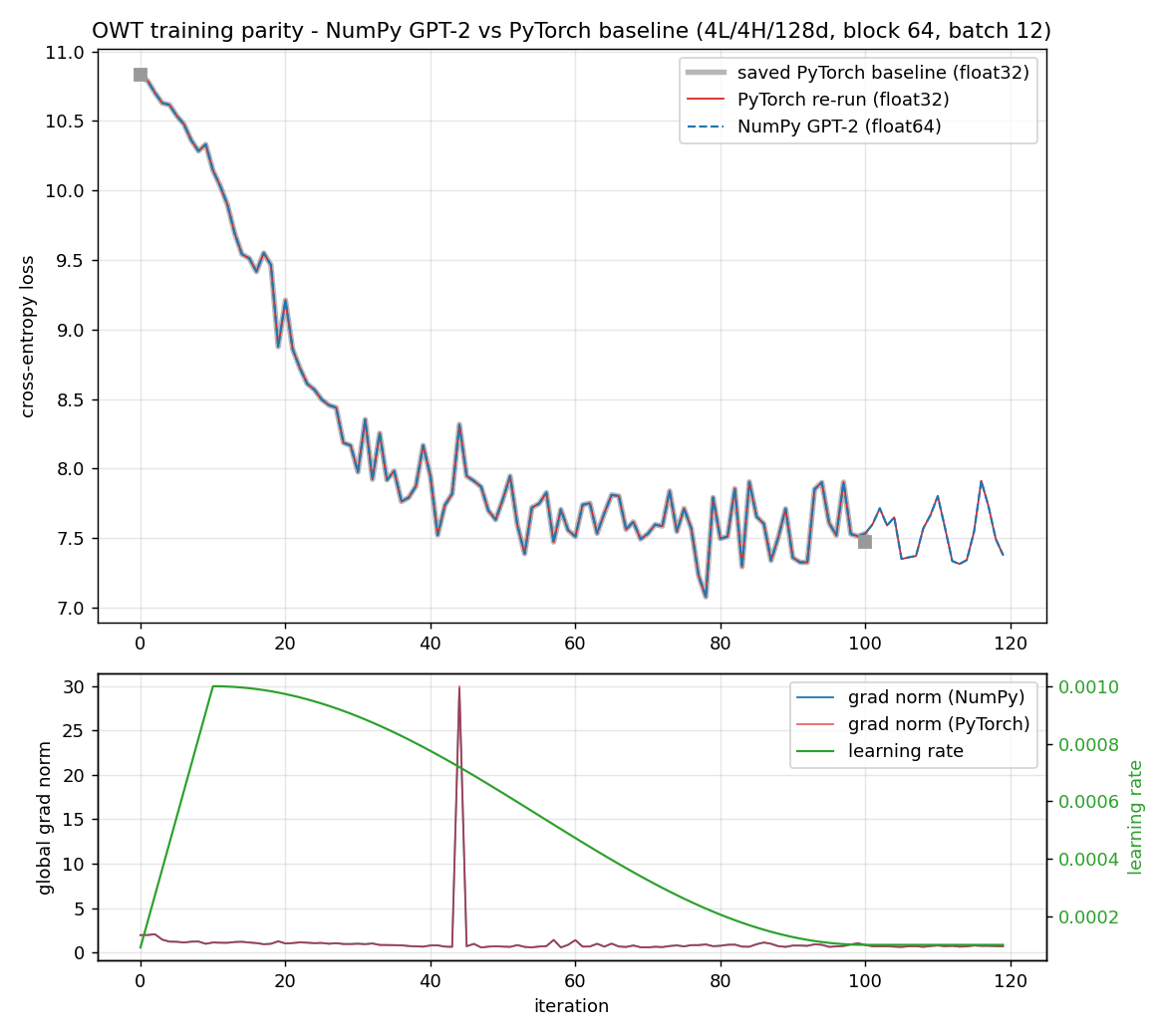

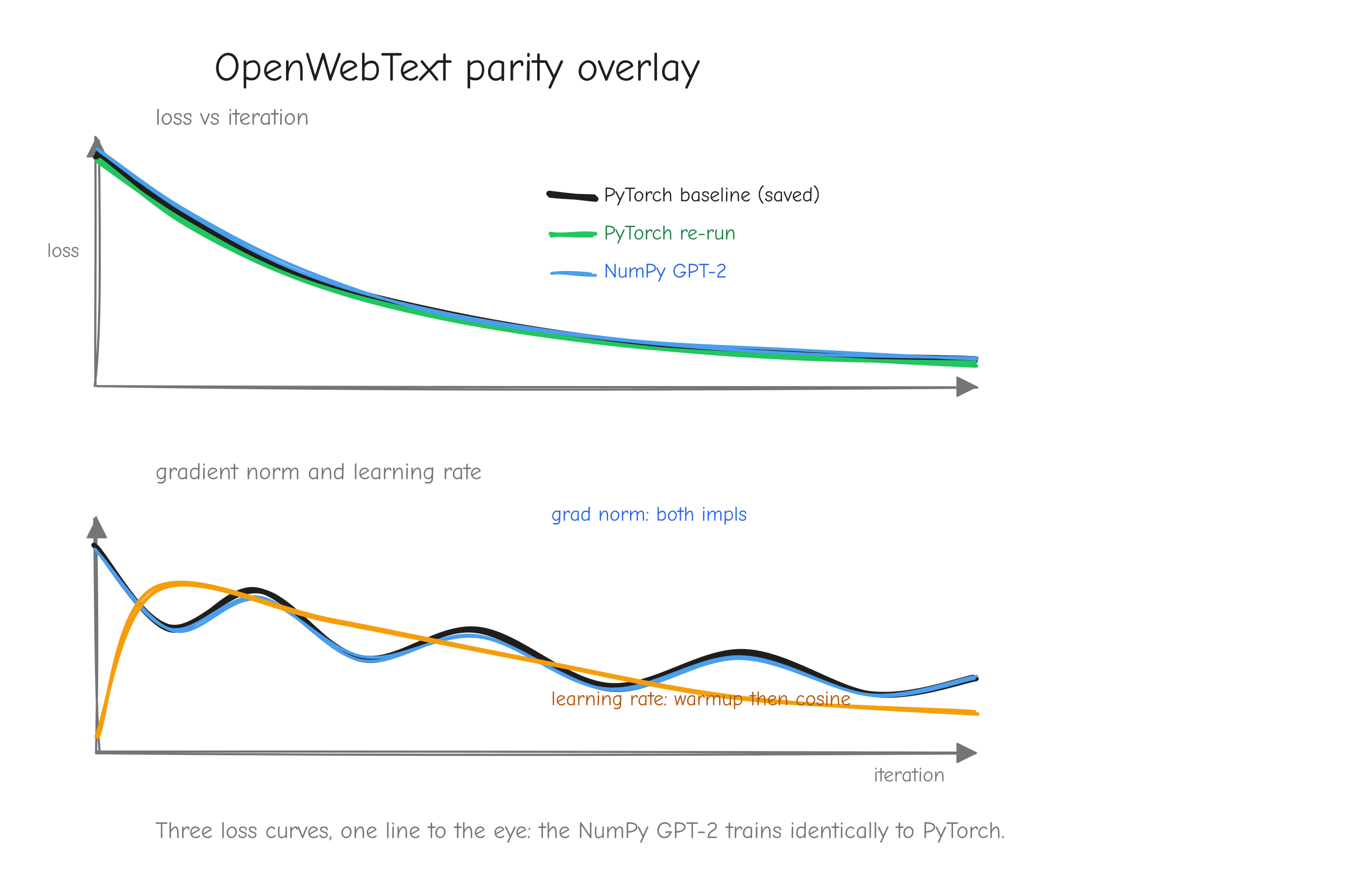

Why this order, and not some other? Because each step has a numerical gate that must pass before the next begins. The derivation is gated by a tiny worked example you carry through by hand. The implementation is gated by the gradient check against finite differences (tests/gradcheck_results.md: 92/92 checks pass; a deliberately buggy version fails loudly with error about 2.49). The assembled layers are gated by parity against PyTorch (tests/layer_parity.md: 45/45 checks match; tests/model_parity.md: forward loss 7.420869 matching PyTorch exactly). Training is gated first by an overfitting test (tests/overfit_results.md: loss driven from 4.8435 to 0.0010 on 16 sequences) and then by step-for-step parity against the reference (tests/owt_parity.md: max loss difference 5.79e-3 over 100 steps).

The payoff of the gates is debugging that stays local. When something fails at step 8, you do not have to suspect steps 1 through 7, because steps 1 through 7 each passed a gate you trust. The bug is in step 8 or its inputs, and that is a small place to search. A project without gates is a project where every failure is a search over the entire codebase.

The reference baseline: nanoGPT

You cannot know your gradients are correct by inspection. A backward pass can be plausible, run without error, produce arrays of the right shape, and still be wrong. You need an external source of truth.

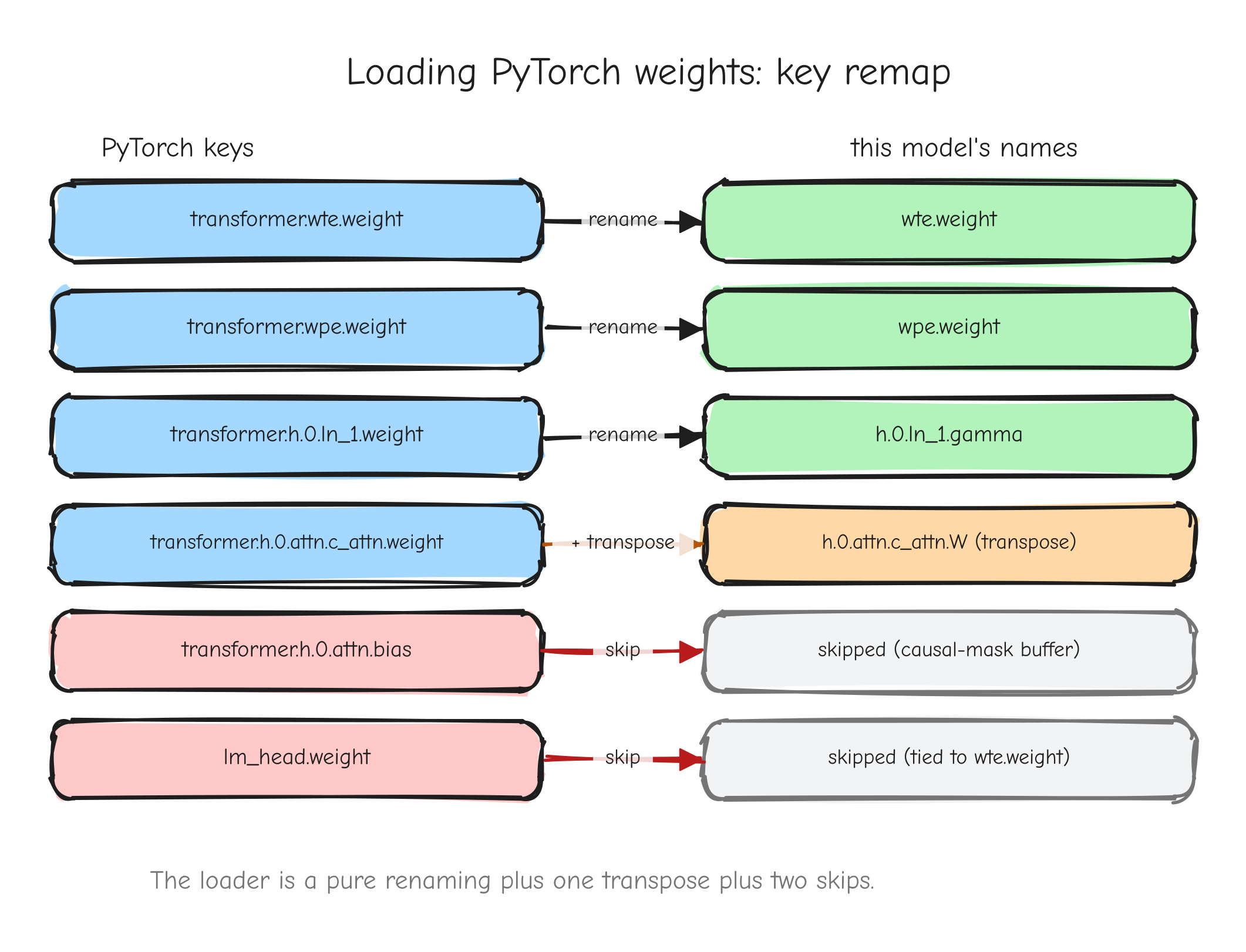

That source is nanoGPT, Andrej Karpathy's compact PyTorch implementation of GPT-2 (nanogpt/). It is a roughly 300-line model and a roughly 300-line training loop, readable end to end, and it can load the real OpenAI GPT-2 weights. We reimplement it in NumPy and check our outputs against it at every step: forward activations, gradients, optimizer updates, and finally the loss curve over a real training run. PyTorch already knows the right answer; we use it as an oracle. When our LayerNorm backward pass produces a gradient, we hand the same inputs to PyTorch's LayerNorm, ask for its gradient, and demand the two agree to a tight tolerance.

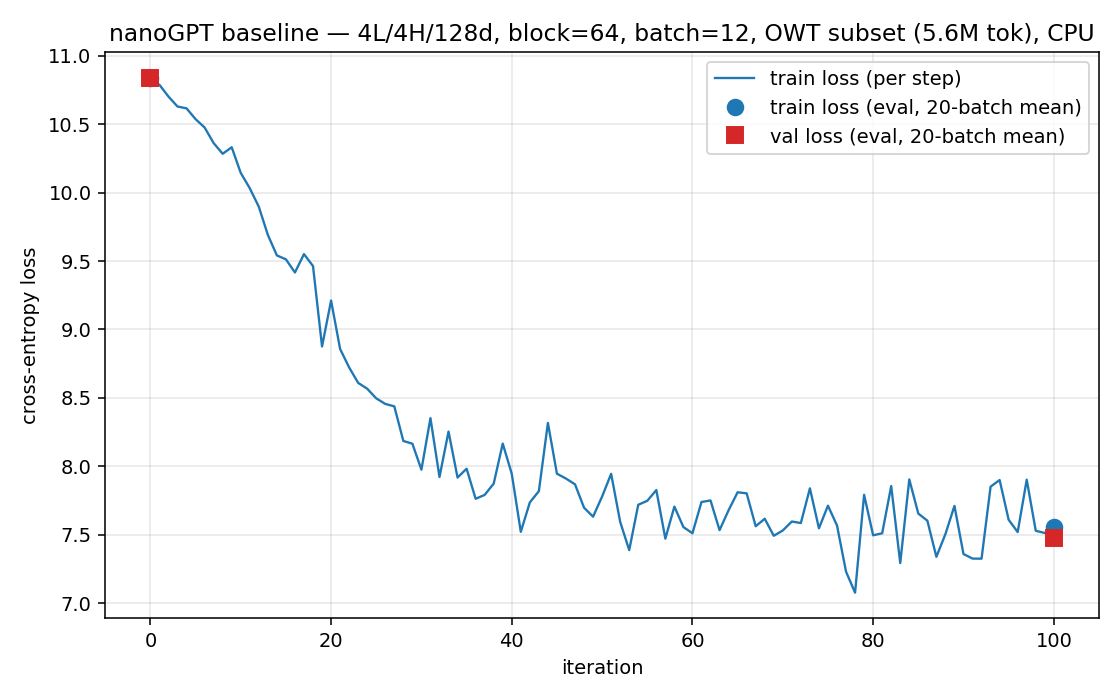

The training configuration we check against is deliberately tiny so it runs on a CPU in minutes: 4 layers, 4 heads, embedding dimension 128, sequence length 64, batch size 12, learning rate 1e-3, 100 steps (nanogpt/config/train_owt_baseline.py). Small enough to iterate on while debugging, complete enough to be a real GPT-2. When our NumPy training run and the PyTorch run agree step for step (step 0 loss 10.8217 on both), we have evidence the whole system is correct, not a hope.

What this course narrates

Most explanations of backpropagation present the finished derivative and move on. This one narrates the work: why a derivation starts where it does, which substitution to reach for and why, where an index notation step is doing real work versus bookkeeping, and what a failed gate actually looks like when you hit it. The dead ends that teach something are kept in. The skill being taught is not "here is the gradient of softmax", it is "here is how you would find the gradient of softmax, or of anything else, on your own". You will see this in the > **Thinking process:** callouts throughout. The formulas you can look up; the reasoning is the part that is hard to transfer and worth your attention.

Roadmap: the 16 parts

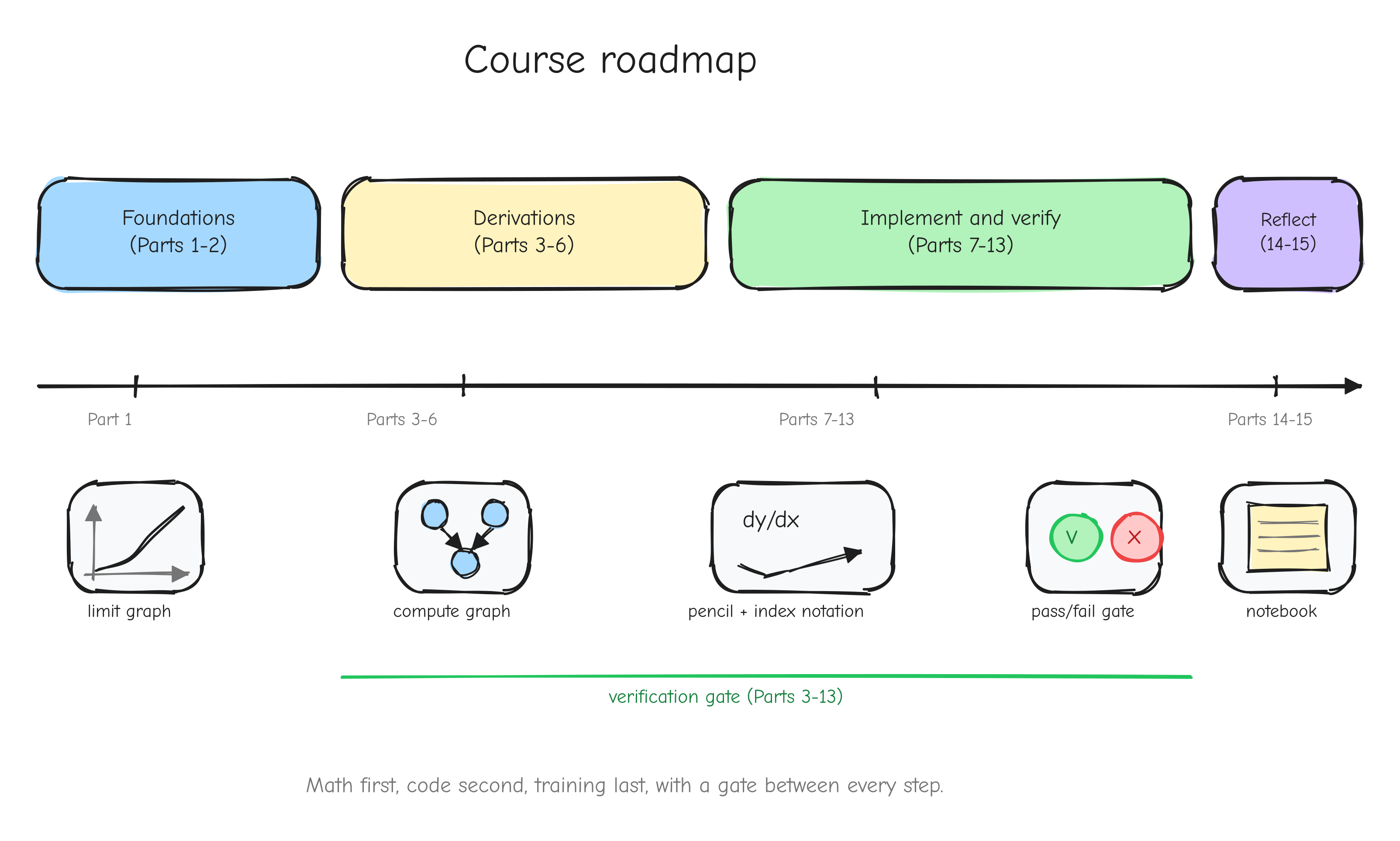

The course is 16 parts in four groups.

Foundations (Parts 1 to 2). Part 1, Mathematical foundations from zero, builds the mathematics: limits, derivatives, the chain rule, partial derivatives, gradients. Part 2, Backpropagation and computational graphs, reframes a neural network as a graph of operations and shows how the chain rule, applied mechanically backward through that graph, is backpropagation.

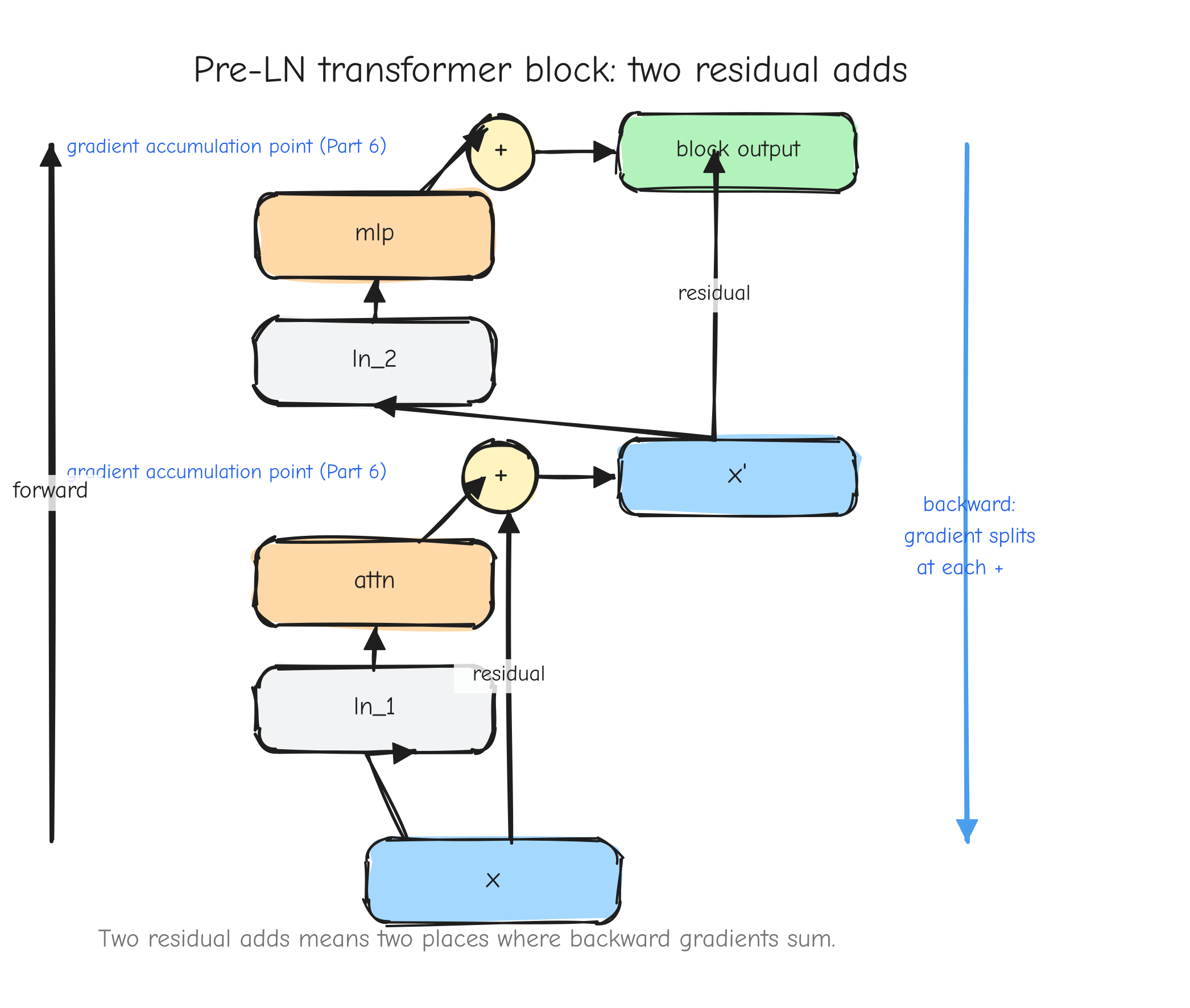

The derivations (Parts 3 to 6). Every operation GPT-2 needs, derived by hand, tiered by difficulty. Part 3 covers warm-ups and linear algebra (add, multiply, transpose, reshape, reductions, ReLU, matmul). Part 4 derives the loss layer (softmax fused with cross-entropy). Part 5 covers normalization, embeddings, and weight tying. Part 6 covers attention and the composition of a full transformer block. These parts use the pen.

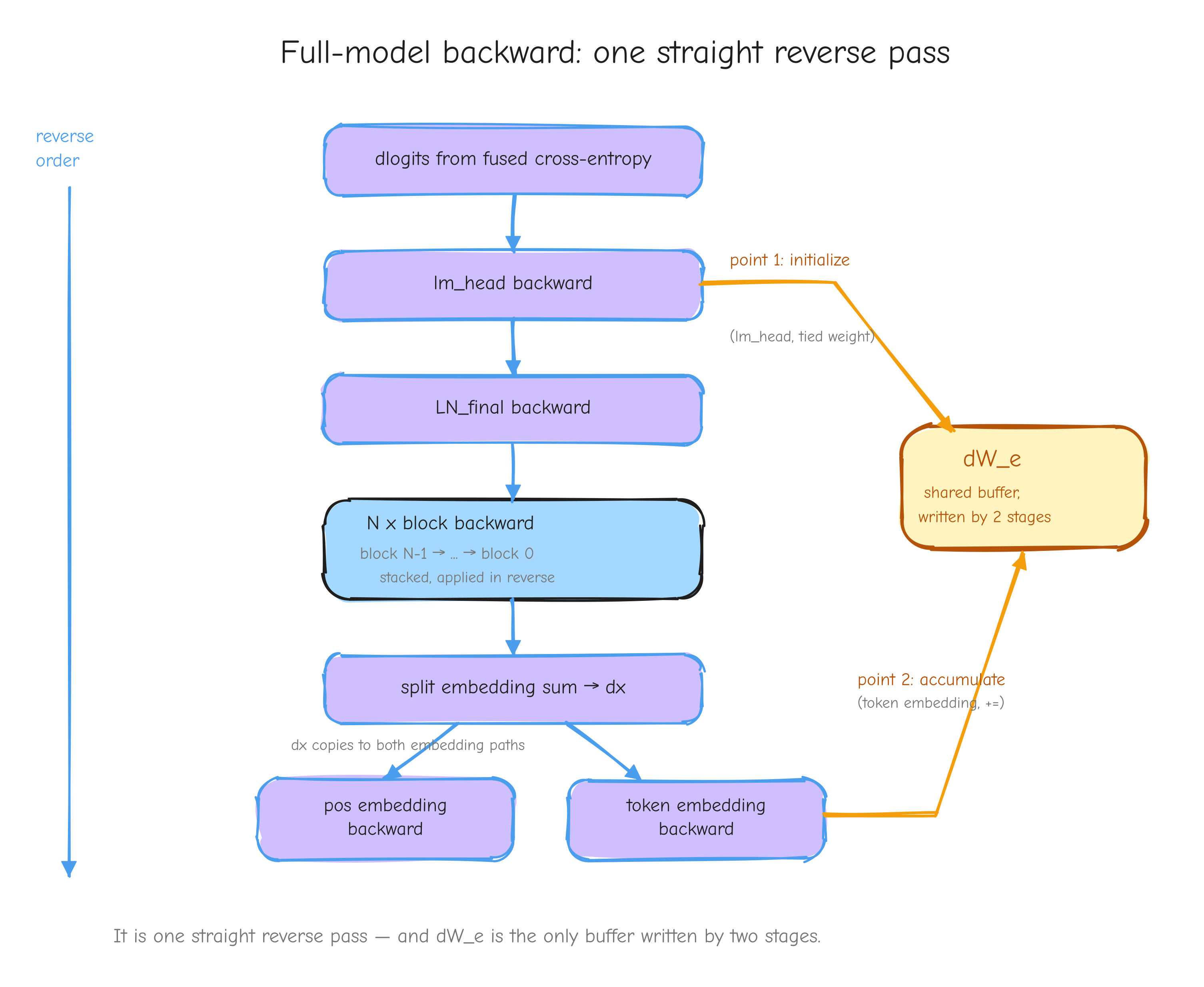

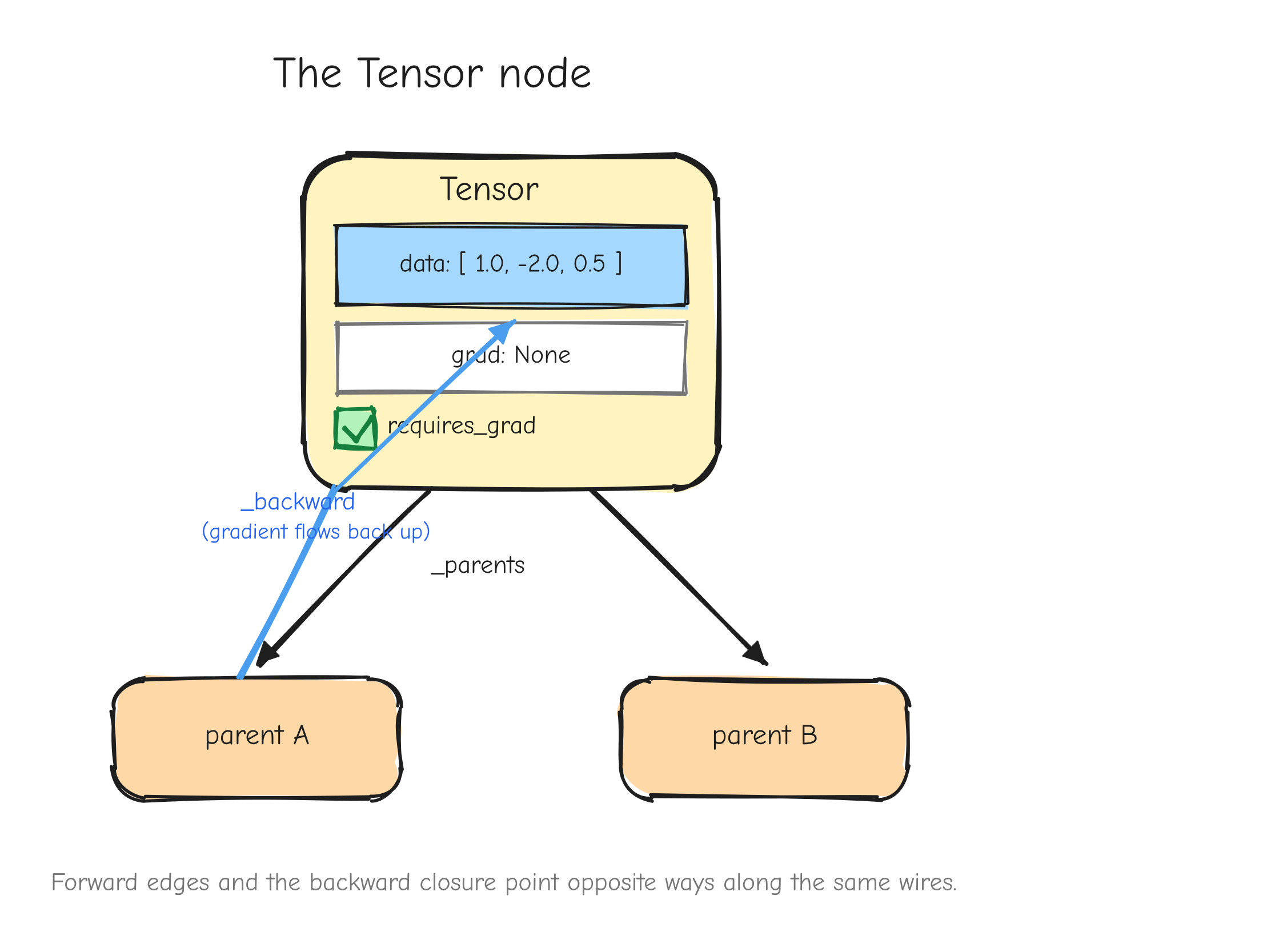

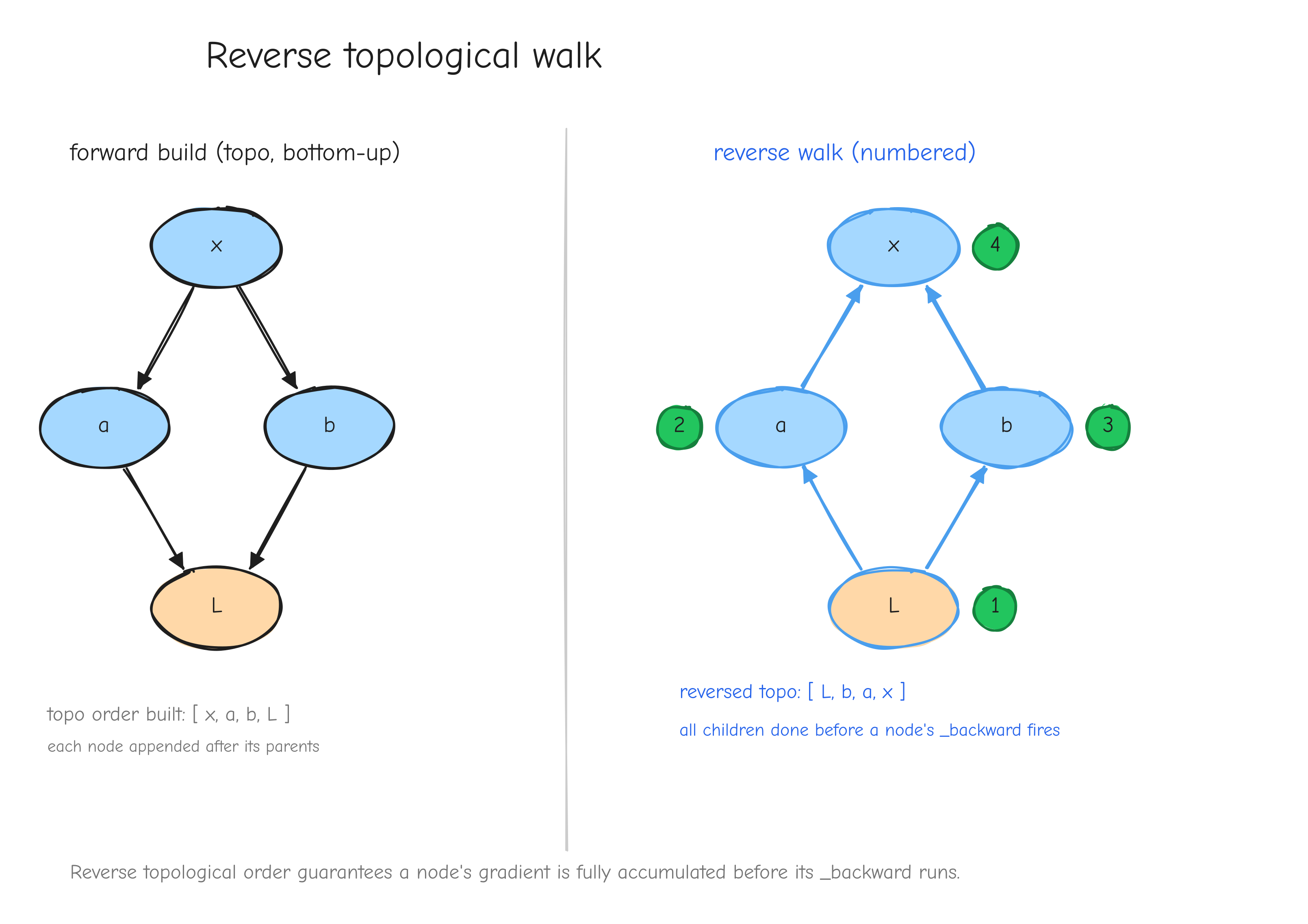

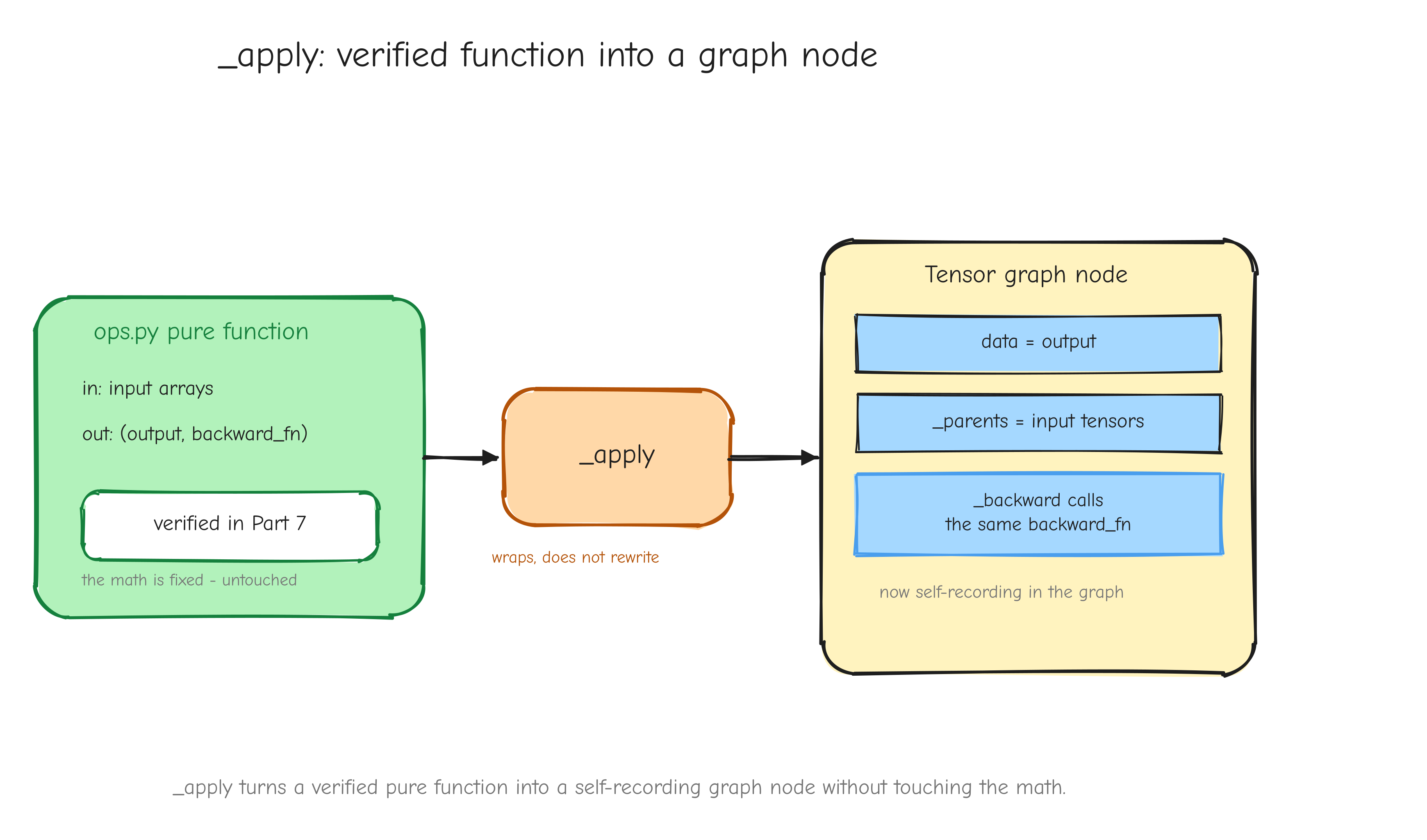

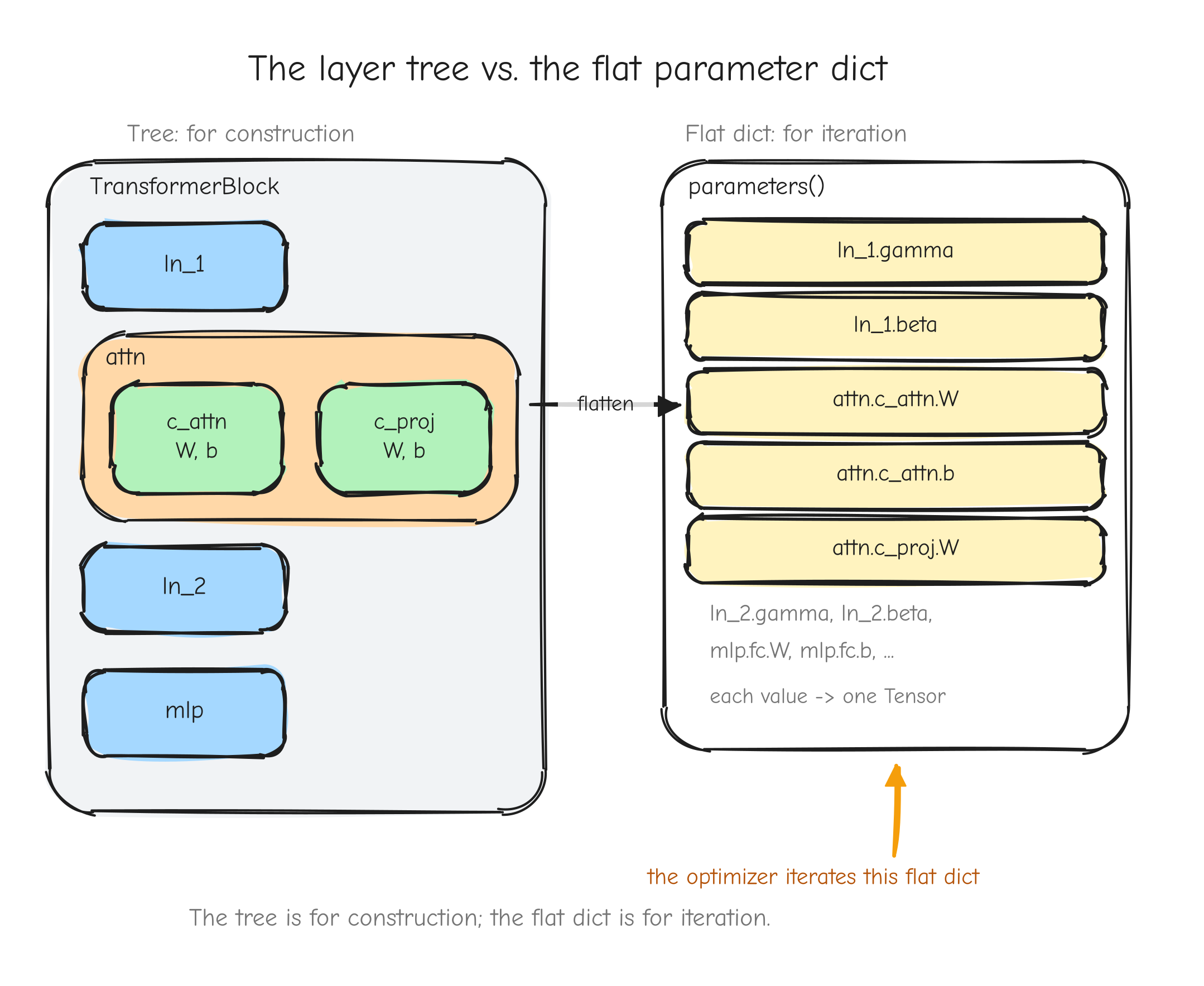

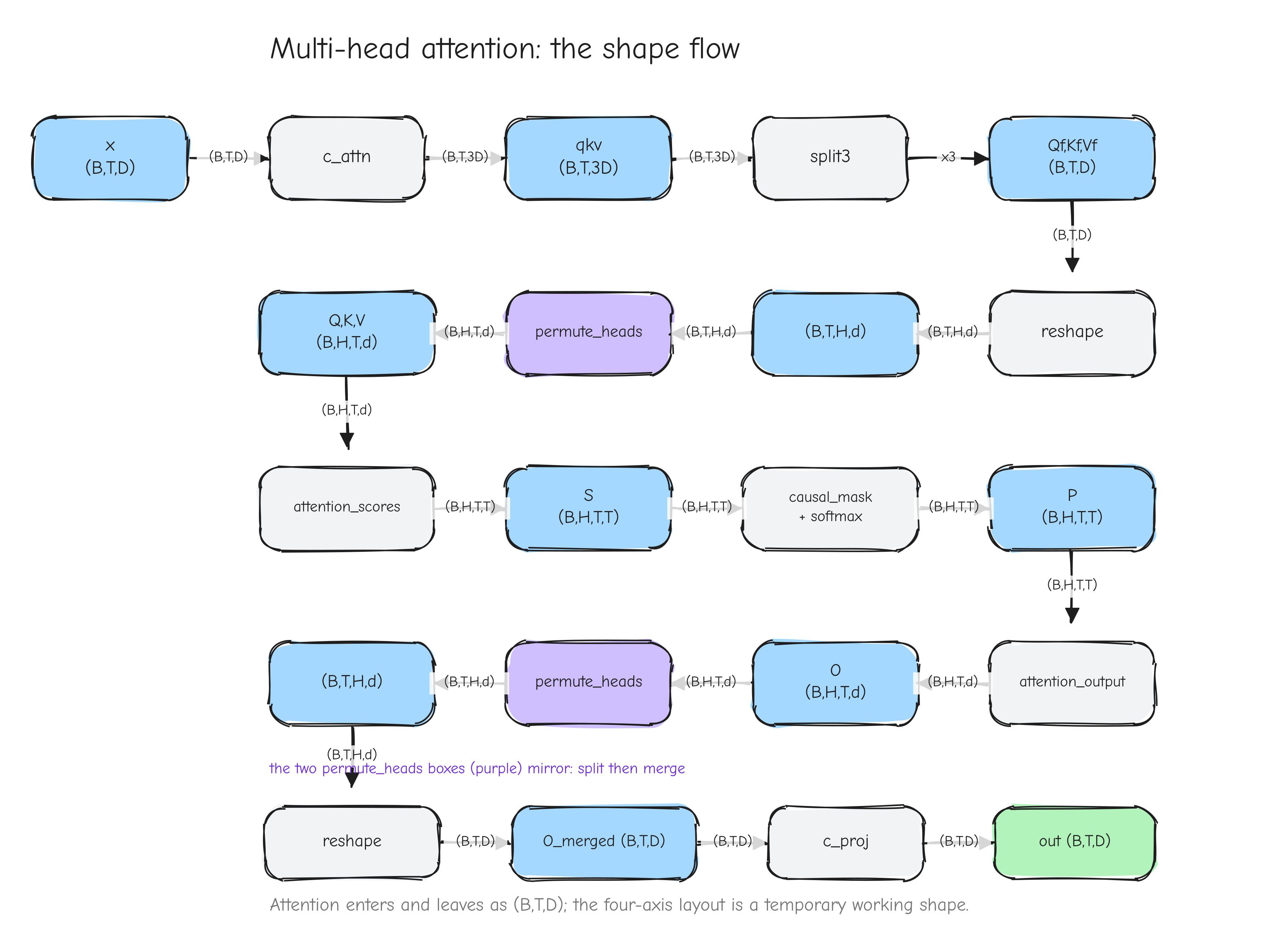

Implement and verify (Parts 7 to 13). Part 7 builds the numerical gradient checker, the gate for everything that follows. Part 8 builds the autograd engine, the Tensor class. Part 9 builds the neural network layers and checks each against PyTorch. Part 10 assembles GPT-2. Part 11 builds the AdamW optimizer. Part 12 builds the training loop and runs it. Part 13 evaluates the trained model and runs the benchmarks.

Reflect (Parts 14 to 15). Part 14, Roadblocks and lessons, is the honest log of what went wrong and what each failure taught. Part 15 is the epilogue.

How to read it

The two movements ask different things of you.

The derivation parts (3 to 6) want paper and a pen. You will not absorb an index-notation derivation by reading it the way you read prose. Work the small examples alongside the text; the course gives you tiny cases (1-D or 2x2) specifically so you can carry them through by hand and confirm you followed. These parts are best read slowly, away from a screen.

The implementation parts (7 to 13) want the repository open in an editor. The code in those parts is copied faithfully from the real files (tensor.py, layers.py, gpt.py, optimizer.py, trainer.py, and the tests). Read it next to the actual source, run it, break it on purpose, and watch the gates catch you.

Parts 0, 1, 2, 14, and 15 want neither; read them straight through. One practical note on length: this is long-form, and it does not rush the hard parts. The math foundations and the deep derivations (LayerNorm, attention) are the longest stretches; the warm-up operations are deliberately short. Slowing down on the hard parts is the correct move, not a failure.

Part 1: Mathematical foundations from zero

Training GPT-2 means adjusting millions of numbers so the model's output gets closer to what we want. To know which direction to adjust each number, we need one idea: the derivative. Everything in this course rests on it. So we build it from the ground up, starting with the question the derivative answers.

You need basic algebra: you can read f(x) = x^2, plug in a number, and solve a simple equation. Nothing else. We go slow on the hard parts and fast on the rest.

Limits: approaching a value

Here is a concrete problem. You drop a ball, and after t seconds it has fallen f(t) = 5t^2 meters. How fast is it moving at exactly t = 2 seconds?

"Speed" is distance divided by time. But at a single instant, no time passes and no distance is covered, so the formula gives 0/0, which is meaningless. We need a different move.

Instead of the speed at t = 2, compute the average speed over a short interval starting at t = 2. From t = 2 to t = 2 + h:

Plug in f(t) = 5t^2. We have . Now shrink h and watch:

h |

f(2+h) |

average speed (f(2+h) - 20) / h |

|---|---|---|

1 |

25 |

|

0.1 |

20.5 |

|

0.01 |

20.05 |

|

0.001 |

20.020005 |

20.005 |

The numbers are closing in on 20. They never reach 20, because reaching it would need h = 0 and the 0/0 problem returns. But they get as close to 20 as we want by making h small enough. That value the numbers approach is the limit.

We write it like this:

Read as "h approaches zero". The whole expression reads: "the value the fraction approaches as h gets arbitrarily close to zero". The limit is 20, so the ball's instantaneous speed at t = 2 is 20 meters per second.

This is the entire reason limits exist for us: they turn "change at an instant", which looks like 0/0, into a real number. With that tool we can define instantaneous change. That is the derivative.

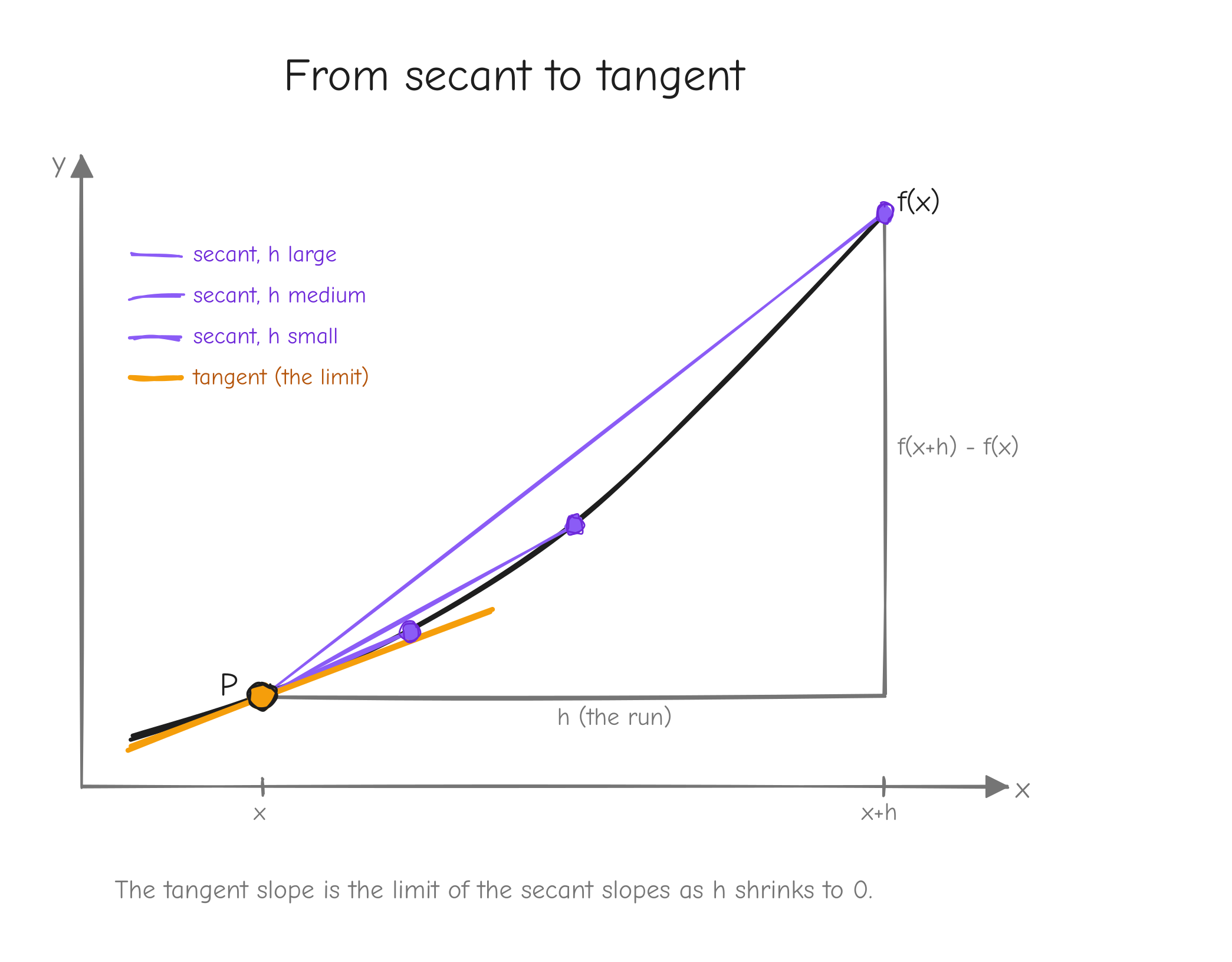

The derivative: the slope of a curve

Plot a function f(x) as a curve. Pick a point on it. The derivative at that point is the slope of the curve there: how steep it is, how fast f rises or falls as x moves.

For a straight line, slope is easy: pick two points, take rise / run. For a curve, the slope changes from place to place, so "the slope" needs care.

Start with two points on the curve: (x, f(x)) and a second point h to the right, (x+h, f(x+h)). The straight line through these two points is the secant line. Its slope is rise / run:

This is exactly the average-speed fraction from the last section, now read geometrically. As h shrinks, the second point slides toward the first along the curve, and the secant line pivots. In the limit, the two points merge and the secant becomes the tangent line: the straight line that just grazes the curve at that single point. Its slope is the derivative.

f(x) with a fixed point P marked. Show three secant lines through P and a second point, for h large, medium, small, each secant flatter against the curve than the last. Then the tangent line at P as the limit. Label the run h and the rise f(x+h) - f(x) on the largest secant. Takeaway: the tangent slope is the limit of secant slopes as .The formal definition:

f'(x), read "f prime of x", is the derivative of f. It is itself a function: feed it an x, get back the slope of f at that x. The other common notation is , read "d f d x", which you can think of as "a tiny change in f divided by the tiny change in x that caused it". The two notations mean the same thing.

Working f(x) = x^2 by hand

Let us derive f'(x) for f(x) = x^2 straight from the definition, with nothing skipped.

Start by writing the fraction:

Expand (x+h)^2. That is (x+h)(x+h) = x^2 + 2xh + h^2. Substitute:

The x^2 and -x^2 cancel:

Both terms in the numerator have a factor of h. Divide it out:

This is the key step. Before dividing, plugging in h = 0 gave 0/0. After dividing, the expression is 2x + h, and now h = 0 is harmless. Take the limit:

So the derivative of x^2 is 2x. Check it against the ball: f(t) = 5t^2 has derivative , and at t = 2 that is 20, exactly the limit we computed numerically.

Differentiation rules

Deriving every function from the definition is slow. A handful of rules cover everything we need. Each one can be proven from the definition; we state the rule, say why in one line, and work one example.

Constant rule. If f(x) = c for a constant c, then f'(x) = 0. A constant function is a flat horizontal line, and a flat line has slope zero. Example: f(x) = 7, f'(x) = 0.

Power rule. If f(x) = x^n, then f'(x) = n x^{n-1}. We saw the pattern for n = 2: x^2 gave 2x^1. The same cancellation works for any n. Example: f(x) = x^3, f'(x) = 3x^2. Example: f(x) = x = x^1, (a line of slope 1).

Sum rule. If f(x) = g(x) + h(x), then f'(x) = g'(x) + h'(x). The slope of a sum is the sum of the slopes; the limit of a sum splits into a sum of limits. Example: f(x) = x^2 + x^3, f'(x) = 2x + 3x^2. A constant multiple comes along for free: , so f(x) = 5x^2 has f'(x) = 10x.

Product rule. If , then f'(x) = g'(x) h(x) + g(x) h'(x). It is not g'(x) h'(x). Reason: when both factors change at once, f changes from each factor moving while the other holds, and you add the two contributions. Example: f(x) = x^2 (x^3) . Then g = x^2, h = x^3, so f'(x) = (2x)(x^3) + (x^2)(3x^2) = 2x^4 + 3x^4 = 5x^4. Check: , and the power rule gives 5x^4. They agree.

Quotient rule. If f(x) = g(x) / h(x), then

It follows from the product rule applied to . The minus sign and the squared denominator are the parts people forget. Example: . Here g = x^2, g' = 2x, h = x+1, h' = 1:

These five rules plus the chain rule (next) let us differentiate every function in GPT-2.

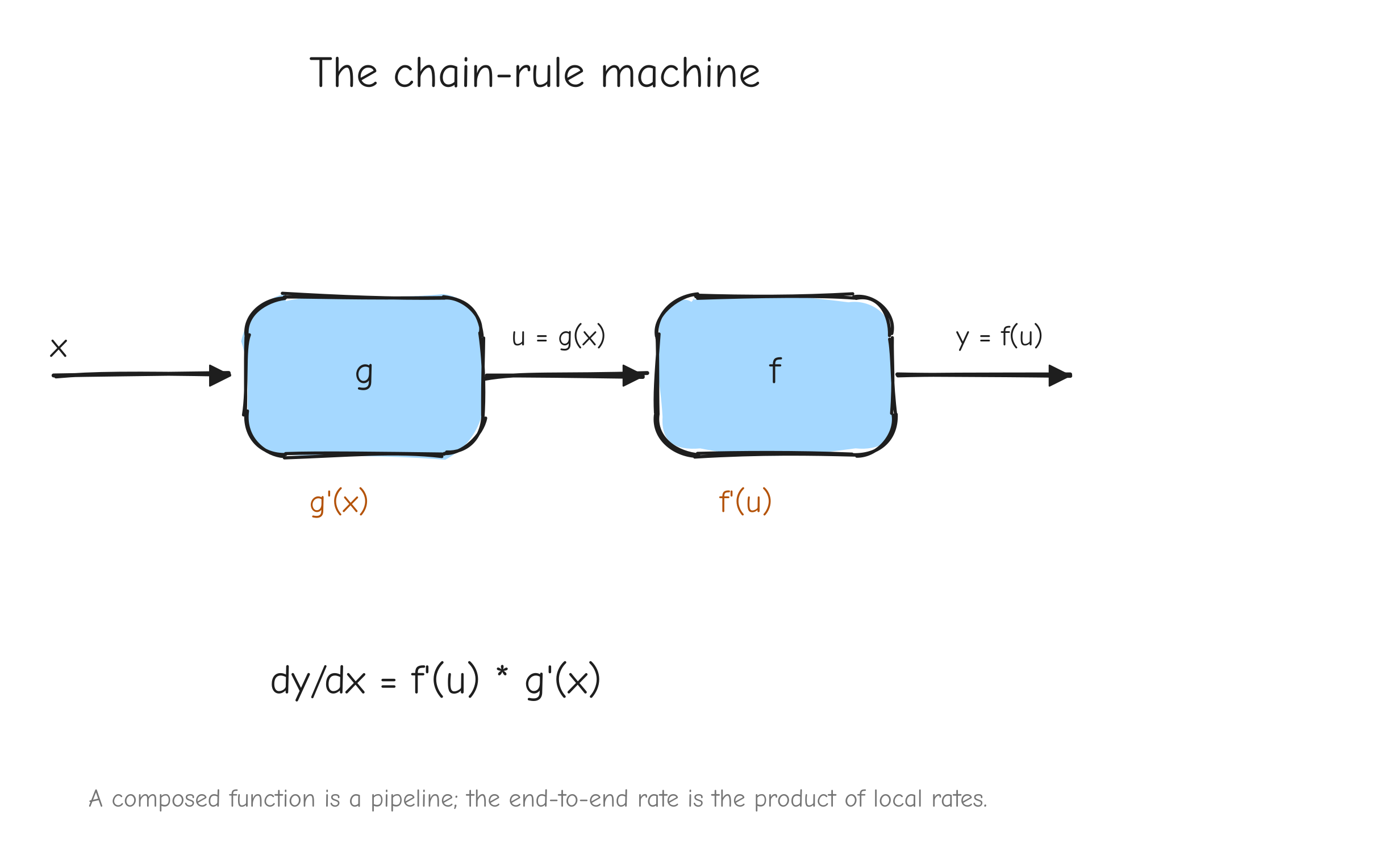

The chain rule

This is the most important section in this part. Backpropagation, the algorithm that trains every neural network, is the chain rule applied at scale. If you understand this section, the rest of the course is mechanics.

A composed function is a function inside a function. Write f(g(x)): take x, apply g to get g(x), then apply f to that. Example: f(u) = u^2 and g(x) = x + 1. Then f(g(x)) = (x+1)^2. The inner function runs first, the outer function runs on its result.

The chain rule says how to differentiate the whole thing:

means "f composed with g", the composed function. Read the right side as: the derivative of the outer function, evaluated at the inner value, times the derivative of the inner function.

Why rates multiply

Think of two connected gears, or a chain of cause and effect. Suppose g turns x into u, and f turns u into y.

g'(x)says: whenxmoves by a tiny amount,umovesg'(x)times as much.f'(u)says: whenumoves by a tiny amount,ymovesf'(u)times as much.

Now nudge x. It pushes u by g'(x) times the nudge. That movement in u pushes y by f'(u) times that. The two factors multiply: y moves by times the original nudge. That product is the derivative of the whole chain. Rates of change compose by multiplication.

x enters box g, output u = g(x) enters box f, output y = f(u) exits. Under each box write its local rate: under g write g'(x), under f write f'(u). Below the whole pipeline write the total rate . Takeaway: a composed function is a pipeline, and the end-to-end rate is the product of the local rates along it.Worked examples

Example 1. f(g(x)) = (x+1)^2. Outer f(u) = u^2, so f'(u) = 2u. Inner g(x) = x+1, so g'(x) = 1. The chain rule:

Check by expanding first: (x+1)^2 = x^2 + 2x + 1, derivative 2x + 2 = 2(x+1). Agrees.

Example 2. f(g(x)) = (3x^2 + 1)^5. Outer f(u) = u^5, f'(u) = 5u^4. Inner g(x) = 3x^2 + 1, g'(x) = 6x. Then:

Expanding (3x^2+1)^5 by hand would be miserable. The chain rule makes it routine. That is the point.

Example 3: a 3-deep composition. Let y = h(g(f(x))) with three layers:

- innermost

f(x) = x^2, sof'(x) = 2x - middle

g(u) = u + 1, sog'(u) = 1 - outer

h(v) = v^3, soh'(v) = 3v^2

So y = (x^2 + 1)^3. The chain rule extends by multiplying one factor per layer, each evaluated at the value flowing into that layer:

Evaluate at a concrete x = 2. Compute the forward values first, inside out:

f(2) = 4g(4) = 5h(5) = 125, soy = 125

Now the three derivative factors, each at its own input:

g'(f(x)) = g'(4) = 1

Multiply: at x = 2.

A neural network is a deeply nested composition: each layer is a function, and the network applies dozens of them in sequence, with the loss L as the final outer function. Training means computing the derivative of L with respect to every internal number, and that is the chain rule applied thousands of times, one factor per operation. There is no other trick. The whole machine is this section, scaled up and organized.

Functions of many variables and partial derivatives

So far f took one input. The functions in GPT-2 take many: a layer's output depends on its input and all its weights. We need derivatives for that case.

A function of two variables, f(x, y), takes a pair and returns a number. Picture it as a landscape: x and y are east and north on a map, and f(x, y) is the height of the ground there.

Standing on that landscape, "the slope" is ambiguous: it depends which way you face. So we ask a sharper question: what is the slope if I walk due east, in the x direction only, holding y fixed? That is the partial derivative of f with respect to x, written . The symbol (a rounded d) signals "partial: other variables held fixed".

Computing it is no new skill. Treat every variable except x as a constant, and differentiate normally.

Worked example. Let f(x, y) = x^2 y + 3y.

For , treat y as a constant. The term x^2 y is "a constant times x^2", with derivative . The term 3y has no x in it, so it is a constant and its derivative is 0:

For , treat x as a constant. The term x^2 y is "a constant times y", derivative x^2. The term 3y has derivative 3:

One function, two partial derivatives, one per input direction. A function of n variables has n partial derivatives.

The gradient

Collect all the partial derivatives of f into a single vector. That vector is the gradient, written (the symbol is "nabla").

For f(x, y) = x^2 y + 3y from above:

At the point (x, y) = (1, 2), the gradient is .

The gradient is not just a bag of numbers. It has a geometric meaning: the gradient points in the direction of steepest ascent. On the landscape picture, stand at a point, compute the gradient, and it points straight uphill, the steepest possible way up. Its length tells you how steep that climb is.

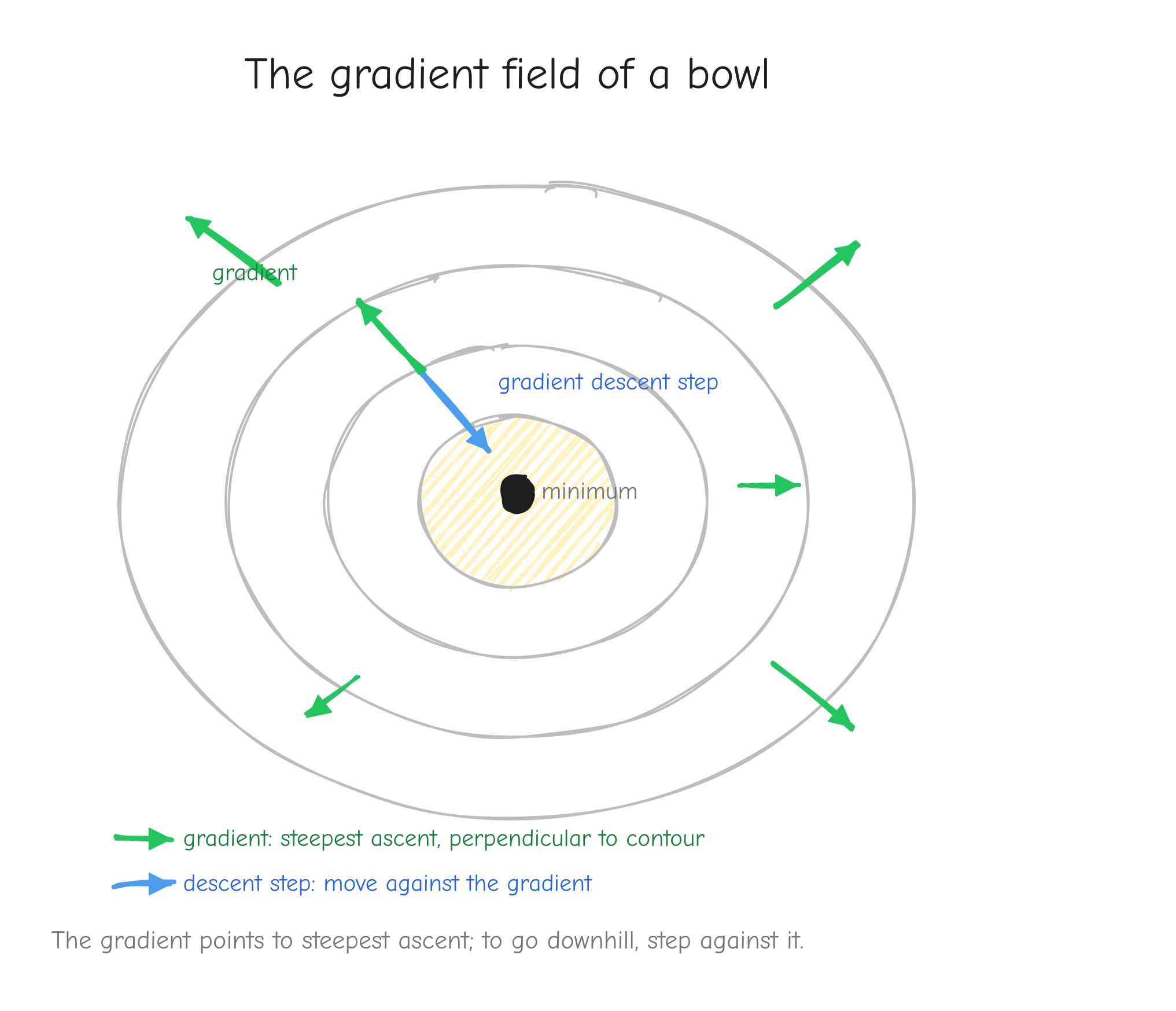

f(x, y), contour lines like a topographic map. At several points draw the gradient as an arrow. Every arrow points "uphill", perpendicular to the contour line through that point, longer where contours are tight (steep) and shorter where they are loose (flat). Add one arrow pointing the opposite way, labeled "gradient descent step". Takeaway: the gradient points to steepest ascent; to go downhill, step against it.This is the bridge to training. We will have a loss L, a single number that measures how wrong the model is, depending on millions of parameters. We want L small. The gradient of L points in the direction that increases L the fastest, so the opposite direction decreases it the fastest. Gradient descent is the rule: compute the gradient, take a small step in the negative gradient direction, repeat. Each step nudges every parameter a little, and L drops. That loop, run thousands of times, is training. Everything else in this course exists to compute that gradient correctly and quickly.

Vectors, matrices, tensors

We just used vectors informally. Pin down the objects we compute with, because GPT-2 is built from them.

A vector is an ordered list of numbers: [2, 0, -1] is a vector of length 3. Geometrically it is a point in space, or an arrow from the origin to that point.

A matrix is a rectangular grid of numbers, arranged in rows and columns. This one has 2 rows and 3 columns:

We say its shape is (2, 3). The entry in row i, column j is written with two indices, like A_{ij}.

A tensor is the general term for an n-dimensional array of numbers. A single number is a 0-D tensor (a scalar). A vector is a 1-D tensor. A matrix is a 2-D tensor. A 3-D tensor is a stack of matrices, and you keep going. In NumPy every one of these is an ndarray, and its .shape is a tuple of sizes, one per dimension: a scalar has shape (), a length-3 vector has shape (3,), the matrix above has shape (2, 3).

Shapes matter constantly in this course. GPT-2 processes a batch of B sequences, each T tokens long, each token represented by a D-dimensional vector. That activation is a 3-D tensor of shape (B, T, D). Most bugs in a from-scratch implementation are shape bugs, so we track shapes on every line. The notation table in the style guide fixes what B, T, D, and the rest mean for the whole course.

The Jacobian

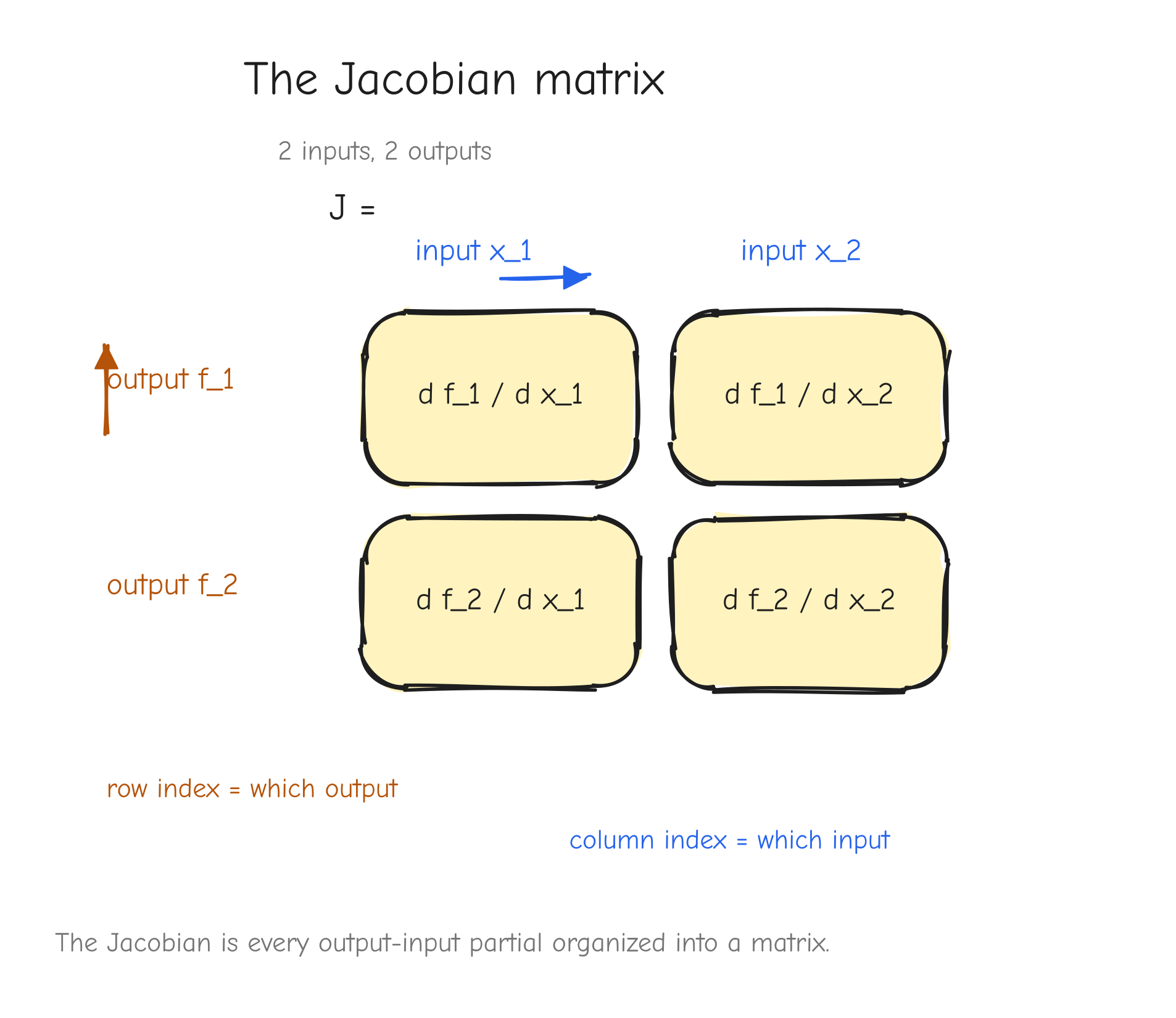

A function can take a vector in and return a vector out. Say f takes a 3-vector and returns a 2-vector. Then f is really 2 output functions, each depending on all 3 inputs. Each output has a partial derivative with respect to each input, so there are partial derivatives. Arrange them in a grid: that grid is the Jacobian matrix.

The convention: the Jacobian J of f has one row per output and one column per input. Entry J_{ij} is the partial derivative of output i with respect to input j:

Concrete example. Let f take (x_1, x_2) and return two outputs:

Compute all four partials:

Arrange them, output index picks the row, input index picks the column:

At the point (x_1, x_2) = (1, 2) this is the concrete matrix .

f_1" and "output f_2", the two columns "input x_1" and "input x_2". Fill each cell with its partial . Draw an arrow showing "row index = which output, column index = which input". Takeaway: the Jacobian is just every output-input partial, organized into a matrix.The Jacobian is the chain rule for vector functions: if you compose vector-to-vector functions, you multiply their Jacobian matrices, the same way you multiplied scalar derivatives in the chain rule section. That is the general rule. But for our purposes, building it explicitly is a trap, and the next section says why.

The vector-Jacobian product (VJP)

Here is the problem with Jacobians at the scale of GPT-2.

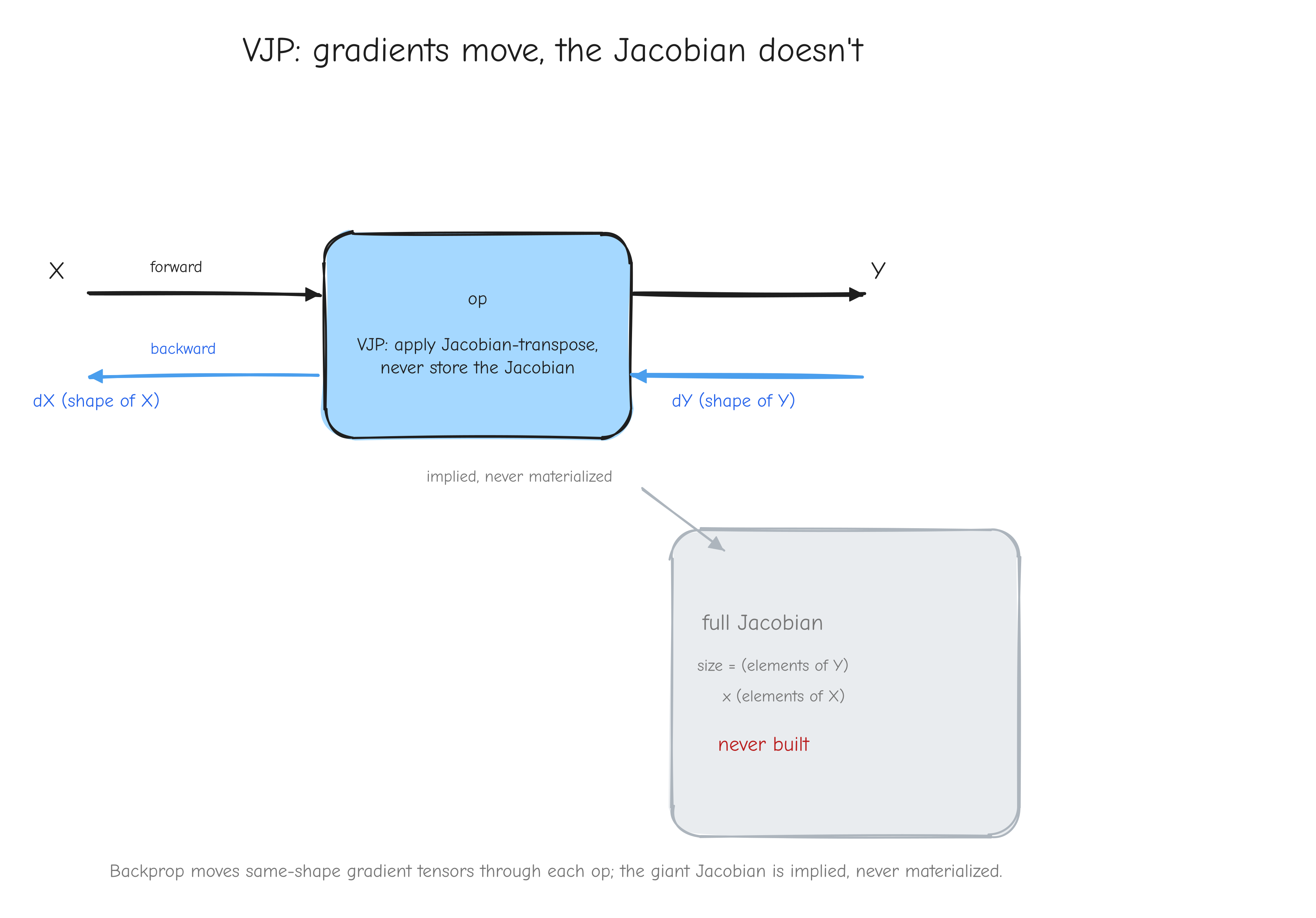

A single layer might map an activation of shape (B, T, D) to another of shape (B, T, D). With numbers on each side, the full Jacobian has entries. For modest sizes that is billions of numbers for one layer. We cannot store it, and we do not need to.

The escape comes from a fact about our setup: the very last function in the network is the loss L, and L is a single scalar. We never want the Jacobian of an intermediate layer by itself. We always want the derivative of the final scalar L with respect to some intermediate tensor.

Follow the chain rule. Let an operation take input X and produce output Y, and let L depend on Y somehow. The chain rule for the derivative of L with respect to X is:

The second factor, , is the operation's Jacobian, the huge object. But look at the first factor: is the derivative of a scalar with respect to Y, so it is just a tensor the same shape as Y, not a giant matrix. And the product we want, , is the derivative of a scalar with respect to X, so it is a tensor the same shape as X.

So the operation never needs to hand us its full Jacobian. It only needs to answer one question: "given a vector the shape of my output, produce the corresponding vector the shape of my input." That operation, vector in times Jacobian, scalar-gradient-out, is the vector-Jacobian product, the VJP. It computes from directly, without ever forming as a stored matrix.

This is the shape of backpropagation. We fix the convention now and use it for the rest of the course (it matches the style guide notation table):

Lis the scalar loss.- For any tensor

Xin the computation,dXmeans , the gradient of the loss with respect toX.dXalways has exactly the same shape asX. - An operation receives

dY, the gradient with respect to its output, called the upstream gradient. It returnsdX(anddW,db, and so on for any other inputs), the gradients with respect to its inputs. - The operation's job in the backward pass is exactly the VJP: turn

dYintodX, using the math of that specific operation, never building the Jacobian.

X on the left and output Y on the right (forward pass, arrow pointing right). Below it, the backward pass, arrow pointing left: dY (same shape as Y) enters from the right, dX (same shape as X) exits to the left. Inside the box write "VJP: apply Jacobian-transpose, never store the Jacobian". Off to the side, draw the full Jacobian as a huge greyed-out square labeled "size = (elements of Y) x (elements of X), never built". Takeaway: backprop moves same-shape gradient tensors through each op; the giant Jacobian is implied, never materialized.Every operation we derive in Parts 3 through 6 follows this template: given the forward equation and given dY, derive the rule that produces dX with the right shape. Part 2 turns that template into the autograd algorithm, and Part 8 turns it into running NumPy code.

Your turn

Part 2: Backpropagation and computational graphs

Part 1 gave you the chain rule for a chain of scalars: if L depends on y and y depends on x, then dL/dx = (dL/dy)(dy/dx). A neural network is not a chain. It is a branching structure: values split, get reused, and merge back together. This part is the bridge. It takes the scalar chain rule and turns it into a procedure that works on the real shape of a model, so that Parts 3 to 6 can derive one operation at a time and trust that the pieces compose.

By the end you will be able to draw any expression as a graph, walk that graph backward to get every gradient in one pass, and state the one shape rule that prevents most of the bugs you would otherwise hit.

The computational graph

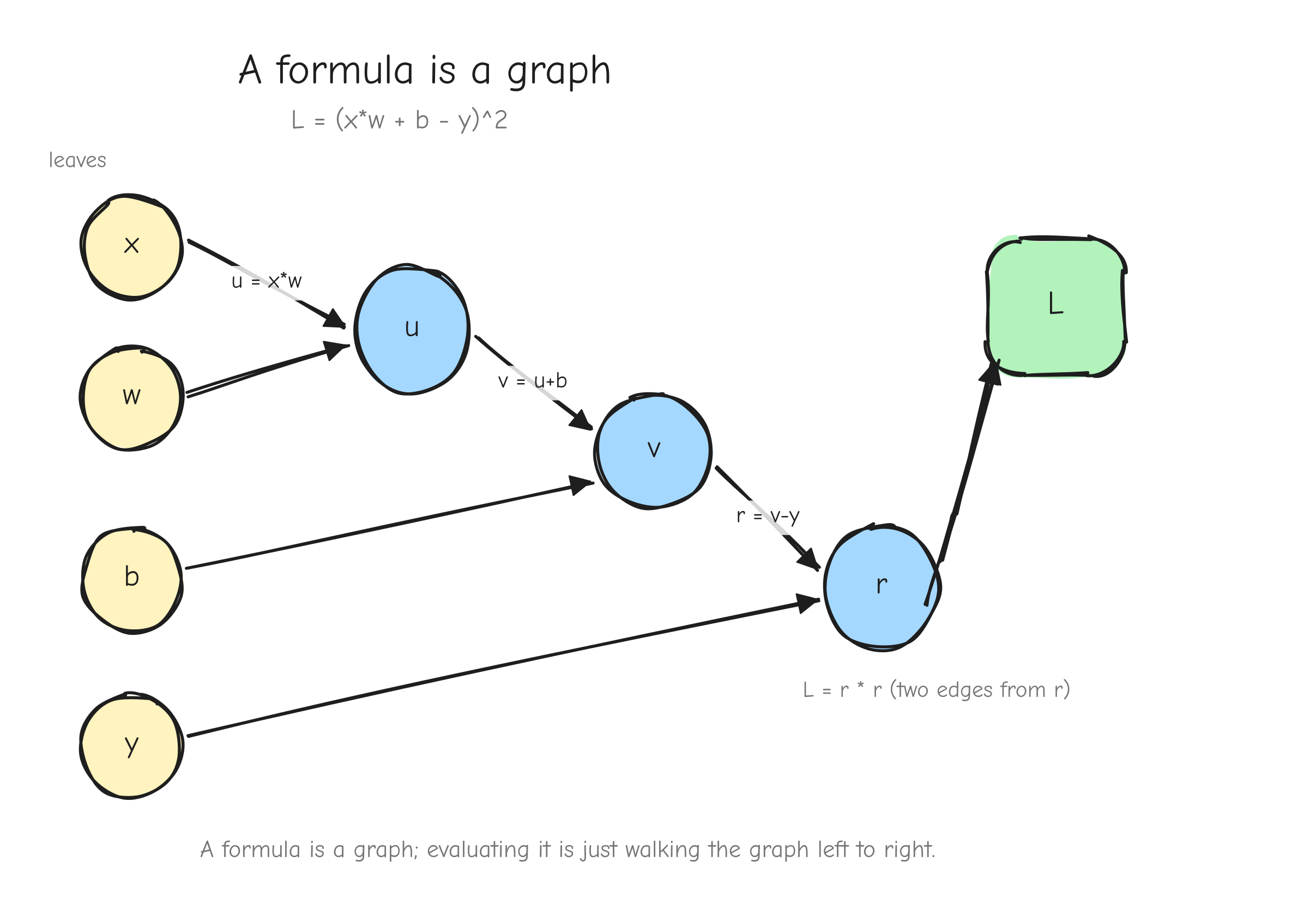

Take a single concrete expression. It is the smallest thing that already has every feature we need:

This is the squared error of a one-number linear model: input x, weight w, bias b, target y, prediction x*w + b, and L the loss. Five inputs, one scalar output.

You cannot differentiate this in one move, and you should not try. Instead, break it into the individual operations the way a machine would evaluate it. Each operation produces a named intermediate value:

u = x * w # multiply

v = u + b # add

r = v - y # subtract

L = r * r # square (r times itself)

Now draw it. Every value (input or intermediate) is a node. Every operation is an edge, or rather a small fan of edges: it has incoming edges from the values it reads and one outgoing path to the value it produces. Inputs x, w, b, y are nodes with nothing feeding them. L is the node with nothing downstream of it.

L = (x*w + b - y)^2. Leaf nodes x, w, b, y on the left. Then u = x*w (fed by x and w), v = u+b (fed by u and b), r = v-y (fed by v and y), and L = r*r (fed by r, with two arrows from r into the square node to make the reuse visible). Arrows point left to right, in the direction of the forward computation. Label each edge with the operation. The takeaway: a formula is a graph, and evaluating the formula is walking the graph forward.This structure is a directed acyclic graph, a DAG. Directed: edges have a direction, from the values an operation reads to the value it writes. Acyclic: nothing feeds back into itself, because each value is computed once from values that already exist. Evaluating the expression means walking the graph from the leaves forward to L. That walk is the forward pass.

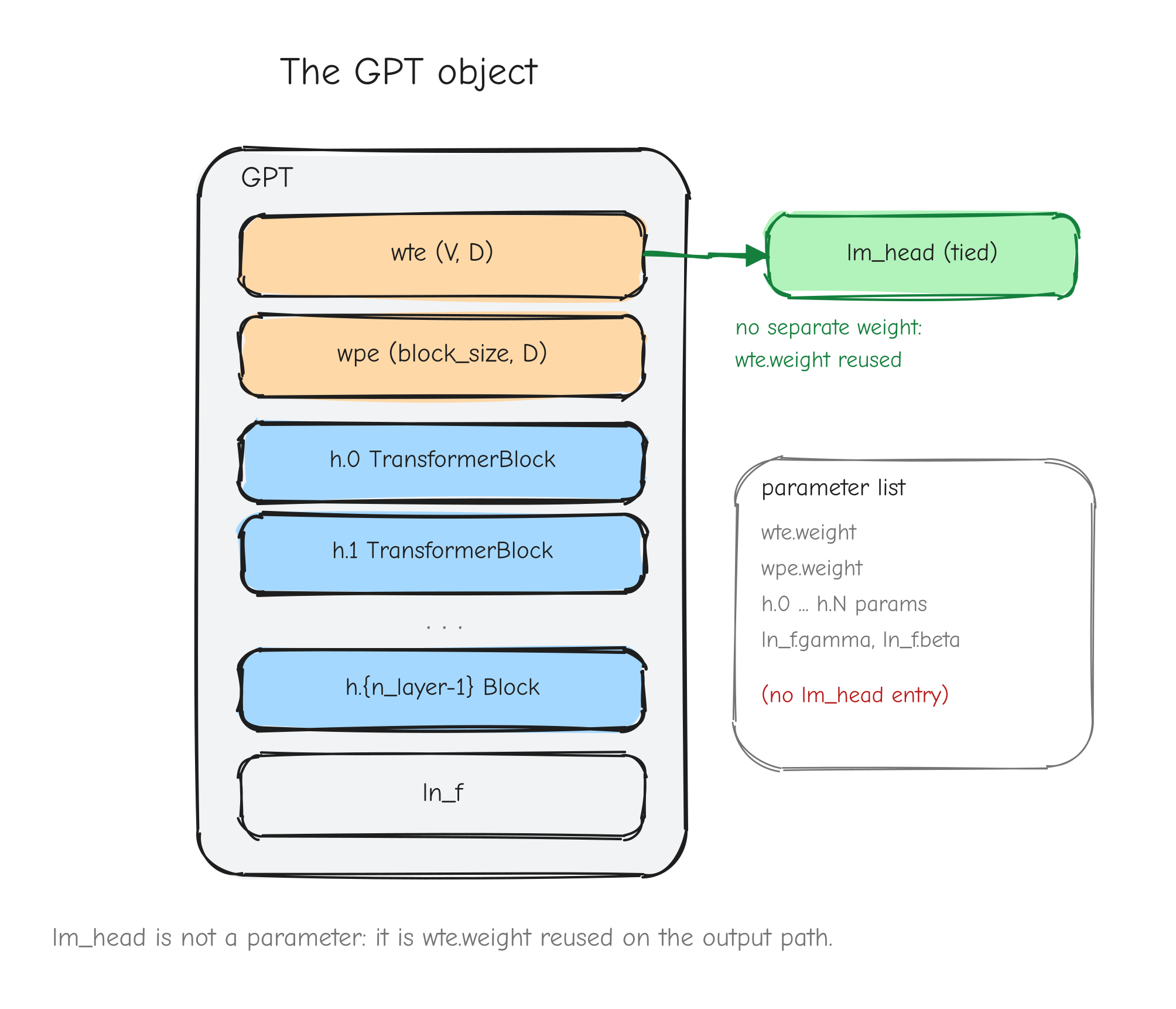

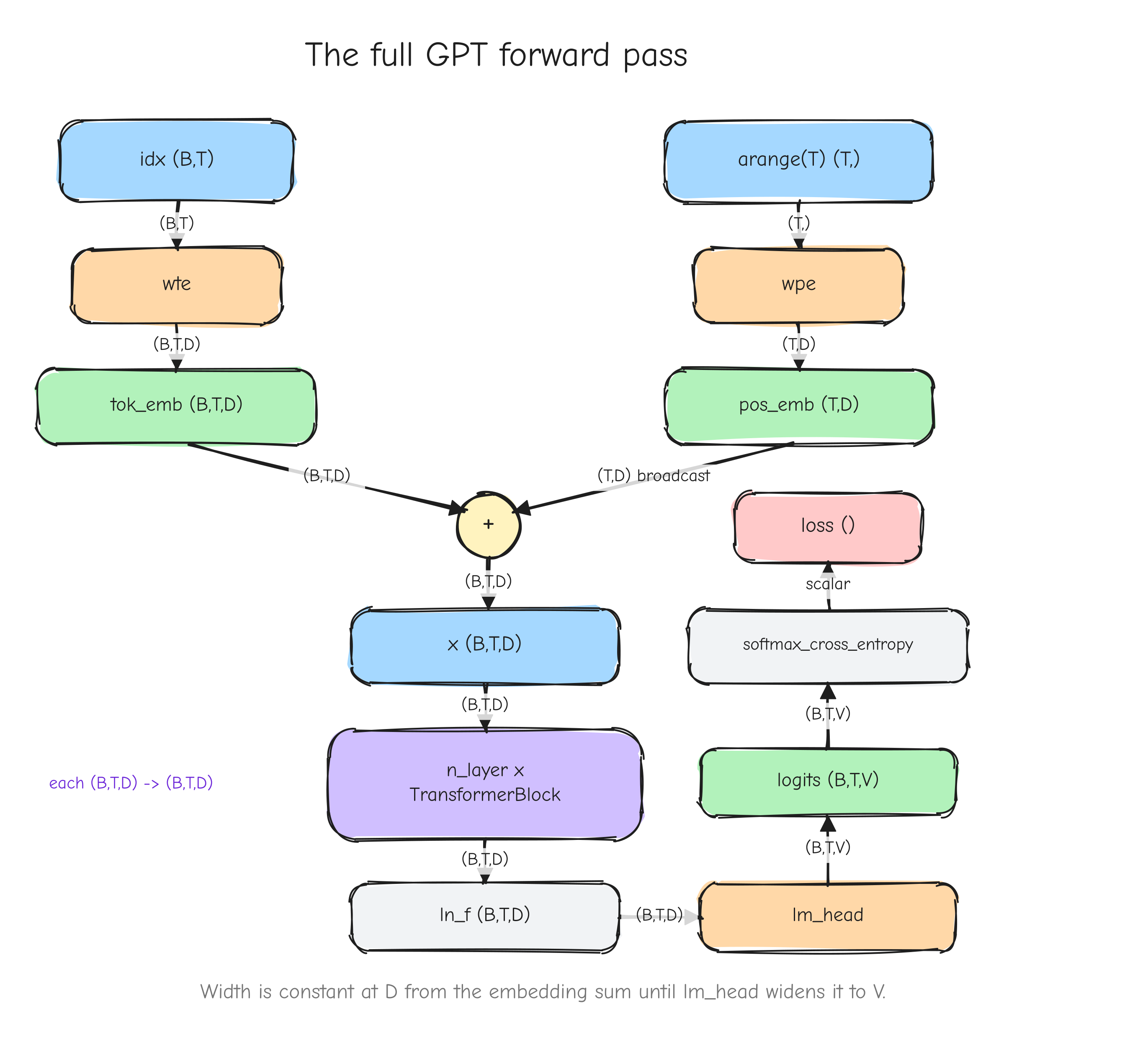

Nothing about this is specific to a one-number model. GPT-2 is the same picture with bigger nodes. The leaves are token ids and parameter tensors, the intermediate nodes are activations of shape (B, T, D) and friends, the operations are matmuls and softmaxes and LayerNorms instead of * and +, and the single scalar at the end is still the loss. The graph is larger and the nodes hold arrays instead of numbers, but it is the same DAG, walked forward the same way.

Forward mode vs reverse mode

We want the gradient of L with respect to every input: dx, dw, db, dy. The chain rule tells us each of these is a product of local derivatives along the paths from that input to L. The question is the order in which we multiply those derivatives. There are two, and the choice is not cosmetic.

Forward mode picks one input and pushes its influence forward. Fix w. Walk the graph from w toward L, and at each node compute the derivative of that node with respect to w:

- at

u = x*w:du/dw = x - at

v = u + b:dv/dw = du/dw = x - at

r = v - y:dr/dw = dv/dw = x - at

L = r*r:dL/dw = 2r * dr/dw = 2r*x

One walk gave you dw. But only dw. To get dx you walk again, fixing x. To get db, walk again. Forward mode does one pass per input.

Reverse mode picks the output and pulls its sensitivity backward. Start at L with dL/dL = 1. Walk the graph from L back toward the leaves, and at each node compute how sensitive L is to that node:

- at

r:dL/dr = 2r - at

v:dL/dv = dL/dr * dr/dv = 2r * 1 = 2r - at

u:dL/du = dL/dv * dv/du = 2r * 1 = 2r - at

b:dL/db = dL/dv * dv/db = 2r * 1 = 2r - at

x:dL/dx = dL/du * du/dx = 2r * w - at

w:dL/dw = dL/du * du/dw = 2r * x - at

y:dL/dy = dL/dr * dr/dy = 2r * (-1) = -2r

One walk gave you all five gradients.

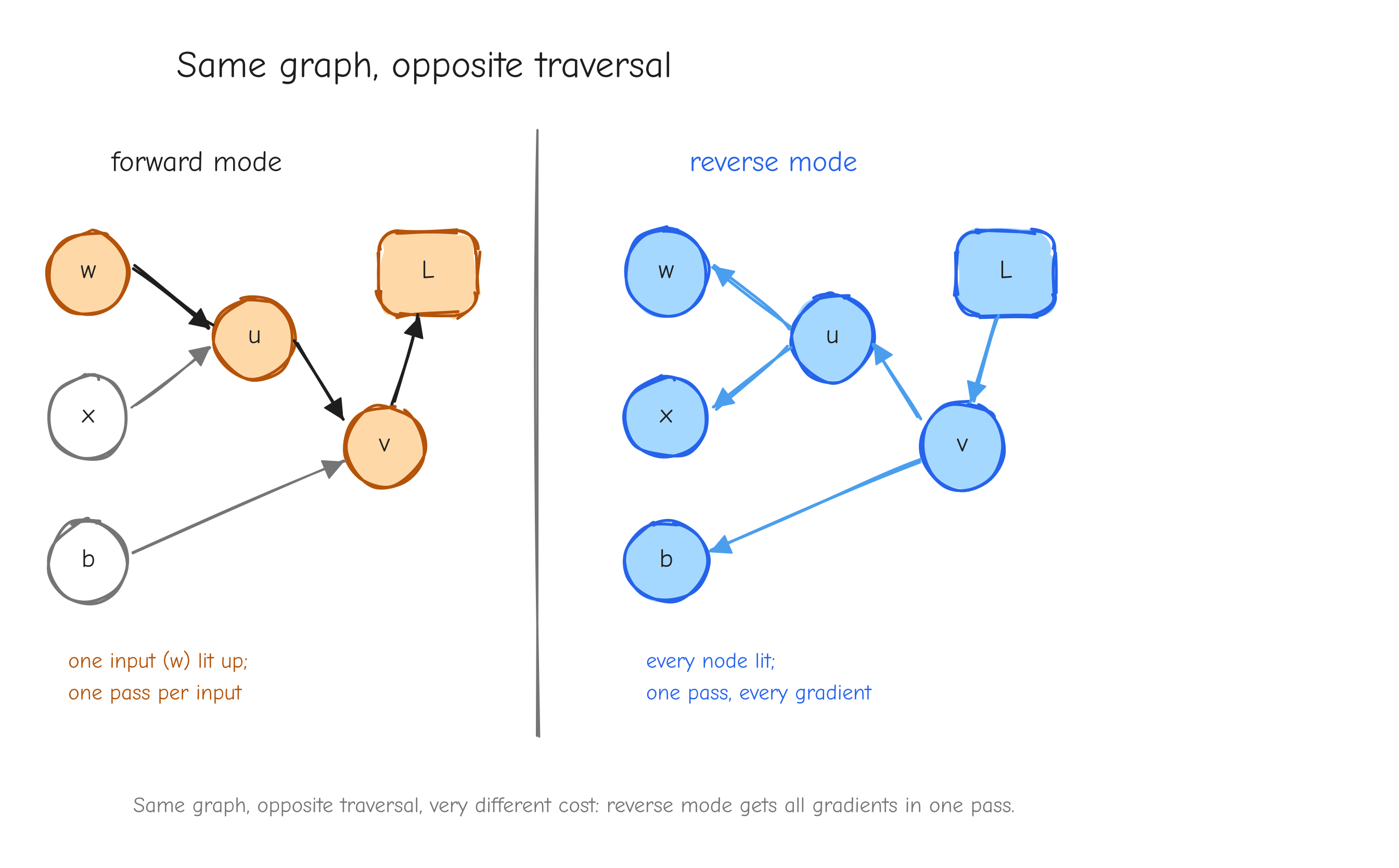

w) lit up and its derivative propagating toward L; a caption noting "one pass per input." Right copy, "reverse mode": arrows reversed, all pointing from L back to the leaves, every node lit up at once; caption "one pass, every gradient." The takeaway: same graph, opposite traversal, very different cost.Here is the rule. The cost of forward mode scales with the number of inputs. The cost of reverse mode scales with the number of outputs. Our setting has many inputs and exactly one output: GPT-2 124M has 124 million parameters, every one an input we need a gradient for, and one scalar loss. Forward mode would mean 124 million passes. Reverse mode means one.

Reverse mode applied to a computational graph is called backpropagation. That is the entire reason it is the algorithm of deep learning: the shape of the problem, many parameters and one scalar loss, is exactly the shape reverse mode is good at.

Backpropagation is the chain rule over the graph

Reverse mode is not a new idea. It is the scalar chain rule from Part 1, applied node by node, with the graph keeping the bookkeeping. Walk the example again, slowly, and name the parts.

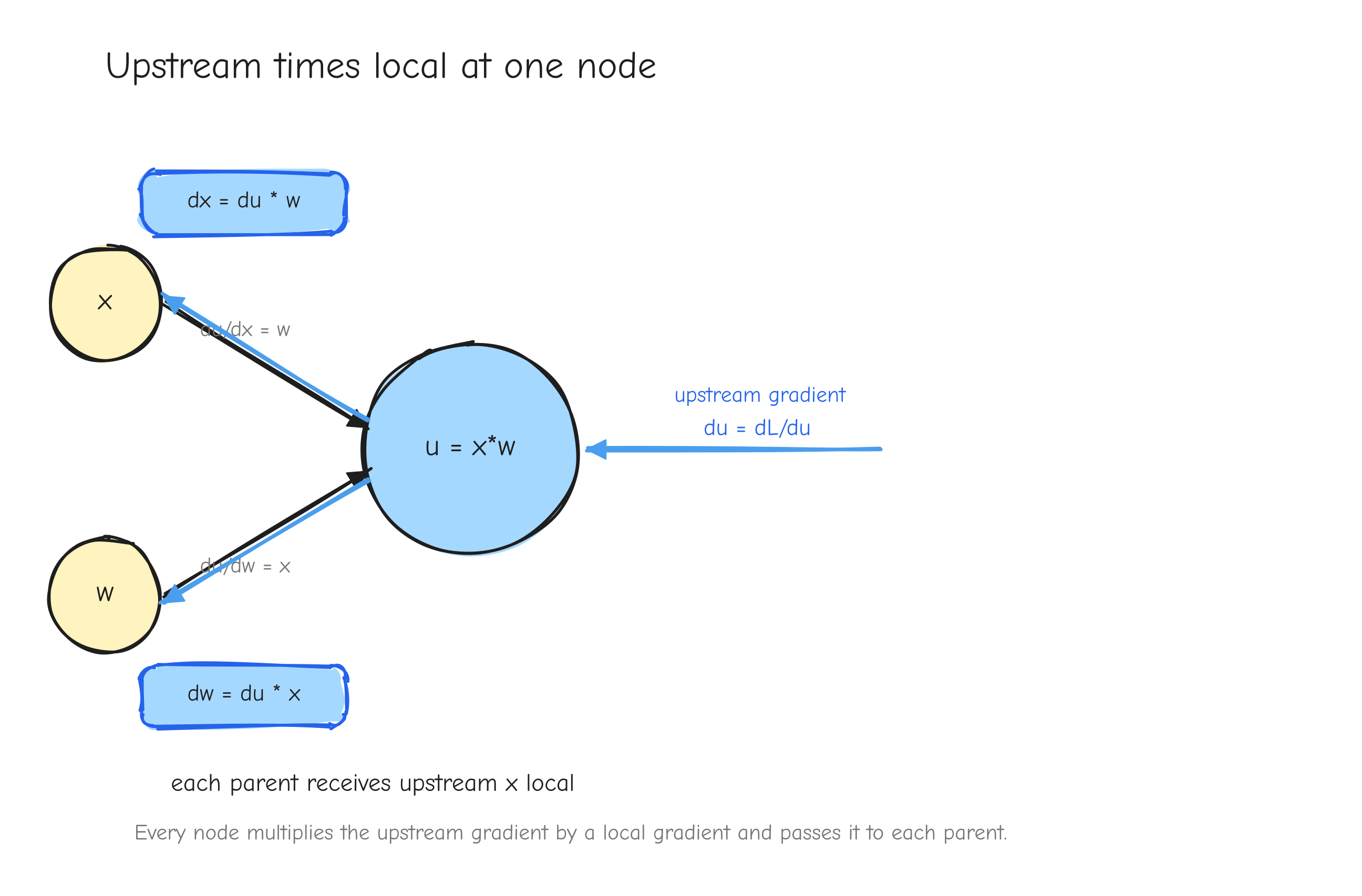

Each node, during the backward pass, receives one thing from downstream: the derivative of L with respect to that node's output. Call it the upstream gradient. The node already knows one thing about itself from the forward pass: the derivative of its output with respect to each of its inputs. Call that the local gradient. The node's job is to multiply them and hand the result to each parent. That product is the chain rule, and it is the whole of backpropagation.

Start at the end. L = r*r. The upstream gradient into L is dL/dL = 1, the seed. L's local gradient with respect to r is d(r*r)/dr = 2r. Multiply: r receives 1 * 2r = 2r. Write dr = 2r.

Move to r = v - y. Its upstream gradient is the dr = 2r we just computed. Its local gradients: dr/dv = 1 and dr/dy = -1. Multiply upstream by each local gradient:

vreceives2r * 1 = 2r, sodv = 2ryreceives2r * (-1) = -2r, sody = -2r

Move to v = u + b. Upstream is dv = 2r. Local gradients: dv/du = 1, dv/db = 1. So du = 2r * 1 = 2r and db = 2r * 1 = 2r. Addition copies its upstream gradient unchanged to both parents. That is a pattern you will see constantly.

Move to u = x * w. Upstream is du = 2r. Local gradients: du/dx = w, du/dw = x. So dx = 2r * w and dw = 2r * x. Multiplication routes the upstream gradient to each parent scaled by the other input.

The leaves x, w, b, y have no parents, so the walk stops. Every gradient is computed, and each one came from the same two-step move: take the upstream gradient, multiply by a local gradient, pass it up.

u = x*w. An arrow coming in from the right labeled "upstream gradient du = dL/du". The node itself labeled with its two local gradients, du/dx = w on the edge to x and du/dw = x on the edge to w. Two arrows leaving to the left, labeled dx = du * w and dw = du * x. The takeaway: every node does the same thing, upstream times local, and passes the product to each parent.Notice what each node needs to know, and what it does not. A node needs its upstream gradient and its own local gradient. It does not need to know anything about the rest of the network. The graph delivers the upstream gradient; the forward pass left behind whatever the node needs to compute its local gradient. This is why Parts 3 to 6 can derive one operation in isolation and trust it: each operation only ever has to answer one local question.

The gradient convention

Part 1 worked with scalars, so "shape" never came up. Real models work with tensors, and shape is where bugs live. We fix this with one convention, the same one stated in derivation.md item 1, and we never break it.

L is the scalar loss. For any tensor X in the graph (a parameter, an input, or an intermediate activation), define

and require that dX has exactly the same shape as X. Entry dX[i,j,...] is the partial derivative of L with respect to entry X[i,j,...]. A (B, T, D) activation has a (B, T, D) gradient. A (D, V) weight matrix has a (D, V) gradient. No exceptions.

This turns every operation's backward function into a contract with fixed shapes on both ends. A forward op Y = f(X, W) has a backward function that:

- receives the upstream gradient

dY, which has the shape of the op's outputY, - returns

dXwith the shape ofX, anddWwith the shape ofW.

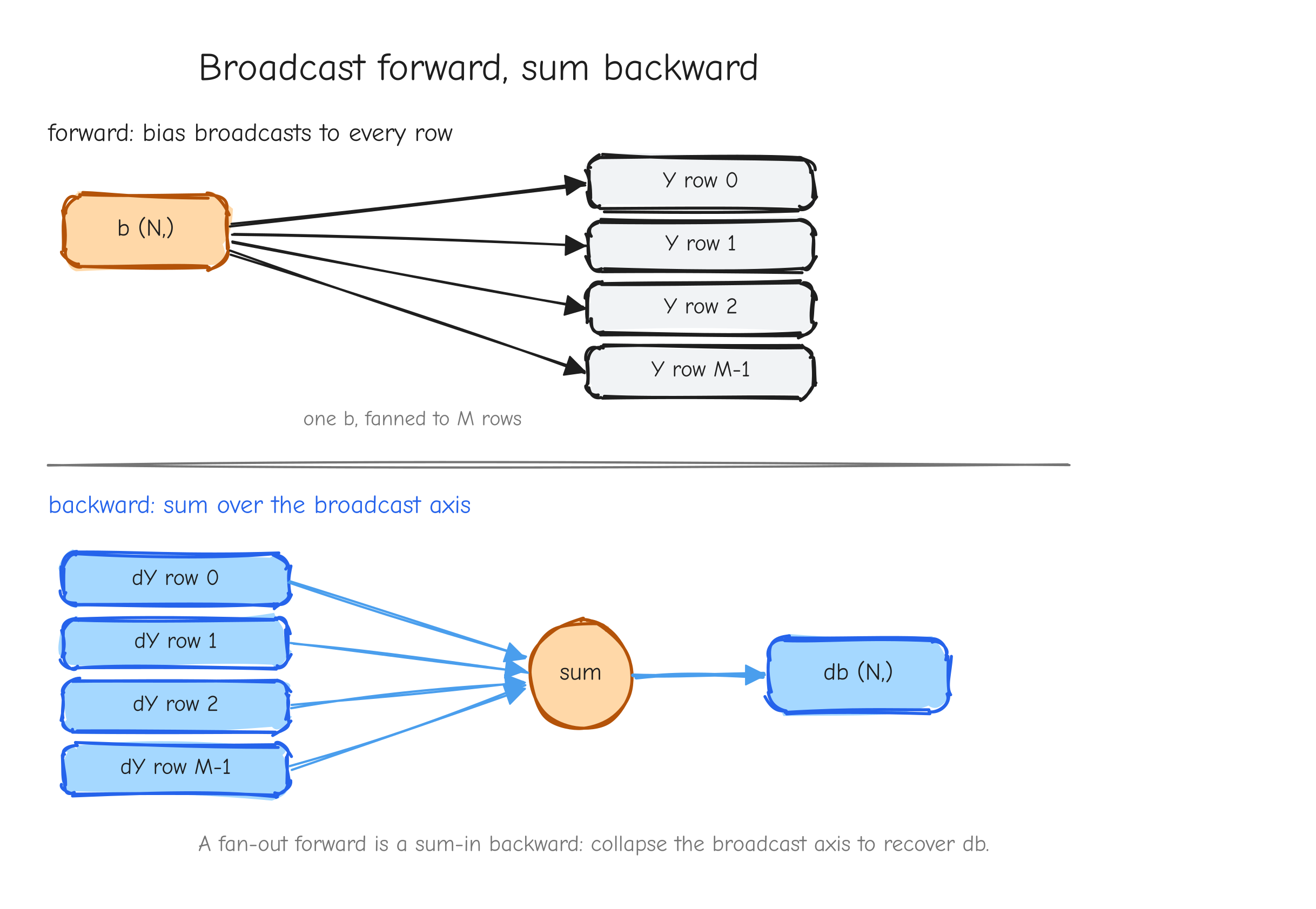

That is the whole interface. The backward function never needs to see the rest of the network; the upstream gradient dY summarizes all of it.

There is one subtlety the convention forces into the open: broadcasting. When a forward op broadcasts an input (a bias of shape (D,) added to an activation of shape (B, T, D), for instance), the output is bigger than that input. The upstream gradient dY then has the output's larger shape, but db must have shape (D,). The convention tells you something must happen on the way back: the gradient has to be summed down over the axes that were broadcast. We will not derive that here. Part 3 does it. But the convention is what makes the requirement visible instead of a surprise.

The per-operation contract

Every operation in Parts 3 to 6 is derived in the same fixed format. You have already seen the shape of it in derivation.md. Here is what each line is for, because the format is doing real work and skipping a line is how derivations go wrong.

- Forward. The equation for

Y = f(...), with the shape of every tensor annotated. You cannot derive a backward pass correctly if you are unsure what the forward pass produced or what shape it is. - Given. The upstream gradient that is available, which is

dY, and its shape. This names the one input to the backward function. - Derive. The actual work: apply the chain rule, usually in index notation, and narrate why each step is taken. For warm-up operations this is a sentence or two. For the deep operations (softmax, LayerNorm, attention) it is the long, careful core of the part.

- Vectorized form. The result rewritten as a NumPy-ready expression on whole arrays, no explicit loops. This is the line that becomes code. It is copied faithfully from

tests/ops.py, not paraphrased. - Shape check. Confirm every returned gradient matches the shape of its input, per the convention above. The fast first test.

- Sanity case. A tiny worked numeric example, 1-D or 2x2, carried all the way through forward and backward by hand.

The Sanity case deserves a word, because it is the line that separates math notes from evidence. A derivation you only read is a claim. A derivation where you plugged in x = 2, w = 3, b = 1, y = 5, computed L forward, computed every gradient backward, and then perturbed one input by a small amount and watched L change by the predicted amount, that is a derivation you have tested. It can still be wrong, but it is no longer just a claim. This is the same discipline as the numerical gradient check in Part 7, done by hand, small enough to do in the margin. Do not skip it. The worked examples in derivation.md are exactly these sanity cases.

Gradient accumulation, previewed

There is one feature of real graphs the small example only barely showed, and it is worth flagging now because it is a classic place to lose a factor.

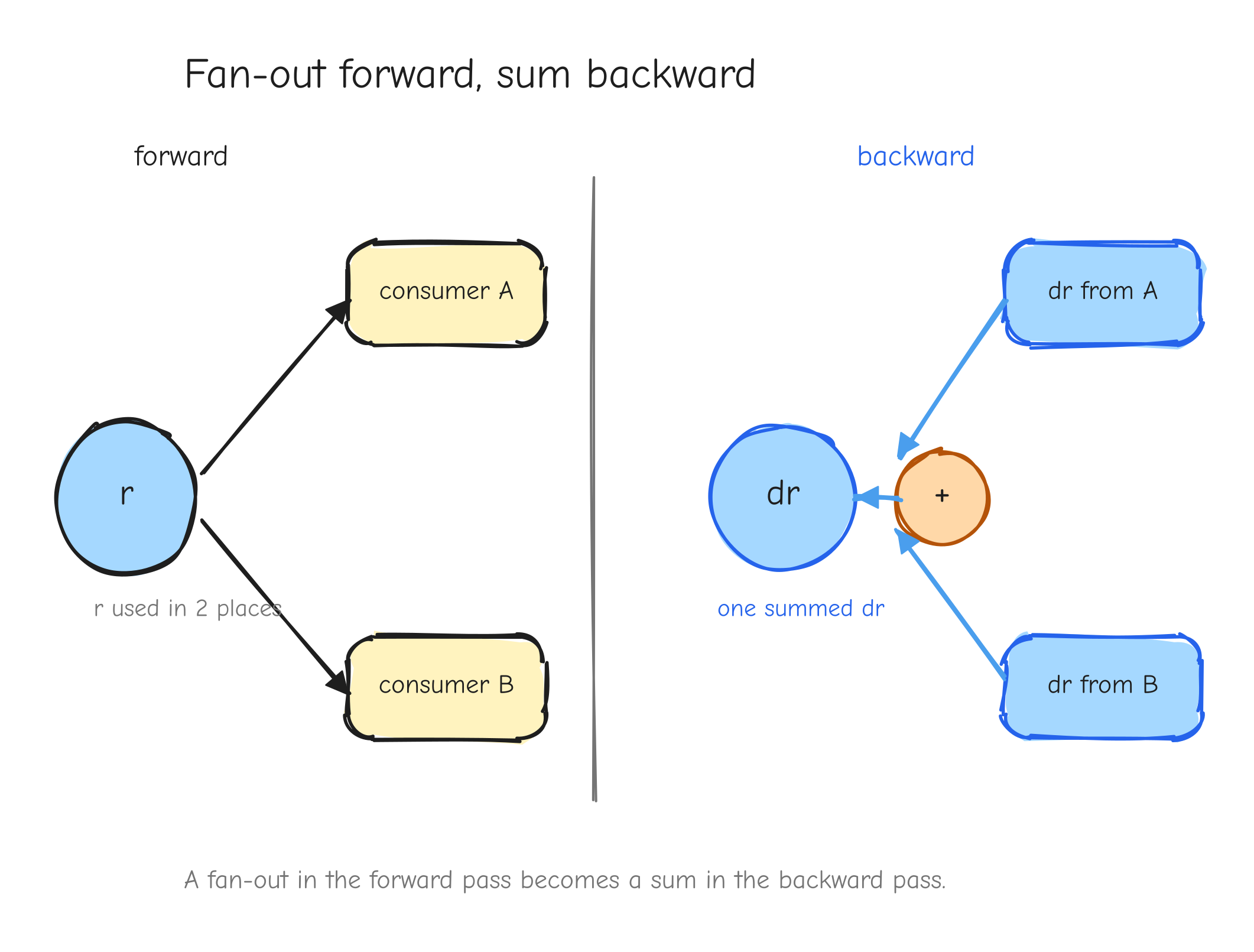

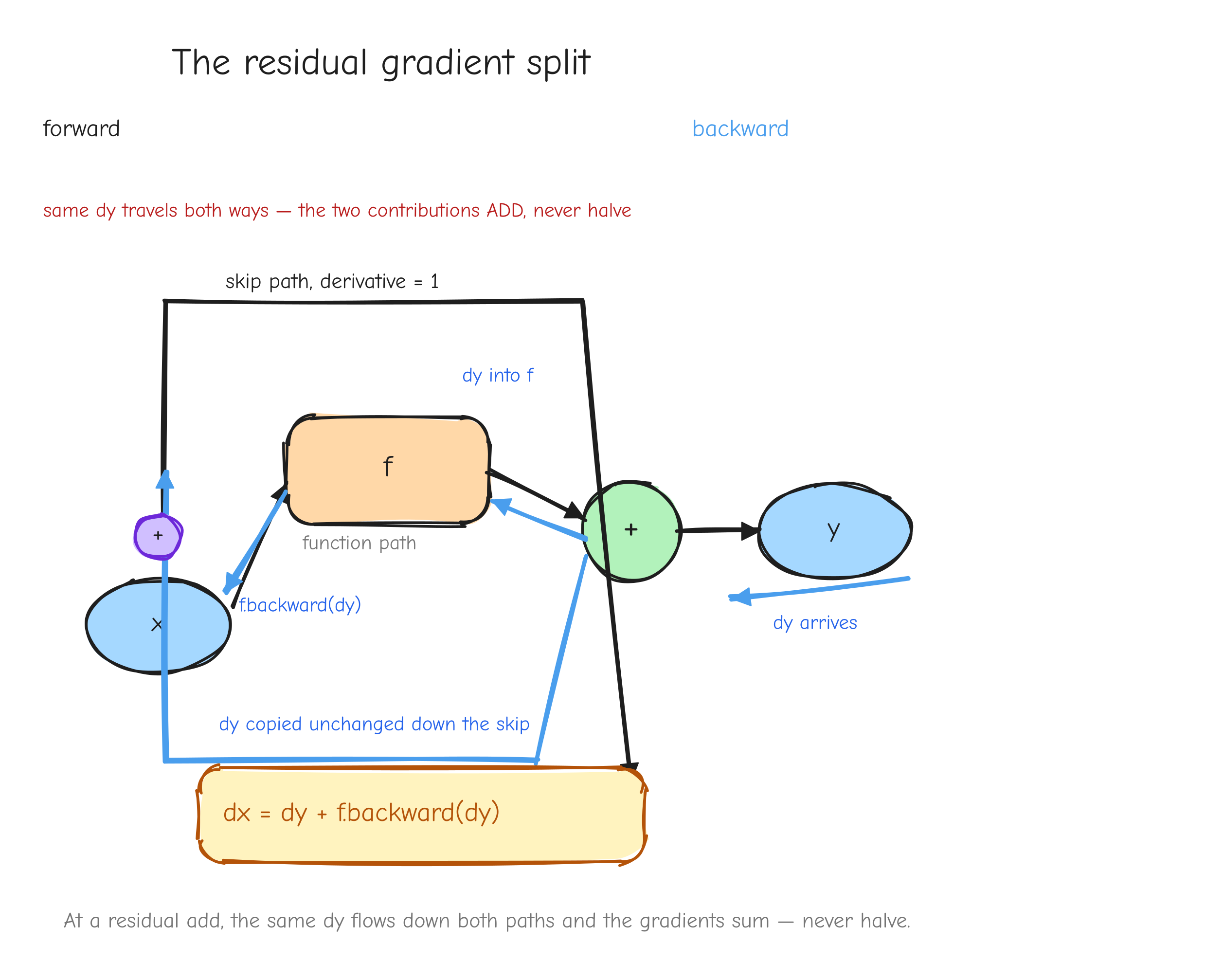

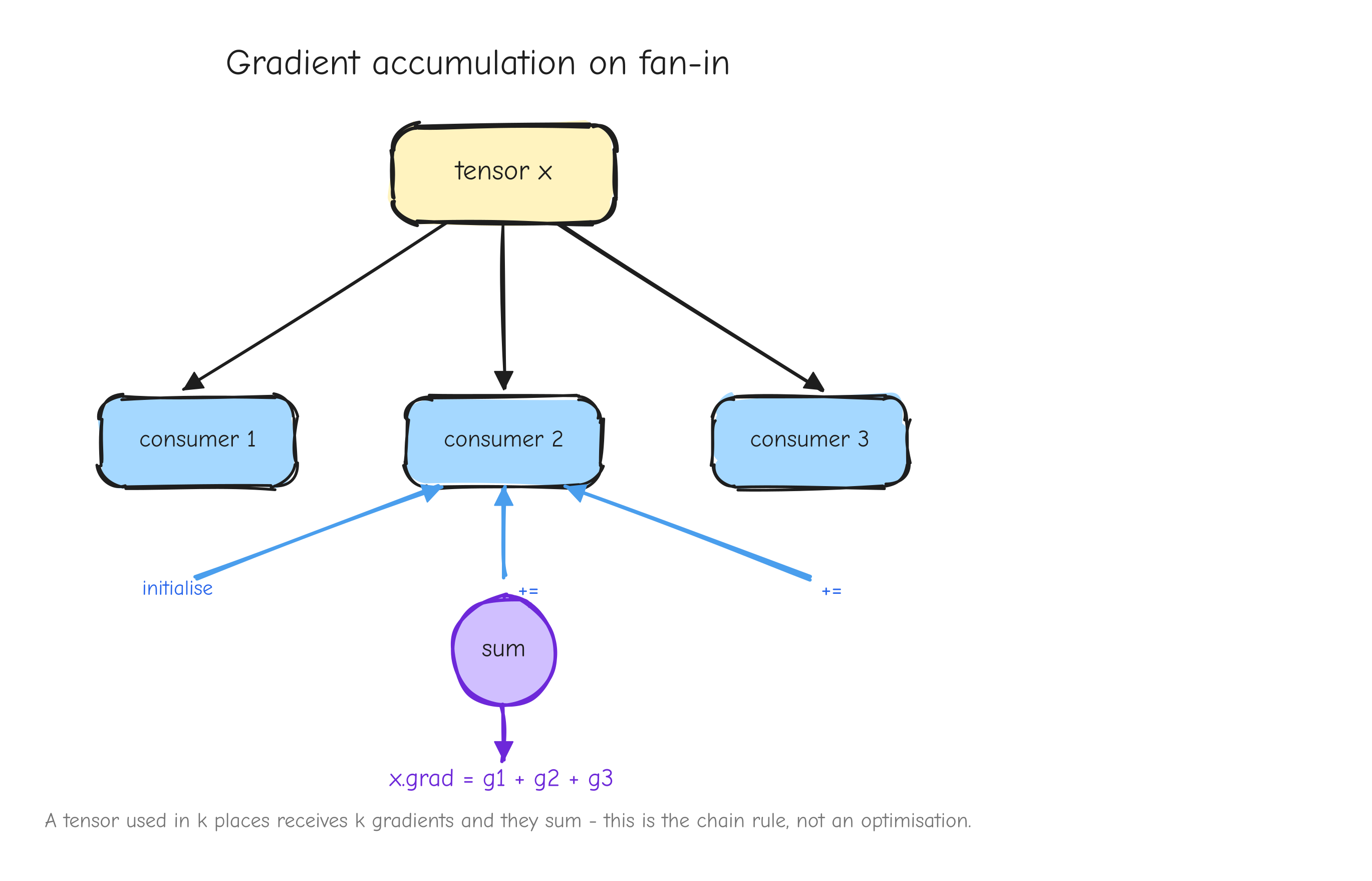

Look again at L = r * r. The value r is used twice: it feeds the square node as both the left and the right input. In the graph, r has fan-out of two. When the backward pass reaches r, it does not get one upstream gradient. It gets one from each place r was used: r * 1 from being the left input, and r * 1 from being the right input. The gradient of r is the sum of those contributions: dr = r + r = 2r. That is where the 2r comes from. Not from a power rule you memorized, but from r fanning out to two consumers and its gradient accumulating across them.

The rule is general and it comes straight from the chain rule in Part 1: if a value feeds more than one place in the graph, its gradient is the sum of the contributions from every consumer. A value used in k places receives k gradients, and they add.

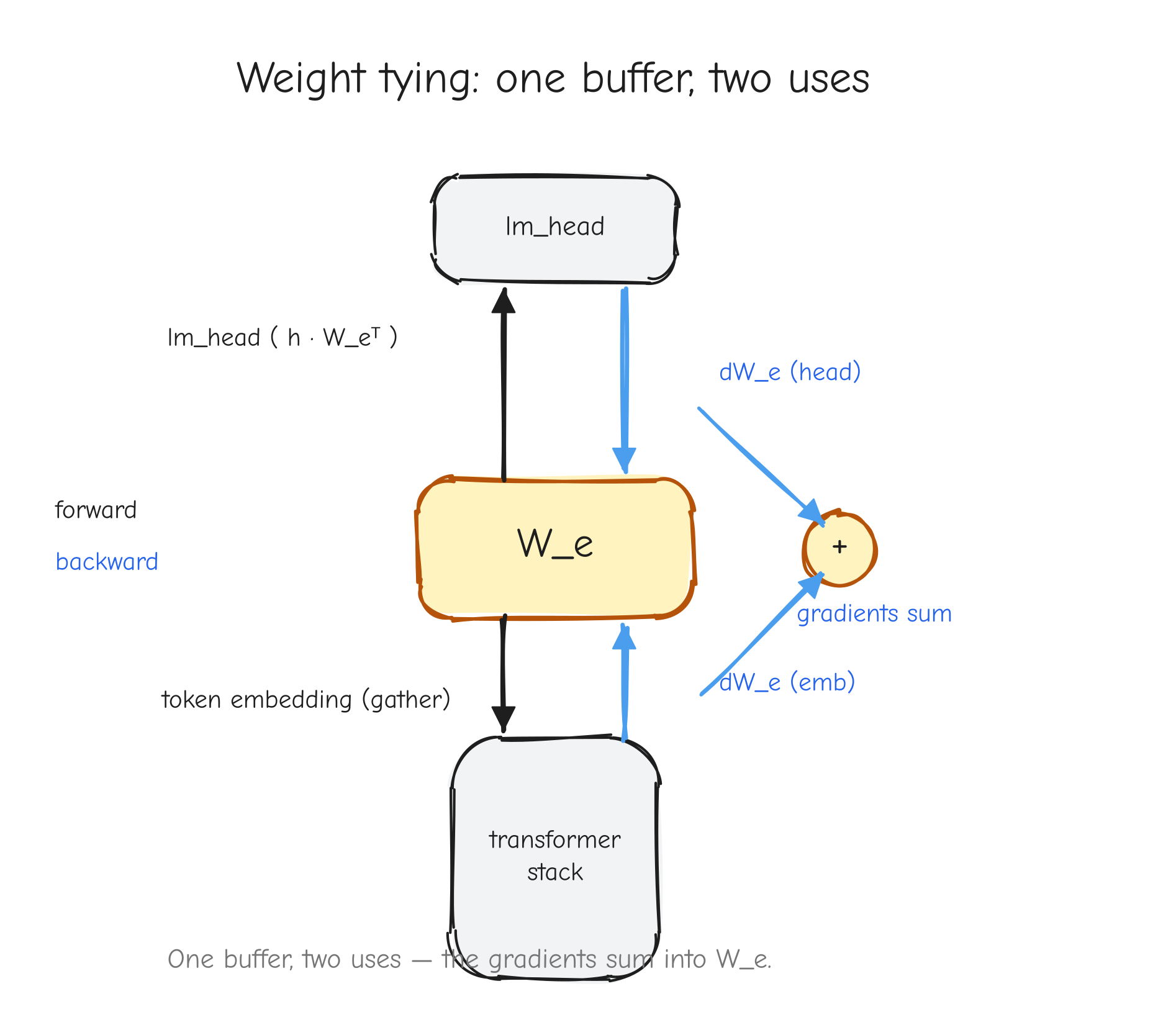

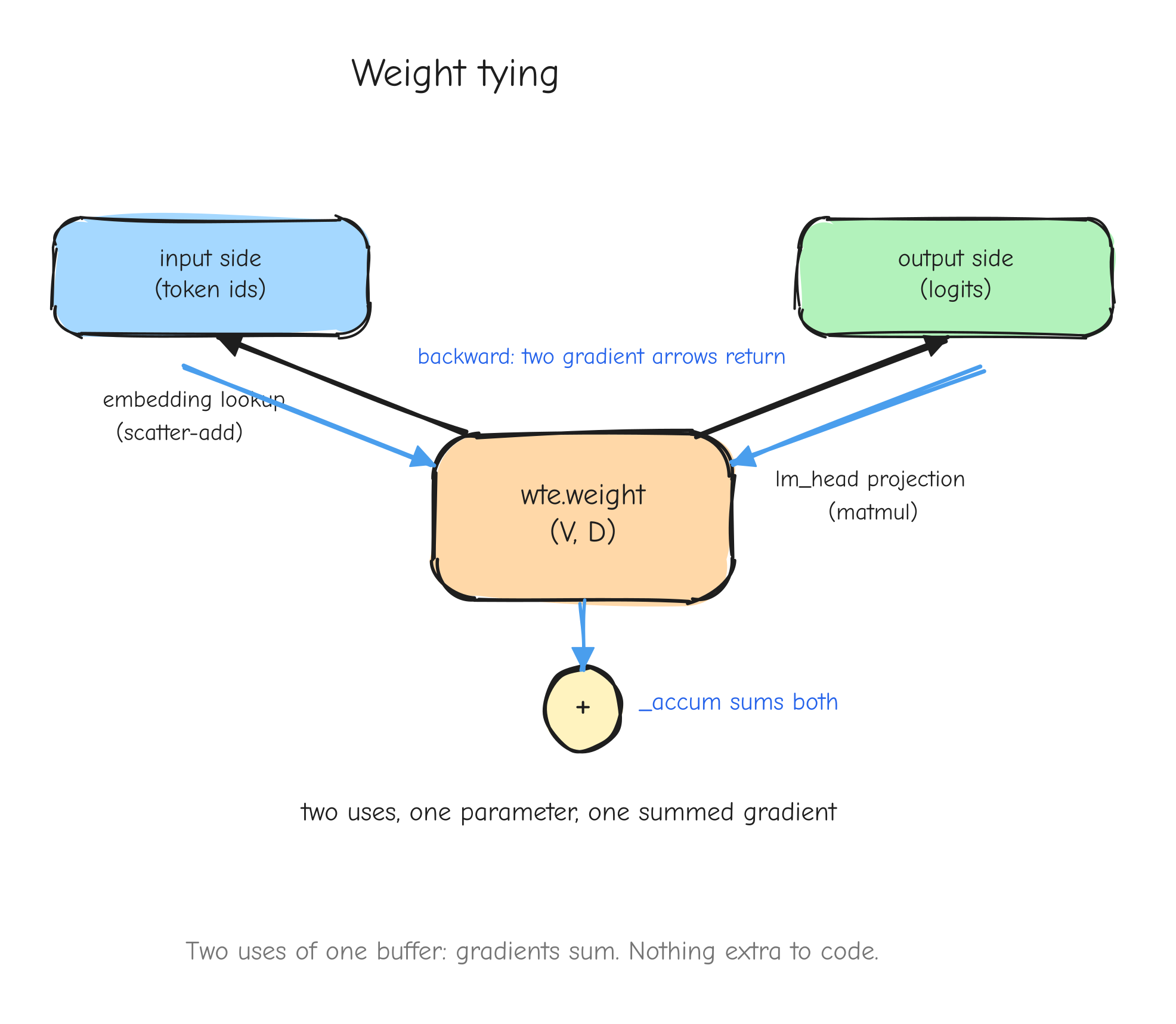

r with two arrows leaving it forward, into two different downstream consumers (left input and right input of the square). On the backward pass, two arrows coming back into r, one from each consumer, meeting at a + symbol that produces the single dr. The takeaway: fan-out forward becomes a sum backward.This is not an edge case in a real model. It is everywhere. A residual connection adds a value to the output of a block, which means that value feeds both the block and the addition: fan-out two, gradients accumulate. Weight tying uses the same embedding matrix at the input and at the output: fan-out two, gradients accumulate. Both are derived in later parts.

Your turn

Part 3: Derivations Tier 1 to 2, warm-ups and linear algebra

Part 2 gave you the per-op contract: every operation owns a backward function that takes the upstream gradient dY and returns a gradient for each of its inputs, every returned gradient the same shape as the input it belongs to. This part is where you apply that contract for real, on the smallest operations in the model.

These are the building blocks. Element-wise addition, scaling, the activation functions, matrix multiply, the reductions, transpose and reshape. None of them is hard on its own. The point of doing them carefully now is twofold: you build the index-notation muscle on operations simple enough that you can check every step by hand, and you produce the exact NumPy backward expressions that Part 8 will wire into the autograd engine. Every op in this part passes numerical gradient checking in Part 7.

Each operation uses the fixed derivation box: Forward, Given, Derive, Vectorized form, Shape check, Sanity case. The warm-ups get a compact Derive. Two operations get the full narrated index-notation treatment: GeLU and matrix multiply. Those two are the ones you should be able to redo from memory.

Tier 1: warm-ups

Element-wise addition

Forward. Y = A + B. In the plain case A, B, Y are all the same shape, say (M, N), and Y_{ij} = A_{ij} + B_{ij}. NumPy also allows broadcasting: a smaller input is stretched to match. The case that matters for the model is the bias add, Y = X + b, where X is (M, N) and b is (N,) added to every row.

Given. Upstream gradient dY, the shape of Y.

Derive. Equal shapes first. Y_{ij} depends on A_{ab} only when (i,j) = (a,b), and then with coefficient 1, so and the VJP collapses to . Addition copies the gradient through: dA = dY, and by symmetry dB = dY.

Now the broadcast case. With b a row vector broadcast over the M rows, Y_{ij} = A_{ij} + b_j, so b_j influences every row and , independent of i. The VJP sums over the row index that b was stretched along:

The gradient for a broadcast input is dY summed over the axes that were expanded. dA = dY still, because A was not broadcast.

N bias vector b drawn once, with arrows fanning out to each of the M rows of Y, every row getting the same b. Bottom row, backward: the M rows of dY drawn, with arrows converging back into a single length-N db, labeled "sum over the broadcast axis." The takeaway: a fan-out forward is a sum-in backward.Vectorized form. From tests/ops.py, the general broadcasting add reduces each gradient back to its input shape with a helper:

def _unbroadcast(grad, shape):

grad = np.asarray(grad, dtype=np.float64)

while grad.ndim > len(shape):

grad = grad.sum(axis=0)

for axis, dim in enumerate(shape):

if dim == 1 and grad.shape[axis] != 1:

grad = grad.sum(axis=axis, keepdims=True)

return grad

def add(a, b):

Y = a + b

def bwd(dY):

return {'a': _unbroadcast(dY, np.shape(a)).copy(),

'b': _unbroadcast(dY, np.shape(b)).copy()}

return Y, bwd

For equal shapes _unbroadcast is a no-op and this is just dA = dY, dB = dY. The dedicated bias-add op writes the broadcast sum out directly:

def add_bias(x, b):

Y = x + b

def bwd(dY):

axes = tuple(range(dY.ndim - 1))

return {'x': dY.copy(), 'b': dY.sum(axis=axes)}

return Y, bwd

axes = tuple(range(dY.ndim - 1)) is every axis except the last, so dY.sum(axis=axes) collapses (B, T, N) or (M, N) down to (N,), matching b.

Shape check. dx is dY.copy(), same shape as x. db is dY summed over all but the last axis, shape (N,), same shape as b.

Sanity case. Let A = [[1, 2], [3, 4]] and b = [10, 20]. Forward broadcasts b over both rows: Y = [[11, 22], [13, 24]]. Take dY = [[0.5, 1.0], [2.0, 3.0]]. Then dA = dY, and db = dY.sum(axis=0) = [0.5+2.0, 1.0+3.0] = [2.5, 4.0]. Check by perturbation: bumping b_0 by epsilon raises both Y_{00} and Y_{10} by epsilon, changing L by (dY_{00} + dY_{10}) * epsilon = 2.5 * epsilon, so db_0 = 2.5.

Scalar multiply

Forward. Y = c * X, with c a fixed constant (not a parameter, it carries no gradient). Y_{ij} = c * X_{ij}.

Given. Upstream gradient dY, shape of X.

Derive. , so the VJP gives dX_{ab} = c * dY_{ab}. Scaling the forward by c scales the backward by the same c:

This is the attention scaling step: the scores get multiplied by c = 1/sqrt(d), so that step's backward is dX = dY / sqrt(d).

Vectorized form. From tests/ops.py:

def scale(x, c):

Y = c * x

def bwd(dY):

return {'x': c * dY}

return Y, bwd

Shape check. c * dY has the shape of dY, which is the shape of X.

Sanity case. Let c = 0.5 and X = [[2, -4], [6, 8]], so Y = [[1, -2], [3, 4]]. With dY all ones, dX = 0.5 * dY is all 0.5. Bumping X_{00} by epsilon changes Y_{00} by 0.5 * epsilon, hence L by 0.5 * epsilon, so dX_{00} = 0.5.

Element-wise nonlinearity (generic)

Forward. Y = f(X) applied entrywise: Y_{ij} = f(X_{ij}), for some scalar function f.

Given. Upstream gradient dY, shape of X.

Derive. Because f runs independently on each entry, Y_{ij} depends only on X_{ij}: . The VJP collapses to

Evaluate the scalar derivative f' at every entry of X, multiply element-wise by dY. Every activation in the model is a special case of this. The only work left for a specific activation is writing down f'.

ReLU

Forward. f(x) = max(0, x).

Given. Upstream gradient dY, shape of X.

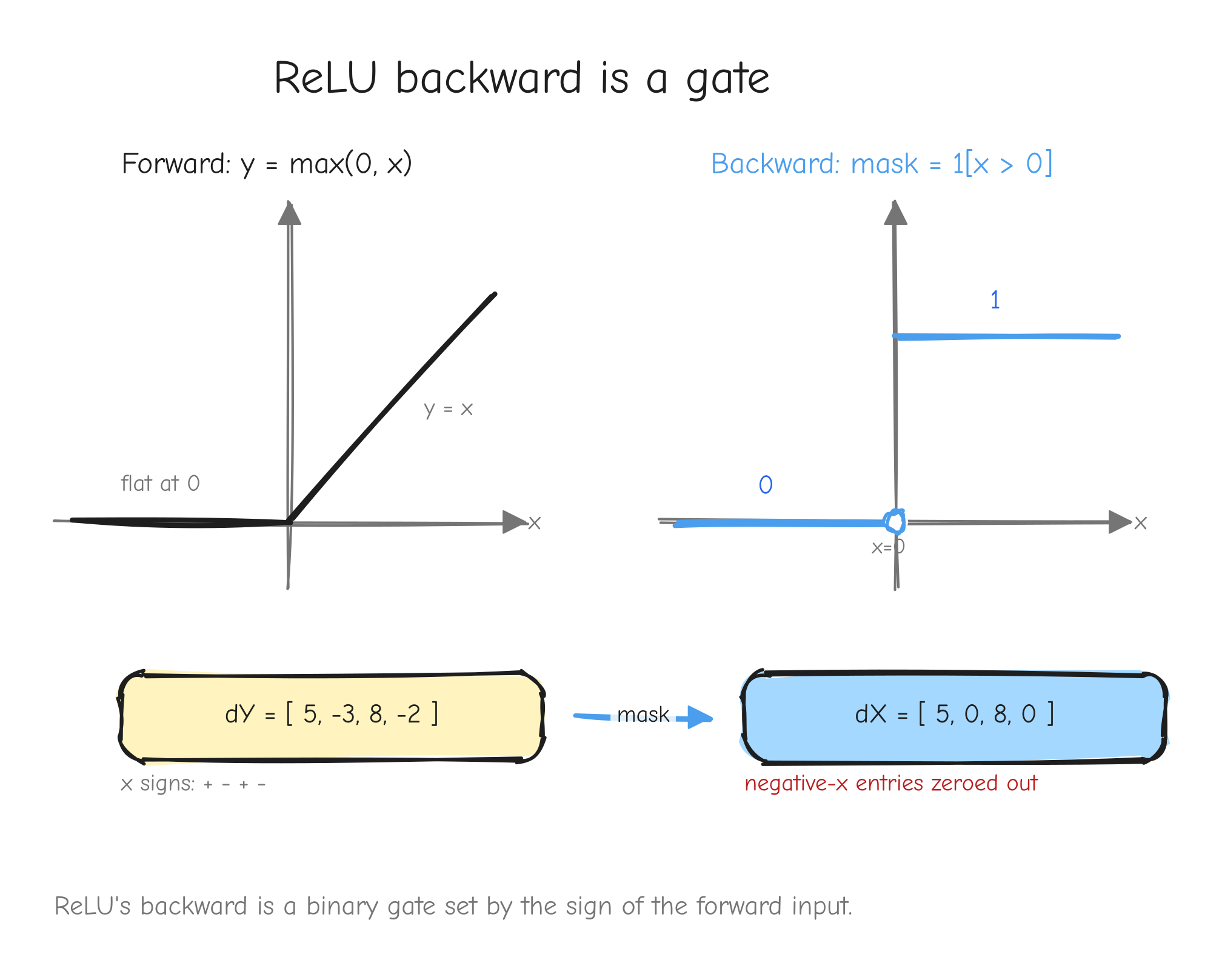

Derive. The derivative of max(0, x) is 1 where x > 0 and 0 where x < 0. The kink at x = 0 is not differentiable; by convention assign it 0. So f'(x) = 1[x > 0] and, from the generic rule, dX = 1[X > 0] ⊙ dY. ReLU gates the gradient: it passes through untouched where the input was positive, and is killed where the input was not.

x < 0 and y = x for x > 0. Right: the gradient mask, a step function that is 0 for x < 0 and 1 for x > 0, with a hollow dot at x = 0. An arrow from a sample dY vector passing through the mask, entries at negative-x positions zeroed out. The takeaway: ReLU's backward is a binary gate set by the sign of the forward input.Vectorized form. From tests/ops.py:

def relu(x):

Y = np.maximum(0.0, x)

mask = (x > 0.0)

def bwd(dY):

return {'x': mask * dY}

return Y, bwd

The mask is computed in the forward and captured by the closure, so the backward is a single multiply.

Shape check. mask has the shape of x, so mask * dY has the shape of x.

Sanity case. X = [[-1, 2], [0, 3]] gives Y = [[0, 2], [0, 3]] and mask = [[0, 1], [0, 1]]. With dY = [[5, 6], [7, 8]], dX = [[0, 6], [0, 8]]. Check: bumping X_{01} = 2 by a small positive epsilon raises Y_{01} by epsilon, so L by 6 * epsilon, giving dX_{01} = 6. Bumping X_{00} = -1 leaves Y_{00} = 0 unchanged, so dX_{00} = 0.

GeLU (exact erf form)

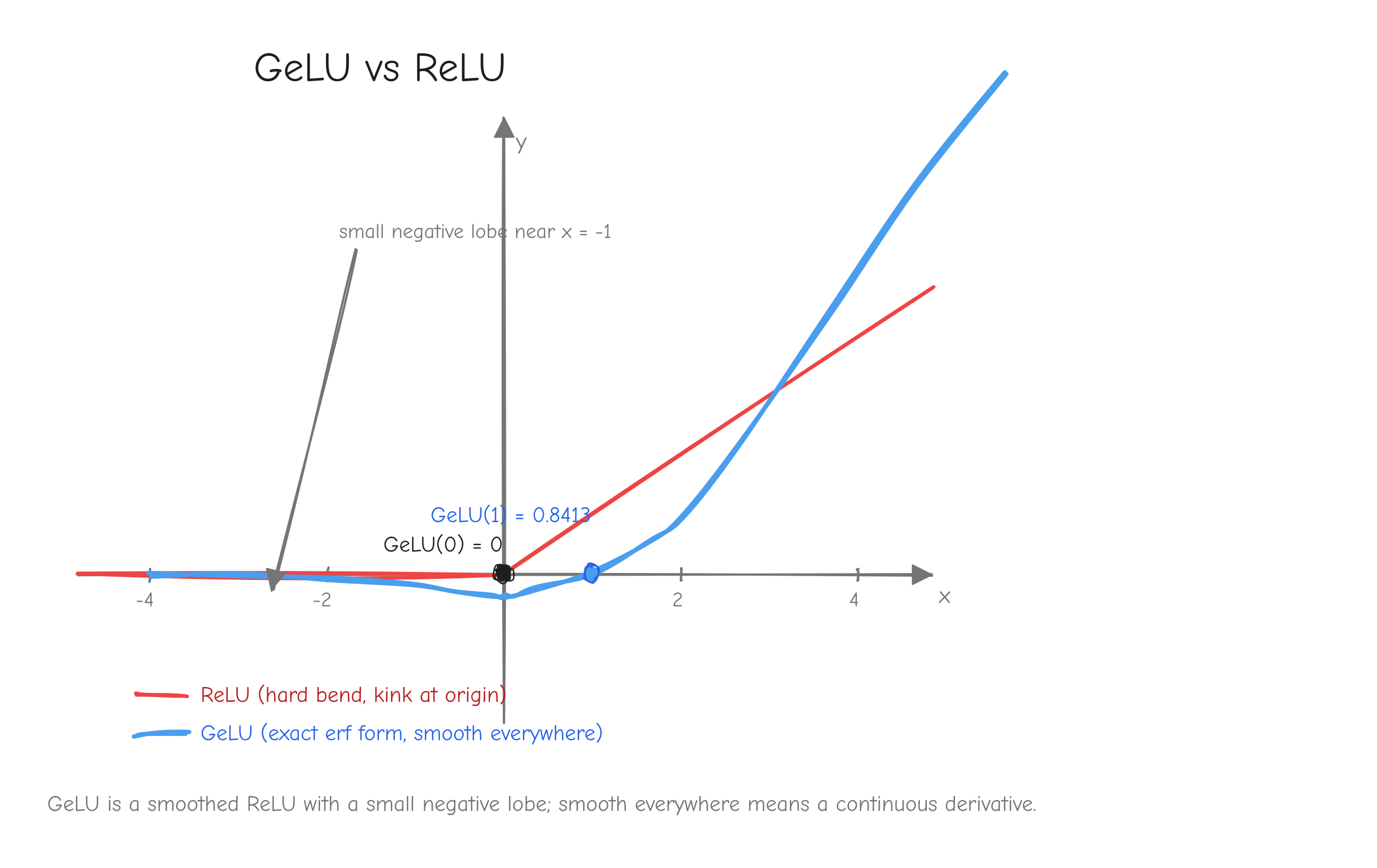

This is the first deep derivation. ReLU's derivative was a step function you could read off by inspection. GeLU's derivative needs the product rule and the chain rule, and it is worth doing slowly because the model uses GeLU in every MLP block.

Forward. GeLU is the input x scaled by the standard normal CDF Phi:

Phi(x) is the standard normal CDF: the probability that a draw from a mean-0 variance-1 Gaussian lands at or below x. It runs smoothly from 0 at x = -infinity to 1 at x = +infinity, passing through 0.5 at x = 0. So GeLU multiplies each input by a smooth, input-dependent number between 0 and 1. For very negative x that number is near 0 and the input is suppressed; for very positive x it is near 1 and the input passes almost unchanged. erf is the error function, the integral form that Phi is built from.

Given. Upstream gradient dY, shape of X.

Derive. f(x) = x * Phi(x) is a product of two functions of x: the factor x and the factor Phi(x). The product rule says the derivative of a product is the derivative of the first times the second, plus the first times the derivative of the second:

So we need Phi'(x), the derivative of the CDF. The derivative of a CDF is its PDF, the density phi(x). Get it explicitly. Phi(x) = 0.5 * (1 + erf(x/sqrt(2))), so differentiate the erf term. The derivative of erf(z) is (2/sqrt(pi)) * exp(-z^2), and here z = x/sqrt(2) so by the chain rule we also multiply by dz/dx = 1/sqrt(2):

That is the standard normal PDF. Putting it together, the fully explicit GeLU derivative is

The backward is the generic element-wise rule with this f': dX = f'(X) ⊙ dY. The forward already computes Phi(X), so the backward reuses it and only adds X ⊙ phi(X).

This is the exact form, built on erf. It is not the tanh approximation. The original GPT-2 and older nanoGPT used the tanh approximation 0.5x(1 + tanh(sqrt(2/pi)(x + 0.044715 x^3))), which agrees with the exact form only to about 1e-3. The nanoGPT model.py this course mirrors uses nn.GELU(), the exact erf form, so the implementation uses the exact form too, for faithful parity with the reference checkpoint. The distinction looks harmless. It is not: Part 14 covers a roadblock that came directly from mixing the two variants.

x from about -4 to 4. Curve 1: ReLU, the hard bend at the origin. Curve 2: GeLU, the exact erf form, a smooth curve that dips slightly negative around x = -1 before flattening to 0 on the left, and hugs y = x on the right. Mark GeLU(1) = 0.8413 and GeLU(0) = 0. The takeaway: GeLU is a smoothed ReLU with a small negative lobe, and being smooth everywhere is what gives it a continuous derivative.Vectorized form. From tests/ops.py:

def gelu(x):

Phi = 0.5 * (1.0 + _erf(x / _SQRT2))

Y = x * Phi

def bwd(dY):

phi = np.exp(-0.5 * x * x) * _INV_SQRT_2PI

fprime = Phi + x * phi

return {'x': fprime * dY}

return Y, bwd

with the module constants _SQRT2 = np.sqrt(2.0) and _INV_SQRT_2PI = 1.0 / np.sqrt(2.0 * np.pi). Phi is computed once in the forward and reused by the closure; phi and fprime are formed in the backward.

Shape check. Phi, phi, fprime all have the shape of x, so fprime * dY has the shape of x.

Sanity case. Take x = 1. Forward: x/sqrt(2) = 0.7071068, erf(0.7071068) = 0.6826895, so Phi(1) = 0.5 * (1 + 0.6826895) = 0.8413447 and f(1) = 1 * Phi(1) = 0.8413447. That matches the known value GeLU(1) ≈ 0.8413. Derivative: phi(1) = exp(-0.5) / sqrt(2*pi) = 0.6065307 / 2.5066283 = 0.2419707, so f'(1) = Phi(1) + 1 * phi(1) = 0.8413447 + 0.2419707 = 1.0833154. With an upstream dY entry of 2.0, dX = f'(1) * 2.0 ≈ 2.1666. The tanh approximation would give f(1) ≈ 0.84119 and f'(1) ≈ 1.0830, off in the fourth decimal, which is exactly why the implementation and the parity reference must use the same variant.

Tier 2: linear algebra ops

Matrix multiply

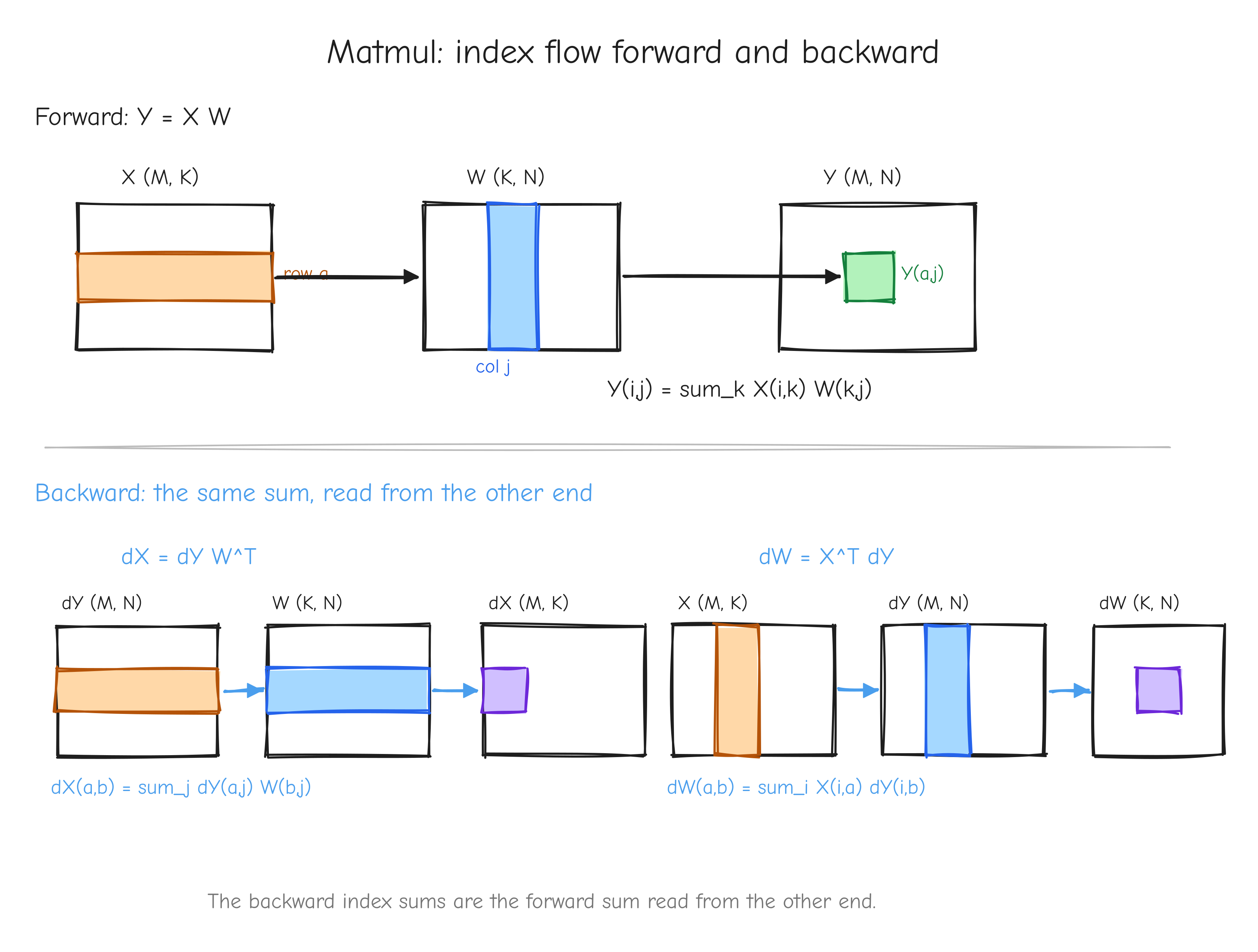

This is the second deep derivation, and the most important one in the part. Matmul is the workhorse of the model: every projection, every attention score matrix, the output head. The backward is two lines of NumPy, but you should be able to rederive those two lines from index notation without looking. Do this one slowly.

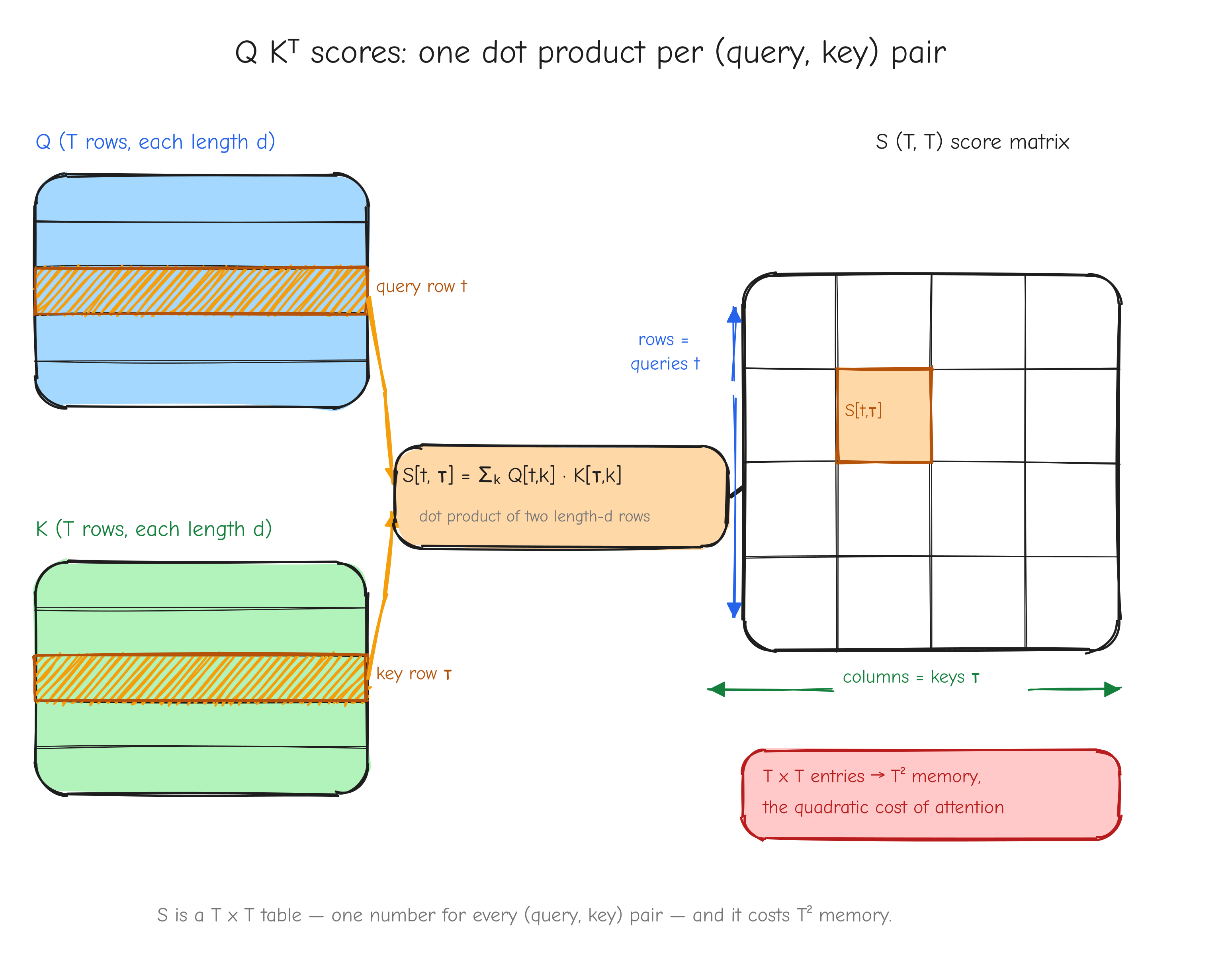

Forward. Y = X W, with X of shape (M, K), W of shape (K, N), and Y of shape (M, N). In index notation, each output entry is a sum over the shared dimension K:

Given. Upstream gradient dY, shape (M, N).

Derive dX. We want dX_{ab}, the gradient with respect to one generic input entry X_{ab}. The chain rule over the whole output is

So the work is the local derivative . Differentiate the sum that defines Y_{ij}:

The term in the k-sum survives only when i = a and k = b. Substitute back into the VJP:

The delta_{ia} killed the i-sum, fixing i = a. Now read the remaining sum as a matrix product. sum_j dY_{aj} W_{bj} = sum_j dY_{aj} (W^T)_{jb} = (dY W^T)_{ab}. So

Derive dW. Same procedure for a generic weight entry W_{ab}. The local derivative:

The VJP:

The delta_{jb} fixed j = b; rewriting X_{ia} as (X^T)_{ai} turns the i-sum into a matrix product. So

X (M,K), W (K,N), Y (M,N). Top half, forward: highlight row a of X and column j of W flowing into entry Y_{aj}, labeled Y_{ij} = sum_k X_{ik} W_{kj}. Bottom half, backward: highlight how dY and W^T combine into dX (row a of dY dotted against row b of W), and X^T and dY combine into dW. Annotate each arrow with the index-sum it represents. The takeaway: the backward index sums are the forward sum read from the other end.The affine form. Y = X W + b with b of shape (N,) broadcast over the M rows is just matmul followed by the bias-add case from the addition op. The matmul node gives dX and dW as above; the add node passes dY straight through to the matmul output and sums dY over the broadcast row axis for the bias. So dX = dY W^T, dW = X^T dY, db = dY.sum(axis=0).

The 3-D batched case. In the model X is usually (B, T, K), not (M, K). The forward X @ W broadcasts the matmul over the leading axes and works unchanged. dX = dY @ W^T also works unchanged. But dW = X^T dY needs care: X now has three axes, and you must contract over both the batch and the time axis, not just one. The fix is to flatten the leading axes first, X.reshape(-1, K), so the contraction is again a clean 2-D matrix product. This flattening is easy to get subtly wrong, and Part 14 covers a roadblock that came from exactly this.

Vectorized form. From tests/ops.py, the matmul op already handles the batched case by flattening:

def matmul(x, W):

Y = x @ W

K, N = W.shape

def bwd(dY):

dx = dY @ W.T

dW = x.reshape(-1, K).T @ dY.reshape(-1, N)

return {'x': dx, 'W': dW}

return Y, bwd

and the affine version adds the bias gradient:

def linear(x, W, b):

Y = x @ W + b

K = W.shape[0]

N = W.shape[1]

def bwd(dY):

dx = dY @ W.T

dW = x.reshape(-1, K).T @ dY.reshape(-1, N)

db = dY.reshape(-1, N).sum(axis=0)

return {'x': dx, 'W': dW, 'b': db}

return Y, bwd

x.reshape(-1, K) flattens every leading axis into one, so x.reshape(-1, K).T @ dY.reshape(-1, N) is the clean 2-D X^T dY regardless of how many batch axes there were. For 2-D input reshape(-1, K) is a no-op and this reduces to the plain X.T @ dY.

Shape check. dx: (M, N) @ (N, K) -> (M, K), the shape of x (or with batch axes, (B, T, N) @ (N, K) -> (B, T, K)). dW: (K, M) @ (M, N) -> (K, N), the shape of W. db: (M, N) summed over axis=0 gives (N,), the shape of b.

Sanity case. Let X = [[1, 2], [3, 4]] and W = [[1, 0], [0, 1]] (the identity). Forward: Y = X W = X = [[1, 2], [3, 4]]. Take dY = [[1, 0], [0, 1]].

dX = dY W^T = dY I = [[1, 0], [0, 1]].

dW = X^T dY = [[1, 3], [2, 4]] [[1, 0], [0, 1]] = [[1, 3], [2, 4]].

Check dW_{00} directly. W_{00} enters Y_{i0} = X_{i0} W_{00} + X_{i1} W_{10}, so partial Y_{i0} / partial W_{00} = X_{i0} and all other partials are 0. Then dW_{00} = sum_i dY_{i0} X_{i0} = dY_{00} X_{00} + dY_{10} X_{10} = 1*1 + 0*3 = 1, matching. Check dX_{00}: X_{00} enters Y_{0j} = X_{00} W_{0j} + X_{01} W_{1j}, so partial Y_{0j} / partial X_{00} = W_{0j}, and dX_{00} = sum_j dY_{0j} W_{0j} = 1*1 + 0*0 = 1, matching dY W^T. Affine spot-check: with the same dY, db = dY.sum(axis=0) = [1, 1], shape (2,) = (N,).

Sum and mean reductions

Forward. Y = X.sum(axis) or Y = X.mean(axis). Take a row sum over the last axis as the concrete case: X is (M, N), Y is (M,) with Y_i = sum_n X_{in}. Mean is the same with a 1/N factor, Y_i = (1/N) sum_n X_{in}, where N is the number of elements reduced over.

Given. Upstream gradient dY, one number per surviving position.

Derive. For the sum, partial Y_i / partial X_{ab} = sum_n delta_{ia} delta_{nb} = delta_{ia}, independent of the column b: every element of row a contributed equally. The VJP gives dX_{ab} = sum_i dY_i delta_{ia} = dY_a. So dX is dY broadcast back over the axis that was summed away. Mean is 1/N times sum, so its gradient is the same broadcast divided by N: dX = broadcast(dY) / N. Both reductions show up inside LayerNorm, where the mean and the variance reduce over the feature axis, so these broadcast-back rules are reused there.

Vectorized form. From tests/ops.py, for the last-axis sum and mean:

def sum_lastaxis(x):

Y = x.sum(axis=-1)

def bwd(dY):

return {'x': np.broadcast_to(dY[..., None], x.shape).copy()}

return Y, bwd

def mean_lastaxis(x):

N = x.shape[-1]

Y = x.mean(axis=-1)

def bwd(dY):

return {'x': np.broadcast_to(dY[..., None], x.shape).copy() / N}

return Y, bwd

dY[..., None] inserts the size-1 axis where the reduction happened, then np.broadcast_to(..., x.shape) stretches it back to x's shape; .copy() is there because broadcast_to returns a read-only view. The full reduction sum_all, used to form the scalar loss in the autograd tests, fills x's shape with the single upstream value:

def sum_all(x):

Y = np.asarray(x, dtype=np.float64).sum()

def bwd(dY):

return {'x': np.full(np.shape(x), dY, dtype=np.float64)}

return Y, bwd

Shape check. dY[..., None] is (M, 1), broadcast to (M, N) = x.shape. Dividing by the scalar N leaves the shape unchanged. sum_all returns np.full(x.shape, dY), exactly x.shape.

Sanity case. Let X = [[1, 2, 3], [4, 5, 6]], reduce over the last axis, N = 3. Sum: Y = [6, 15]; with dY = [1, 10], dX = [[1, 1, 1], [10, 10, 10]]. Bumping X_{00} by epsilon raises Y_0 by epsilon, so L by 1 * epsilon, giving dX_{00} = 1. Mean: Y = [2, 5]; with the same dY, dX = [[1/3, 1/3, 1/3], [10/3, 10/3, 10/3]]. Bumping X_{10} by epsilon raises Y_1 by epsilon/3, so L by 10 * epsilon / 3, giving dX_{10} = 3.3333.

Transpose

Forward. Y = X^T. X is (M, N), Y is (N, M), with Y_{ij} = X_{ji}.

Given. Upstream gradient dY, shape (N, M).

Derive. partial Y_{ij} / partial X_{ab} = partial X_{ji} / partial X_{ab} = delta_{ja} delta_{ib}, so the VJP gives dX_{ab} = sum_{ij} dY_{ij} delta_{ja} delta_{ib} = dY_{ba} = (dY^T)_{ab}. Transpose is its own inverse, so its backward is again a transpose: dX = dY^T. No gradient values change, only the layout.

Vectorized form. From tests/ops.py:

def transpose2d(x):

Y = x.T

def bwd(dY):

return {'x': dY.T}

return Y, bwd

Shape check. dY is (N, M), so dY.T is (M, N), the shape of X.

Sanity case. X = [[1, 2, 3], [4, 5, 6]] (2x3) gives Y = X^T = [[1, 4], [2, 5], [3, 6]] (3x2). Take dY = [[10, 40], [20, 50], [30, 60]]. Then dX = dY^T = [[10, 20, 30], [40, 50, 60]] (2x3). Check: X_{01} = 2 lands at Y_{10}, so bumping X_{01} by epsilon raises Y_{10} by epsilon, changing L by dY_{10} * epsilon = 20 * epsilon, giving dX_{01} = 20.

Reshape

Forward. Y = reshape(X, new_shape). Y holds exactly the same elements as X in the same flat row-major order; only the index layout changes. There is a fixed bijection between an X index and a Y index with derivative 1 along that pairing and 0 everywhere else.

Given. Upstream gradient dY, shape new_shape.

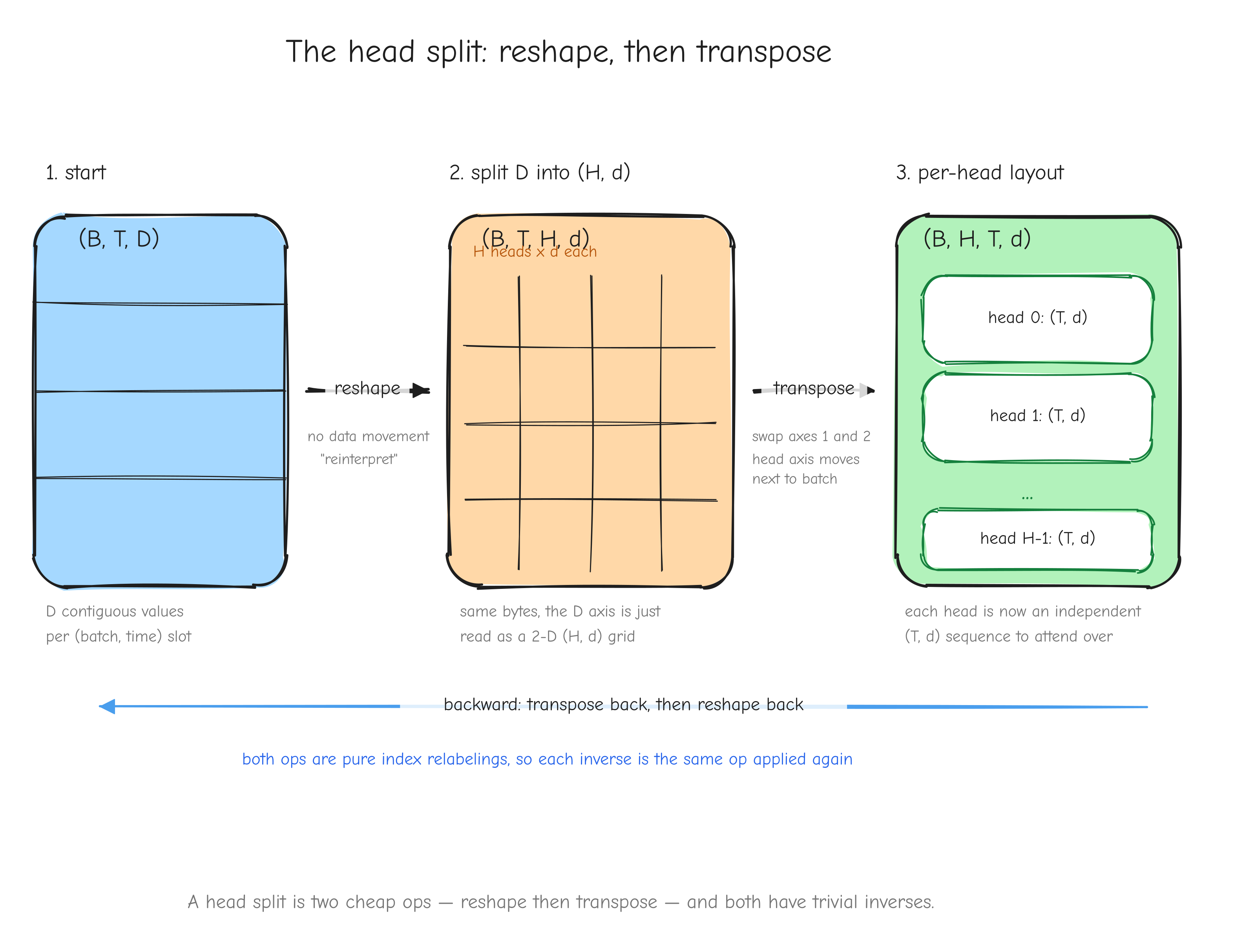

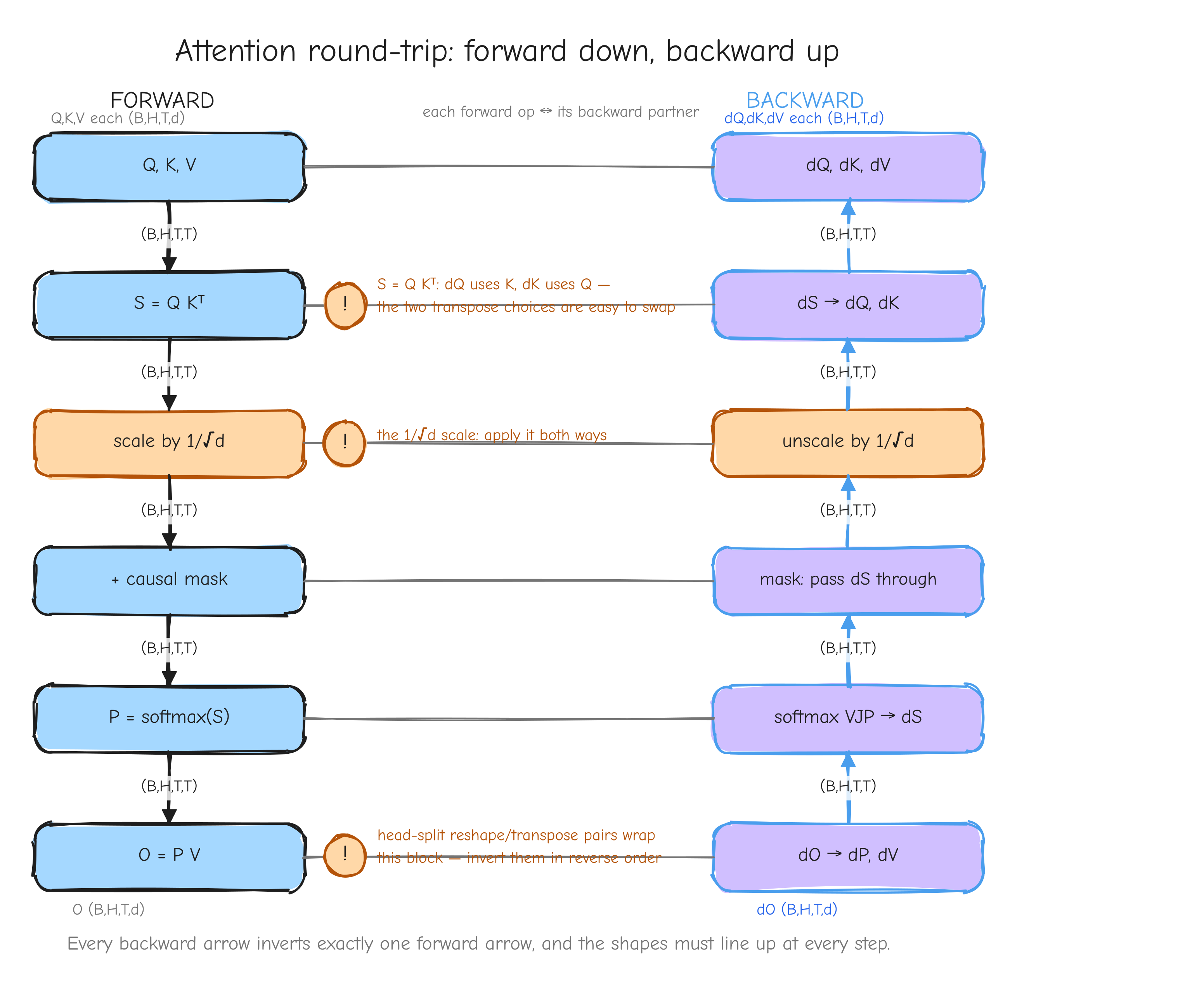

Derive. The VJP routes each upstream entry back to the input position it came from, which is exactly reshaping dY back to X's shape: dX = reshape(dY, X.shape). The gradient values are untouched, only the layout is reverted. This is the head-split in attention: the forward reshapes (B, T, D) toward (B, T, H, d), and the backward reshapes the gradient back.

Vectorized form. From tests/ops.py:

def reshape_op(x, newshape):

Y = x.reshape(newshape)

def bwd(dY):

return {'x': dY.reshape(x.shape)}

return Y, bwd

Shape check. dY.reshape(x.shape) has shape x.shape by construction, valid because reshape preserves the total element count.

Sanity case. X = [1, 2, 3, 4] (shape (4,)) reshaped to Y = [[1, 2], [3, 4]] (shape (2, 2)). Take dY = [[7, 8], [9, 10]]. Then dX = reshape(dY, (4,)) = [7, 8, 9, 10]. Check: X_2 = 3 maps to Y_{10}; bumping X_2 by epsilon raises Y_{10} by epsilon, changing L by dY_{10} * epsilon = 9 * epsilon, giving dX_2 = 9.

Multi-axis permute

Forward. Y = transpose(X, p) for a permutation p of the axes. The attention head-split uses p = (0, 2, 1, 3), swapping the head axis and the time axis of a (B, H, T, d)-style tensor.

Given. Upstream gradient dY, shape p applied to X's shape.

Derive. Same reasoning as transpose: each output index is a relabeling of an input index, so the VJP relabels the gradient back. The backward applies the inverse permutation, dX = transpose(dY, p_inv), where p_inv[p[i]] = i. For p = (0, 2, 1, 3) the permutation is its own inverse, so the backward uses the same (0, 2, 1, 3).

Vectorized form. From tests/ops.py, the specific attention permutation:

def permute_bhtd(x):

Y = x.transpose(0, 2, 1, 3)

def bwd(dY):

return {'x': dY.transpose(0, 2, 1, 3)}

return Y, bwd

For a general p the backward is np.transpose(dY, np.argsort(p)), since np.argsort(p) produces p_inv.

Shape check. Applying p_inv to dY's shape, which is p applied to X's shape, recovers X's shape. For the involution (0, 2, 1, 3), applying it twice returns the original.

Sanity case. Take X of shape (1, 2, 3, 4). The forward gives Y of shape (1, 3, 2, 4): axes 1 and 2 swapped. A dY of shape (1, 3, 2, 4) goes back through dY.transpose(0, 2, 1, 3) to shape (1, 2, 3, 4), matching X. The value at dX[b, h, t, e] is dY[b, t, h, e]: the same number, moved back to the position it came from.

Your turn

Part 4: Derivations Tier 3, the loss layer

Tiers 1 and 2 built the muscle: element-wise ops, matmul, reductions. This tier is shorter, but it is where the backward pass actually begins. The loss is a single scalar at the very top of the model. Backpropagation starts there and flows down. So the gradient this tier produces, the partial of L with respect to the logits, is the seed: every other gradient in GPT-2 is a consequence of it.

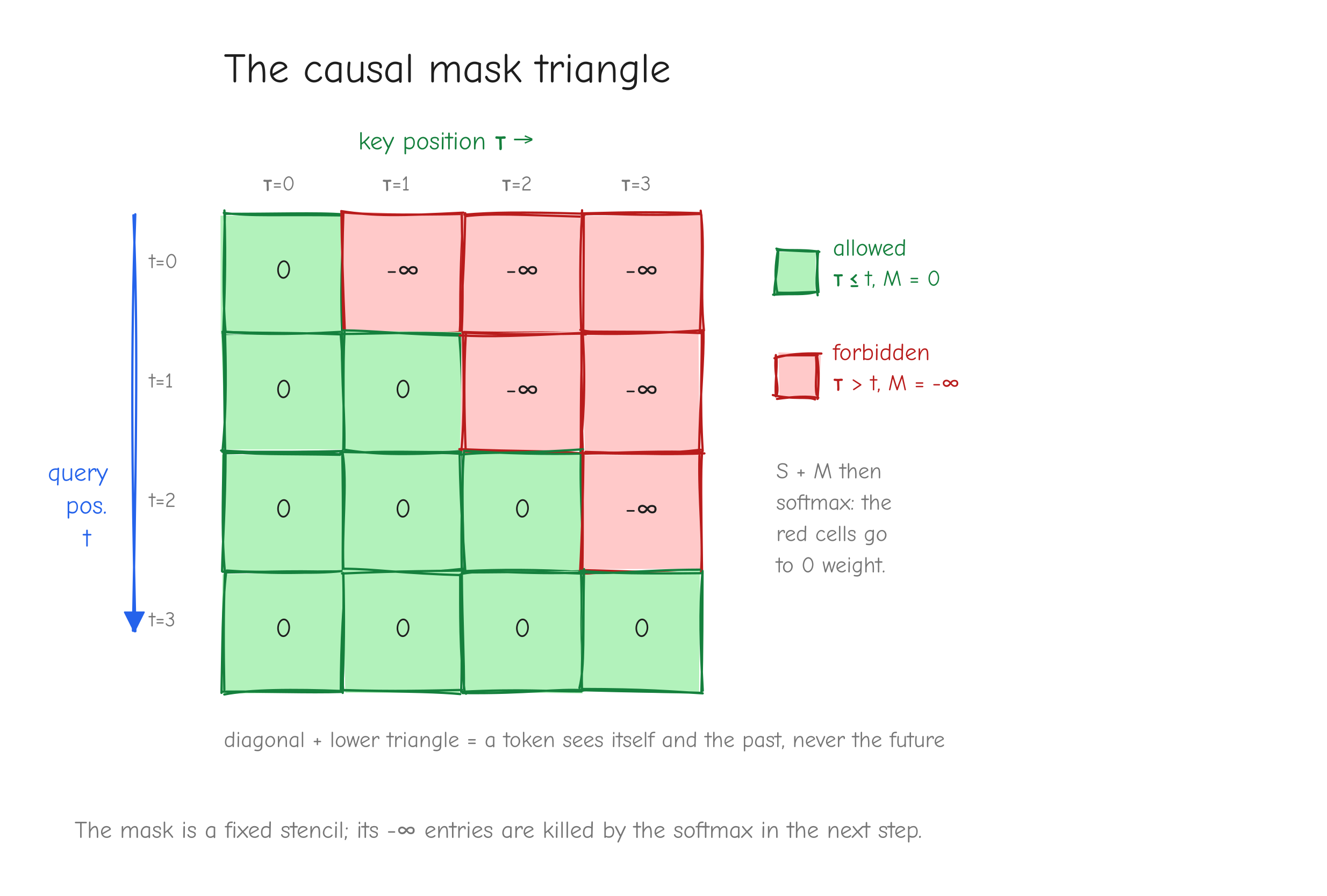

Three operations get us there. Softmax turns logits into a probability distribution. Cross-entropy turns that distribution plus a target into a scalar loss. Then we fuse the two, and the fused form collapses to one of the cleanest results in the whole course: dx = p - y.

We derive softmax and cross-entropy as standalone ops first, because we need the standalone softmax again later inside attention. Then we compose them and watch the algebra simplify.

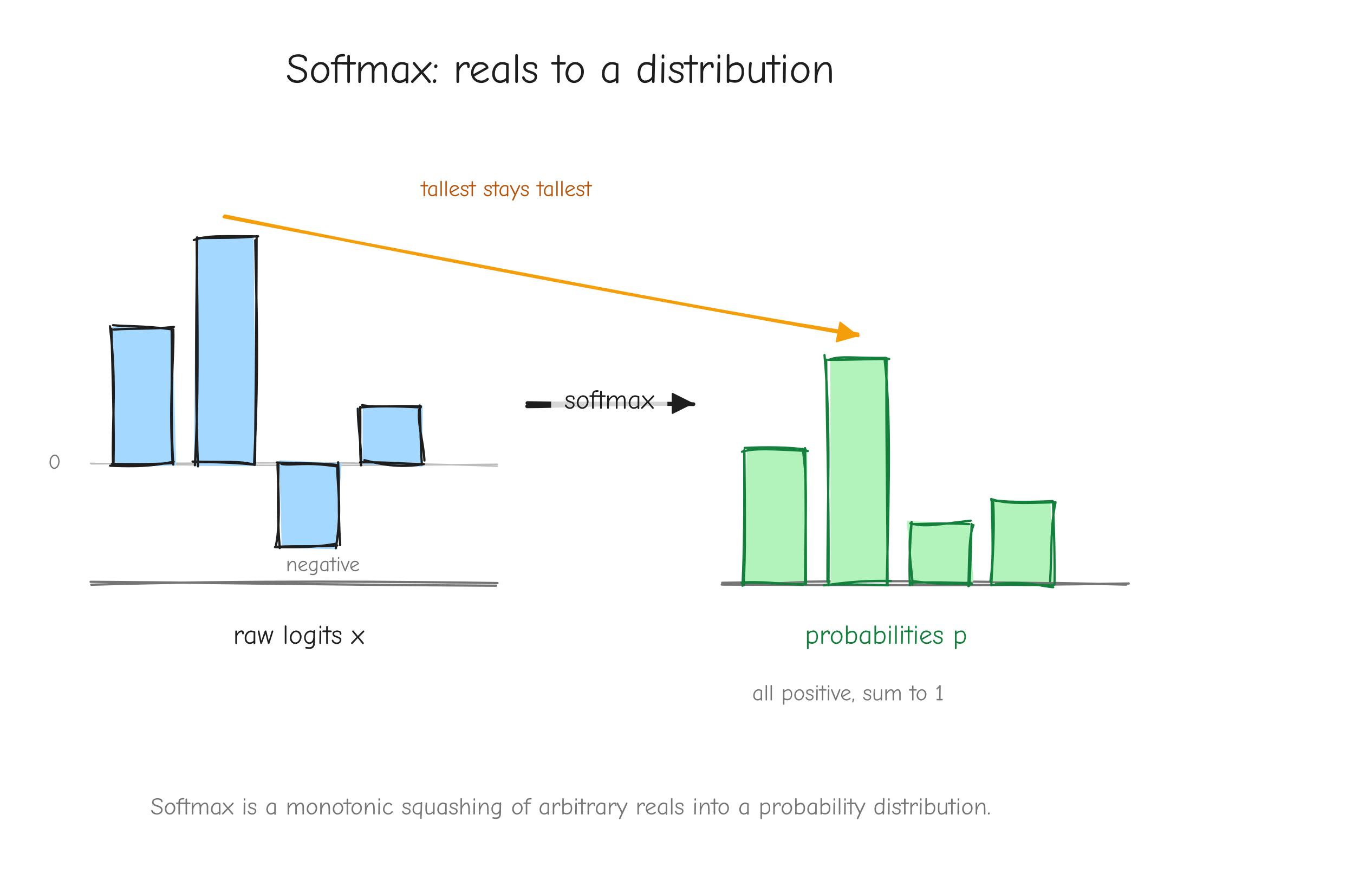

Softmax

Forward. For a single logit vector x of shape (V,):

The output p has shape (V,), every p_i > 0, and the entries sum to 1. In practice softmax is applied row-wise to a (B, T, V) tensor of logits, or to attention scores of shape (..., T), always over the last axis.

One detail before the derivation: numerical stability. Computing exp(x_i) directly overflows when x_i is large. The fix is to subtract the row max m = max_k x_k before exponentiating:

The e^{-m} factors cancel top and bottom. The output p is identical, so the gradient is identical too. Treat m as a constant when differentiating: since the function value is unchanged, the shift contributes nothing to the derivative.

x. An arrow labeled "softmax" pointing to a bar chart on the right where all bars are positive, sum to 1, and the tallest input logit became the tallest probability. Label the right chart p. Takeaway: softmax is a monotonic squashing of arbitrary reals into a distribution.Given. Upstream gradient dp of shape (V,), the same shape as p.

Derive. This is a deep op. We do it in two steps: first the full Jacobian partial p_i / partial x_j, then the vector-Jacobian product that contracts it against dp.

Step 1, the Jacobian. Write S = sum_k exp(x_k) so that p_i = exp(x_i) / S. Note that S depends on every input, so partial S / partial x_j = exp(x_j). There are two cases.

Case i = j. Both the numerator exp(x_i) and the denominator S depend on x_i, so we use the quotient rule:

Case i != j. The numerator exp(x_i) does not depend on x_j, only the denominator does:

Both cases fold into one formula using the Kronecker delta:

Check it. Set i = j: you get p_i(1 - p_i). Set i != j: you get p_i(0 - p_j) = -p_i p_j. Both match.

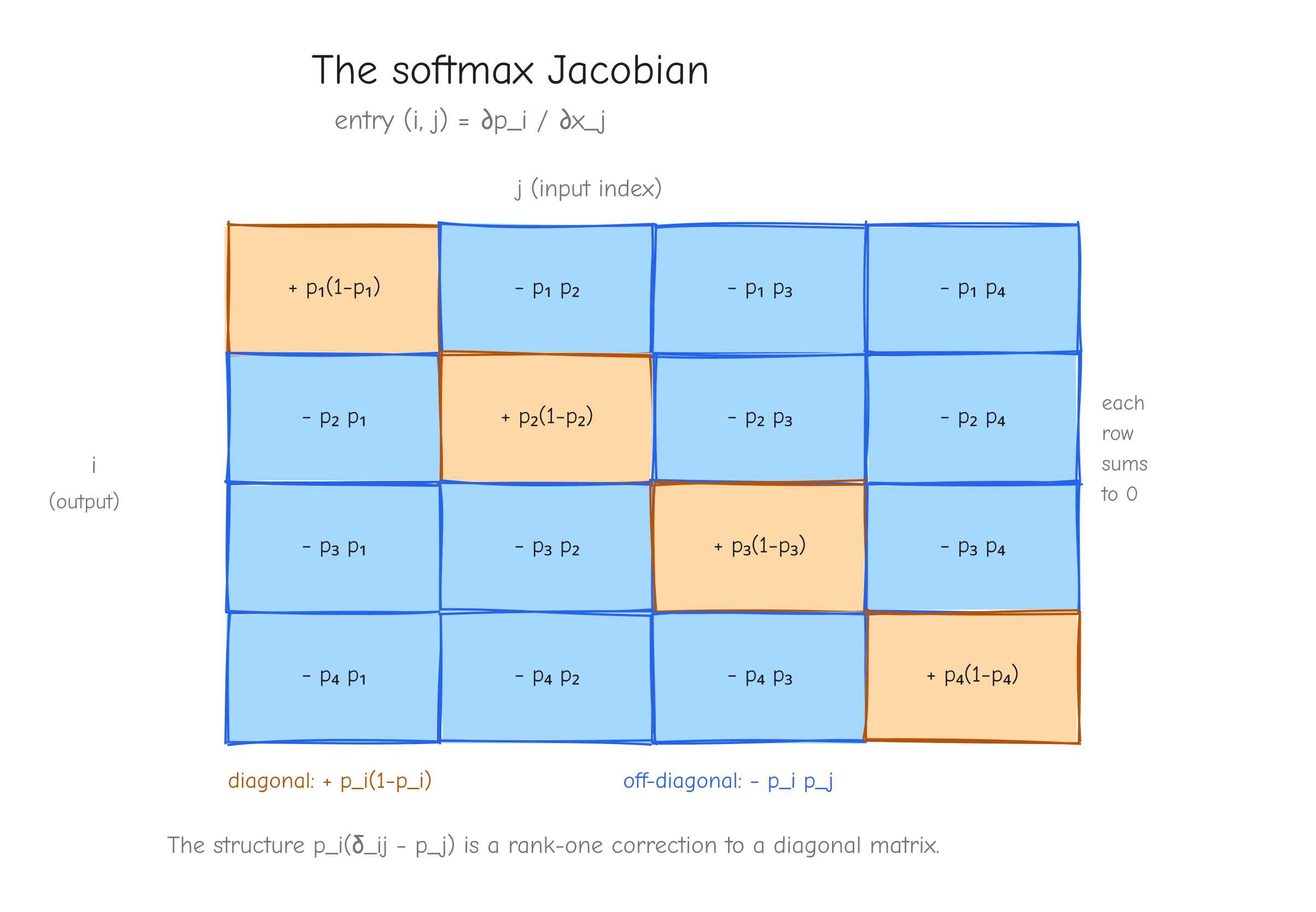

V x V grid (use V = 4) showing the Jacobian matrix partial p_i / partial x_j. Color the diagonal cells one color labeled p_i(1 - p_i) with a "+" sign, and the off-diagonal cells another color labeled -p_i p_j with a "-" sign. Annotate that every row sums to zero (raising one logit and the redistribution cancel). Takeaway: the structure p_i(delta_ij - p_j) is a rank-one correction to a diagonal.Step 2, the vector-Jacobian product. We never want the full V x V Jacobian as a materialized matrix. We want dx, and the chain rule gives it by contracting dp against the Jacobian. Output index j depends on input index i through partial p_j / partial x_i = p_j(delta_ji - p_i):

The first sum collapses: delta_ji is nonzero only when j = i, so it picks out the single term dp_i p_i. In the second sum, p_i does not depend on the summation index j, so factor it out: p_i sum_j dp_j p_j = p_i (dp . p), where dp . p is a single scalar, the dot product of the upstream gradient with the probability vector. Putting the two together:

In vector form:

This is the standalone softmax backward. The factor dp . p is a scalar per row, computed from whatever upstream gradient happens to arrive. We need this exact form again in Part 6, Attention and composition: the softmax inside attention receives its upstream gradient from the weights @ V matmul, not from cross-entropy. There is no shortcut there. Do not assume dp is anything special.

Vectorized form. Copied from tests/ops.py, softmax_op:

def softmax_op(x):

"""Item 8. p = softmax(x) over the last axis (numerically stable).

dx = p (.) (dp - (dp . p)), the per-row dot taken over the last axis."""

z = x - x.max(axis=-1, keepdims=True)

with np.errstate(under='ignore'):

e = np.exp(z)

p = e / e.sum(axis=-1, keepdims=True)

def bwd(dp):

dx = p * (dp - np.sum(dp * p, axis=-1, keepdims=True))

return {'x': dx}

return p, bwd

The keepdims=True on the sum is what makes dp . p broadcast back correctly: it stays shape (..., 1) so it subtracts from every column of dp.

Shape check. p and dp are both (V,) (or (..., V) batched). The term np.sum(dp * p, axis=-1, keepdims=True) is (..., 1), a scalar per row. Subtracting it from dp broadcasts to (..., V), and the element-wise product with p keeps (..., V), the shape of x. Correct.

Sanity case. Let x = [0, ln 2], length 2. Then exp(x) = [1, 2], S = 3, so p = [1/3, 2/3]. Take upstream dp = [1, 0].

dp . p = 1 * 1/3 + 0 * 2/3 = 1/3.dx_1 = p_1(dp_1 - dp.p) = 1/3 (1 - 1/3) = 1/3 * 2/3 = 2/9.dx_2 = p_2(dp_2 - dp.p) = 2/3 (0 - 1/3) = -2/9.

So dx = [2/9, -2/9]. Cross-check against the explicit Jacobian: partial p_1 / partial x_1 = p_1(1 - p_1) = 1/3 * 2/3 = 2/9, and partial p_1 / partial x_2 = -p_1 p_2 = -2/9. With dp = [1, 0], dx_1 = dp_1 (partial p_1 / partial x_1) + dp_2 (partial p_2 / partial x_1) = 1 * 2/9 + 0 = 2/9, and dx_2 = 1 * (-2/9) + 0 = -2/9. The VJP formula and the explicit Jacobian agree.

Cross-entropy from probabilities

Forward. Given a probability vector p and a target y, both shape (V,):

p has p_i > 0 and sums to 1. y is the target: a one-hot vector in the usual case, or more generally any distribution that sums to 1 (label smoothing, distillation). L is a scalar.

Given. The loss is the scalar output, so the upstream gradient is dL = partial L / partial L = 1.

Derive. This one is short. Differentiate L with respect to each p_i. Only the i-th term of the sum contains p_i:

So dp_i = -y_i / p_i. The target y is a constant, not a variable we differentiate.

One-hot special case. If y is one-hot at the true class c (so y_c = 1 and y_i = 0 otherwise), then:

Only the true-class probability gets a nonzero gradient, and L itself reduces to -log p_c.

Vectorized form. Copied from tests/ops.py, cross_entropy_from_probs:

def cross_entropy_from_probs(p, y):

"""Item 9. L = -sum_i y_i log(p_i) (summed over all elements).

dp = -y / p. y (the target) is treated as a constant."""

L = -np.sum(y * np.log(p))

def bwd(dL):

return {'p': (-y / p) * dL}

return L, bwd

Shape check. y and p are both (V,), so -y / p is (V,), the shape of p. Correct.

Sanity case. Take p = [1/3, 2/3] and one-hot target y = [1, 0], true class c = 1.

L = -(1 * log(1/3) + 0 * log(2/3)) = -log(1/3) = log 3 ~ 1.0986.dp_1 = -y_1 / p_1 = -1 / (1/3) = -3.dp_2 = -y_2 / p_2 = 0.

So dp = [-3, 0]. This matches the one-hot rule: -1/p_c = -3 at the true class, zero elsewhere.

Fused softmax plus cross-entropy

This is the payoff. The two ops above are almost never used separately for the loss. They are composed: logits go in, a scalar loss comes out, and the gradient that comes back is p - y. We derive it two ways, because seeing both is the point.

Forward. Compose softmax then cross-entropy, for a single token with logit vector x of shape (V,):

Input is logits x, target y is one-hot (or a distribution summing to 1), output L is a scalar.

Given. dL = 1. The scalar loss is the root of the backward pass; nothing is upstream of it.

Derive. Method A, substitute and expand the log. Use log p_i = x_i - log sum_k exp(x_k):

Since y sums to 1, the second term is just log sum_k exp(x_k). So L = -sum_i y_i x_i + log sum_k exp(x_k). Differentiate with respect to x_j. The first term contributes -y_j. The second term is the log-sum-exp, whose derivative is exactly softmax:

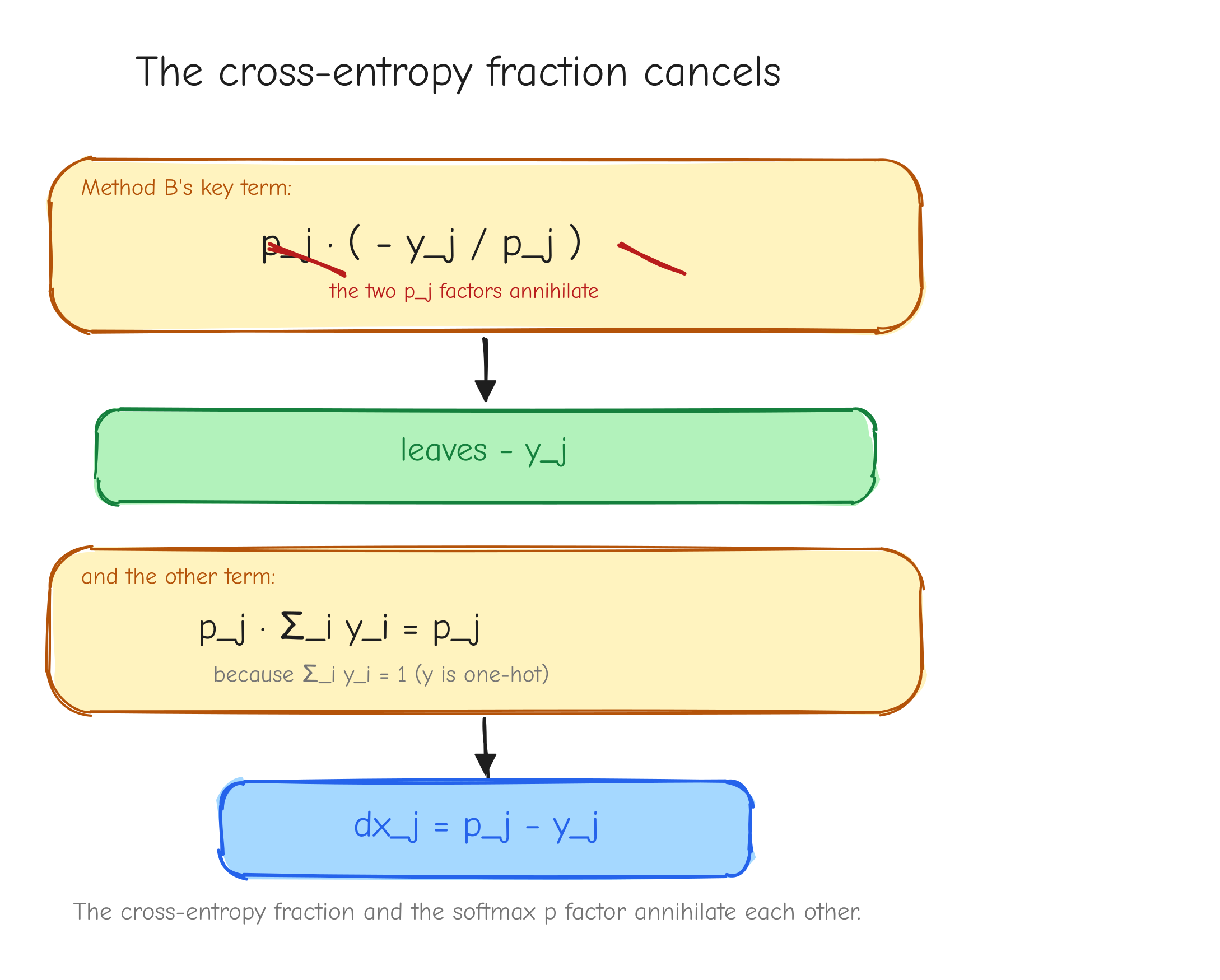

Method B, chain through the two ops and watch the fractions cancel. From cross-entropy, the upstream into softmax is dp_i = -y_i / p_i. Feed that into the standalone softmax VJP dx_j = p_j(dp_j - sum_i dp_i p_i):

Look at the two pieces inside the parentheses after multiplying by p_j. The first: p_j * (-y_j / p_j) = -y_j. The p_j cancels the 1/p_j from cross-entropy exactly. The second: p_j * sum_i y_i = p_j * 1 = p_j. So dx_j = -y_j + p_j = p_j - y_j. Same answer.

p_j multiplying (-y_j / p_j). Strike through the two p_j factors with a diagonal line, leaving -y_j. Below it, show p_j * sum_i y_i collapsing to p_j because sum y_i = 1. Final line: dx_j = p_j - y_j. Takeaway: the messy cross-entropy fraction and the softmax p factor annihilate each other.Batch and averaging, the 1/N factor. In GPT-2 training the reported loss is the mean over all predicted tokens, not a single token. This introduces a factor that is easy to drop and expensive to drop. Derive it explicitly.

Let there be N tokens, indexed by n. Each has its own logit vector x^(n) of shape (V,), its own probabilities p^(n) = softmax(x^(n)), and its own one-hot target y^(n). The total loss is the mean of the per-token losses:

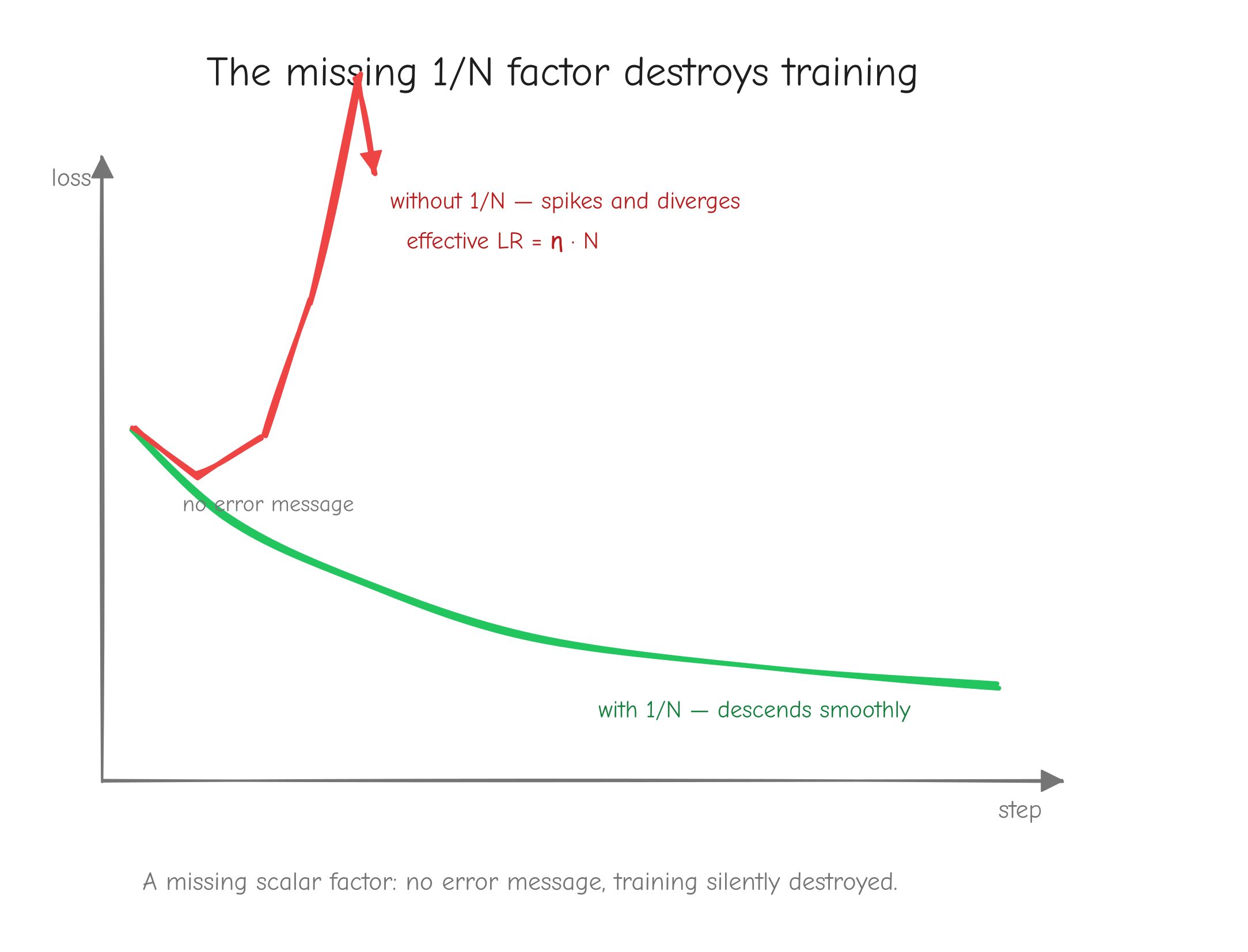

The logits of token n affect only L_n. There is no cross-token coupling in the loss layer (the coupling happens earlier, inside attention; here each token's logits are independent). So when we differentiate L with respect to one token's logits, only that token's term survives, and it carries the 1/N from the mean:

The subtraction and division are per token. N is the number of tokens averaged over, typically N = B * T, every position in every sequence. If some positions are masked or ignored (padding, ignore_index), N is the count of non-ignored tokens, and the ignored rows of dlogits are set to zero.

This dlogits, shape (B, T, V) or (N, V) flattened, is the seed gradient that kicks off the entire backward pass through GPT-2.

Vectorized form. Copied from tests/ops.py, softmax_ce_fused:

def softmax_ce_fused(logits, targets):

"""Item 10. Fused softmax + cross-entropy, mean-reduced over all B*T tokens.

L = mean_n CE(softmax(logits_n), onehot(targets_n)).

dlogits = (p - onehot) / N, N = B*T."""

B, T, V = logits.shape

N = B * T

flat = logits.reshape(N, V)

tgt = targets.reshape(N)

z = flat - flat.max(axis=-1, keepdims=True)

with np.errstate(under='ignore'):

e = np.exp(z)

p = e / e.sum(axis=-1, keepdims=True) # (N, V)

rows = np.arange(N)

L = -np.mean(np.log(p[rows, tgt]))

def bwd(dL):

dflat = p.copy()

dflat[rows, tgt] -= 1.0

dflat = dflat / N * dL

return {'logits': dflat.reshape(B, T, V)}

return L, bwd

Note how the backward builds p - y_onehot without ever materializing the one-hot matrix: it copies p, then subtracts 1 only at the true-class column of each row. That is p - y for one-hot y. Then / N, then * dL.

Shape check. logits is (B, T, V), flattened to (N, V). p is (N, V). Subtracting 1 in place at the target columns keeps (N, V). Dividing by the scalar N keeps (N, V). Reshaping back gives (B, T, V), the shape of logits. Correct.

Sanity case. Single token: x = [0, ln 2], so p = [1/3, 2/3]. One-hot target y = [1, 0], true class 1.

dx = p - y = [1/3 - 1, 2/3 - 0] = [-2/3, 2/3].

Cross-check via Method B: dp = -y / p = [-3, 0]. Softmax VJP: dp . p = -3 * 1/3 + 0 * 2/3 = -1. Then dx_1 = p_1(dp_1 - dp.p) = 1/3(-3 - (-1)) = 1/3(-2) = -2/3, and dx_2 = p_2(dp_2 - dp.p) = 2/3(0 - (-1)) = 2/3. Both routes agree, and a finite-difference check on L = -log p_{class 1} gives dx ~ [-0.6667, 0.6667], matching.

Now put this token in a batch of N = 2 averaged tokens:

Exactly half the single-example gradient. That is the 1/N factor in action.

Your turn

Part 5: Derivations Tier 4 to 5, normalization, embeddings, weight tying

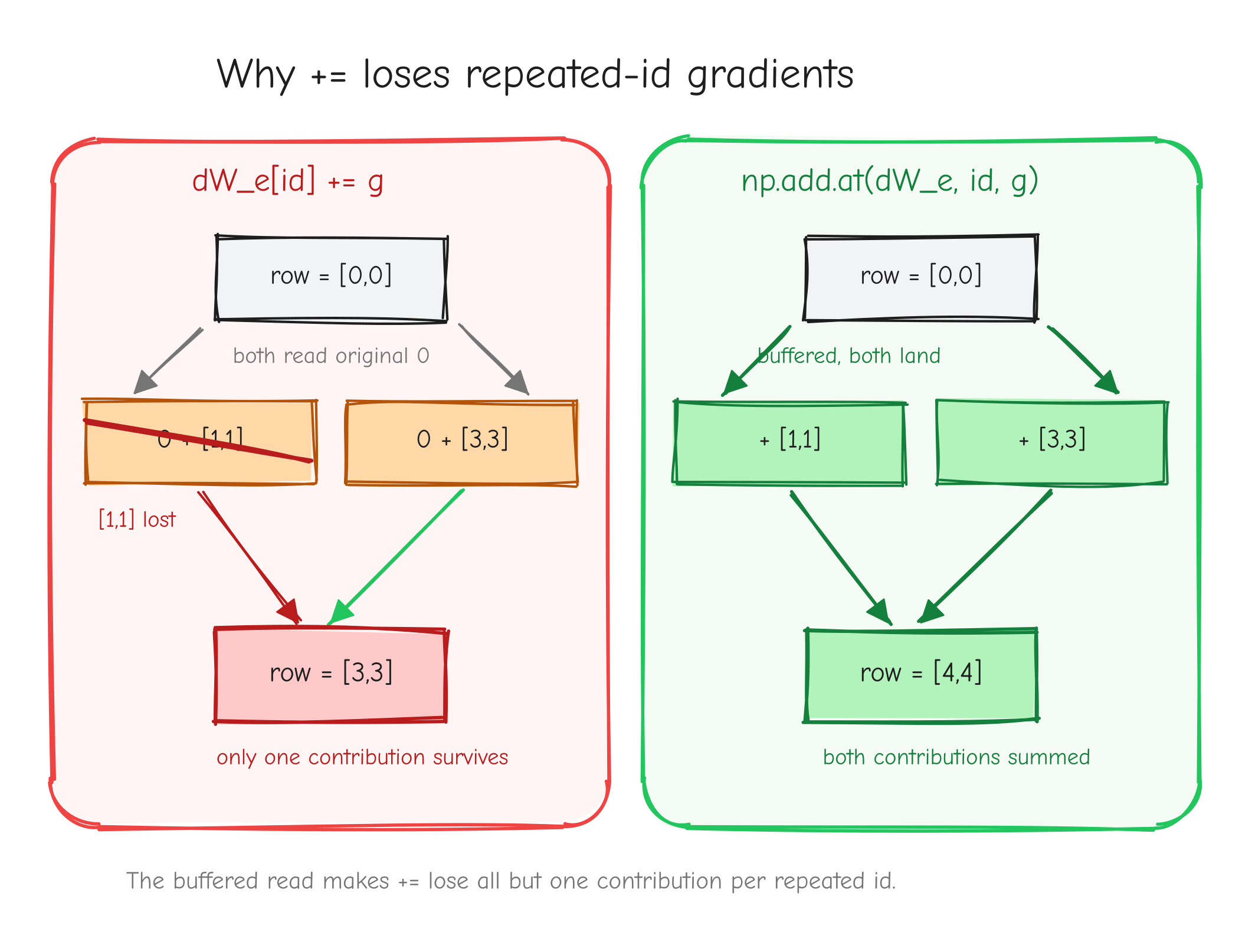

Four operations here, and they split cleanly by difficulty. LayerNorm is the hardest derivation in the course so far: one input feeds the output through three channels at once, and the work is keeping those channels straight without dropping a term. Then come the embeddings, which are not hard calculus but hide two of the most common silent bugs in a from-scratch GPT: the np.add.at trap and the weight-tying accumulation. Get those wrong and nothing crashes. The model just trains worse and you never know why.

We keep the same discipline: forward equation with shapes, the upstream gradient we are handed, the derivation in index notation, the vectorized form copied from tests/ops.py, a shape check, and a worked number.

Tier 4: normalization

LayerNorm

Forward. LayerNorm normalizes over the last dimension. For one feature vector (the operation is applied independently to every position of a tensor):

The variance is the biased one (divide by , not ). The parameters are learnable and shared across all positions: the same vector is broadcast everywhere. Per position, and are scalars. Write for the normalizing standard deviation, so .

Given. Upstream gradient , same shape as : , or for a single position.

Derive. We do the easy parameters first, then spend the rest of the section on .

The easy params: and . For a single position, . The partials are immediate: and . The VJP for one position:

But and are shared across every position. The same vector was broadcast up to in the forward pass, so by the broadcasting backward rule (a tensor broadcast to a larger shape gets its gradient summed back over the broadcast axes) we sum the per-position contributions over batch and sequence:

Each is shape . That is the whole story for the parameters.

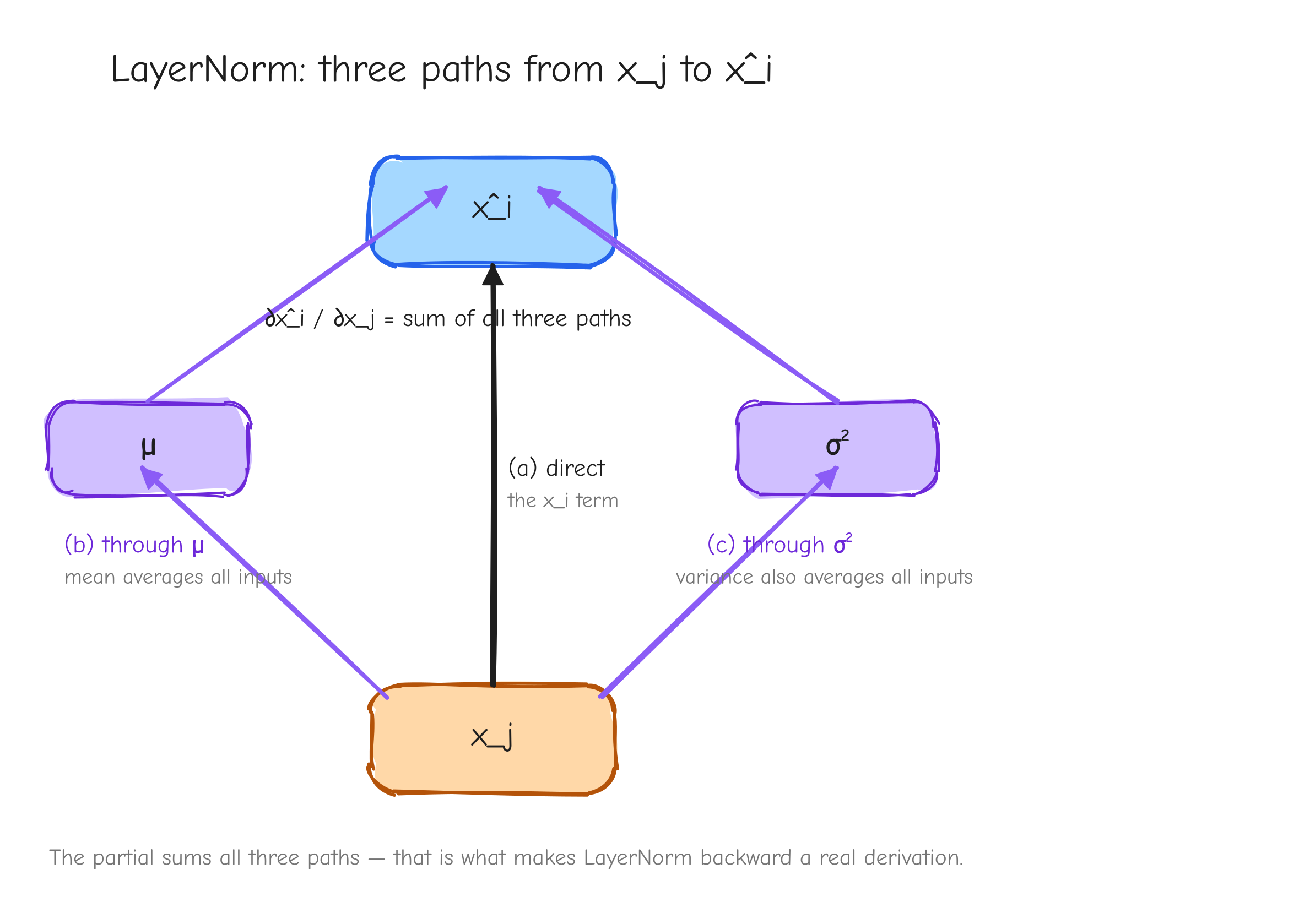

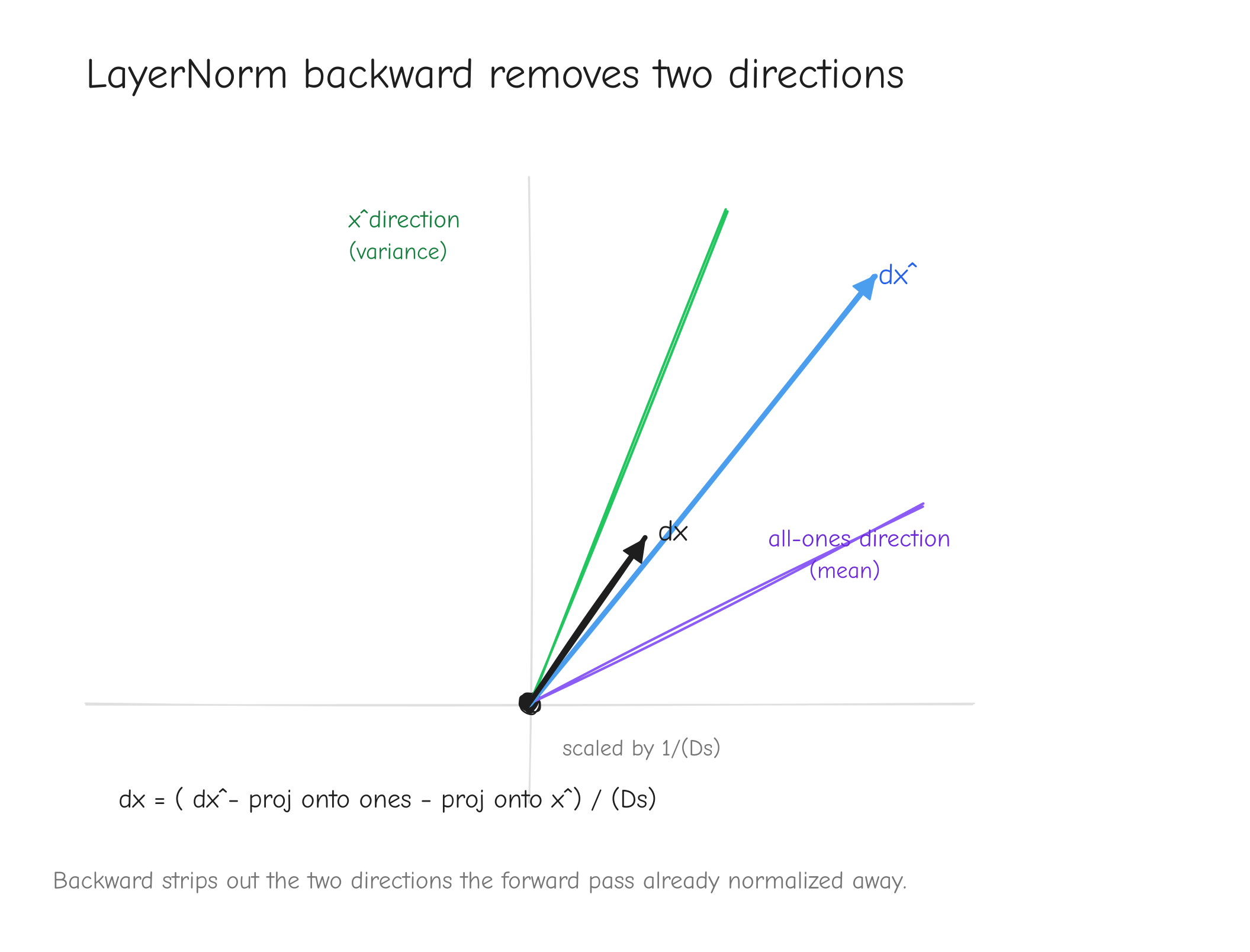

The hard part: . This is where LayerNorm earns its reputation. We build it in three moves.

Move 0, peel off . Since and does not depend on , we have , so

Now we need from , which means the Jacobian . Here is why it is hard: and are both averages over the whole vector, so every moves every . There is no clean per-component story. One input nudges the output through three separate channels.

Move 1, the ingredients. We need how and depend on a single . The mean is easy:

The variance takes care, because and itself depends on :

Split the sum. The first piece, . The second piece, , because by the definition of . So

Then , so by the chain rule

Move 2, the three contributions to . Write and apply the product rule over the two -dependent factors:

The first piece: . That single expression already holds two of the three channels:

The second piece: , so

Putting all three together:

Term (c) cleans up. Since , the product , so (c) .

Move 3, stitch the VJP. The chain rule gives . Use the Jacobian formula with output index and input index , so :

Distribute the sum into three pieces and simplify each:

Piece 1: the collapses the sum to the single term . Piece 2: nothing inside depends on , it is one scalar subtracted from every component. Piece 3: and come out of the -sum, leaving the scalar .

Factor out of all three (for piece 1, rewrite ):

Both sums run over the normalized (last) dim only. For a batched tensor they are sum(..., axis=-1, keepdims=True), and each position is handled independently.

Vectorized form (full tensor , sums over the last axis), copied from tests/ops.py:

def layernorm(x, gamma, beta, eps):

D = x.shape[-1]

mu = x.mean(axis=-1, keepdims=True)

xc = x - mu

var = np.mean(xc * xc, axis=-1, keepdims=True)

s = np.sqrt(var + eps)

xhat = xc / s

y = gamma * xhat + beta

lead = tuple(range(x.ndim - 1))

def bwd(dy):

dgamma = np.sum(dy * xhat, axis=lead)

dbeta = np.sum(dy, axis=lead)

dxhat = gamma * dy

sum_dxhat = np.sum(dxhat, axis=-1, keepdims=True)

sum_dxhat_xhat = np.sum(dxhat * xhat, axis=-1, keepdims=True)

dx = (1.0 / (D * s)) * (D * dxhat - sum_dxhat - xhat * sum_dxhat_xhat)

return {'x': dx, 'gamma': dgamma, 'beta': dbeta}

return y, bwd

Here lead is (0, 1) for a (B,T,D) input: the batch and sequence axes that and were broadcast over. var keeps its trailing dim, shape , so s broadcasts cleanly against the tensors.

Shape check.

- : is broadcast over , is , so is .

- : sum of over axes gives , the shape of .

- : same, , the shape of .

- : is ;

sum_dxhatis and broadcasts; is ; the per-position prefactor times gives , the shape of .

Sanity case. Single position, . Take

The is chosen so is a perfect square and the arithmetic stays exact. The derivation holds for any .

Forward.

- .

- deviations ; .

- , so .

- .

- .

Backward. Take upstream .

- .

- .

- .

- .

- .

- prefactor .

Apply the compact form, :

- .

- .

- .

So . The check: , as it must be. LayerNorm output is invariant to adding a constant to every input, so the gradient has no all-ones component. Finite-difference gradient checking returns , matching exactly.

To see the compact form was not a lucky shortcut, evaluate straight from the raw three-term Jacobian with , , , , :

| (a) | (b) | (c) | (a)+(b)+(c) | sum | |

|---|---|---|---|---|---|

| 1 | |||||

| 2 | |||||

| 3 |

Sum: . The raw sum and the compact form agree.

Tier 5: embeddings and weight tying

These three operations are calculus-light. The work is not the derivative; it is the NumPy.

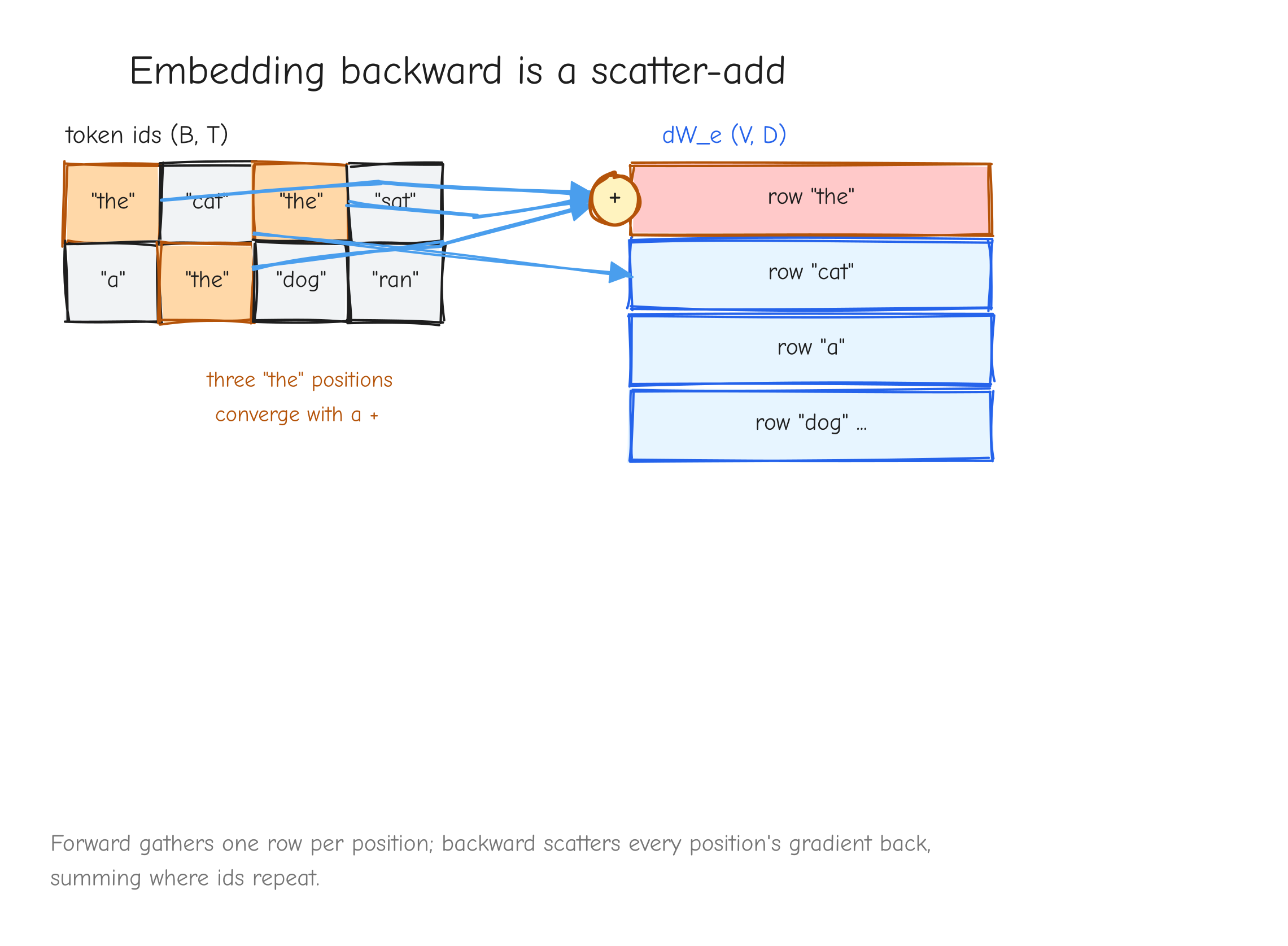

Embedding lookup

Forward.

- , the embedding table (parameter).

- , integer token ids in (constant, not differentiated).

- , the gathered embeddings.

This is a pure gather: row of is copied into output slot .

Given. Upstream gradient .

Derive. Since is not differentiable, the only gradient is , shape . Write the forward with an explicit selector. For one output element:

The partial of one output with respect to one table entry:

The VJP sums the upstream gradient against this Jacobian over all output indices :

The collapses the -sum to :